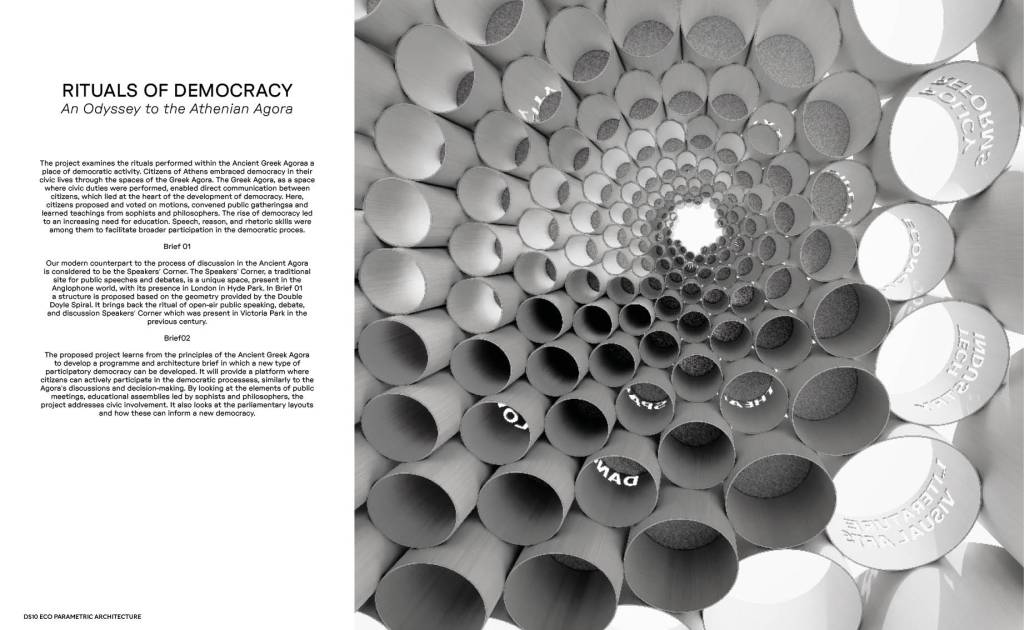

ECO-PARAMETRIC ARCHITECTURE:

MATERIAL BASED RITUALS AND ECONOMIES

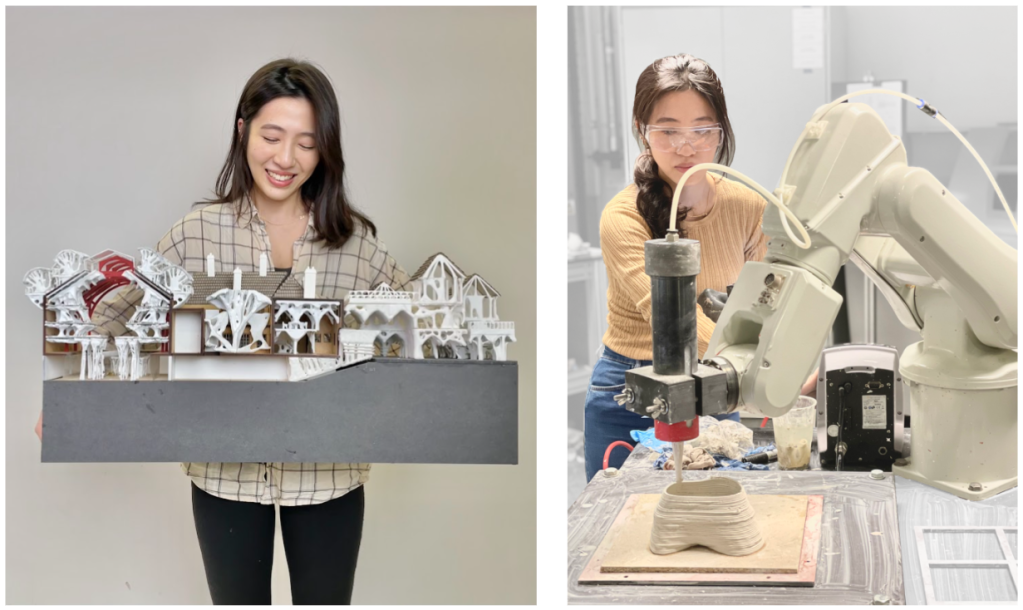

We are back! 10 years as DS10 (Diploma Studio 10). We STILL Want To Learn. Join our studio at the University of Westminster with Toby Burgess and Arthur Mamou-Mani. This year we will be looking at Temples and Sacred Spaces, using A.I. and parametric tools, fusing nature and architecture in London’s discarded office buildings.

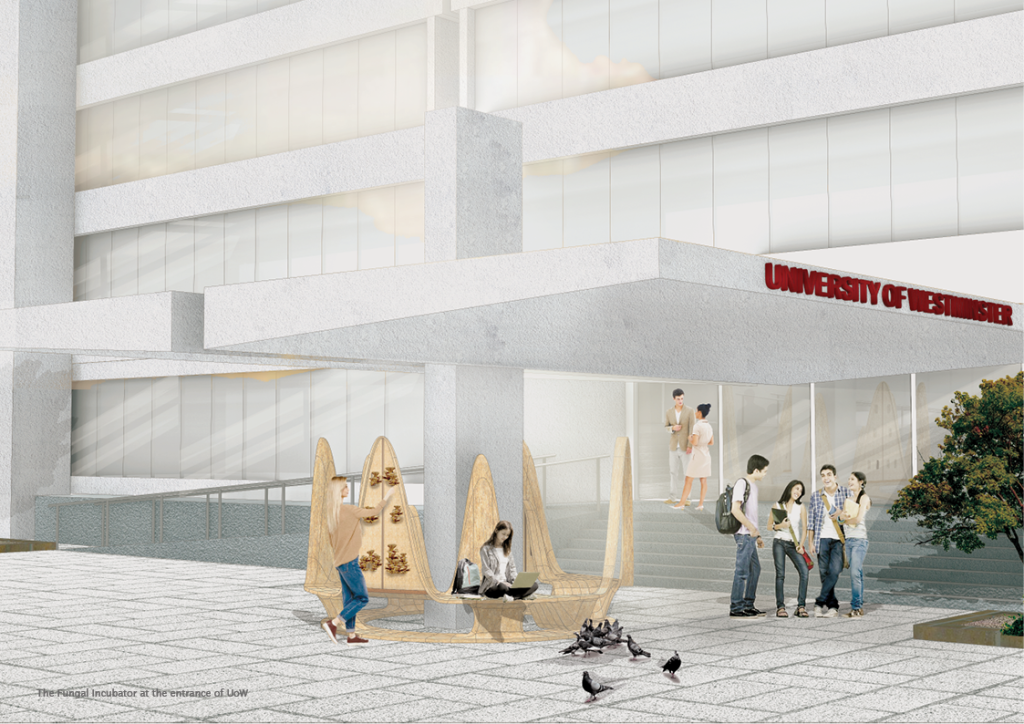

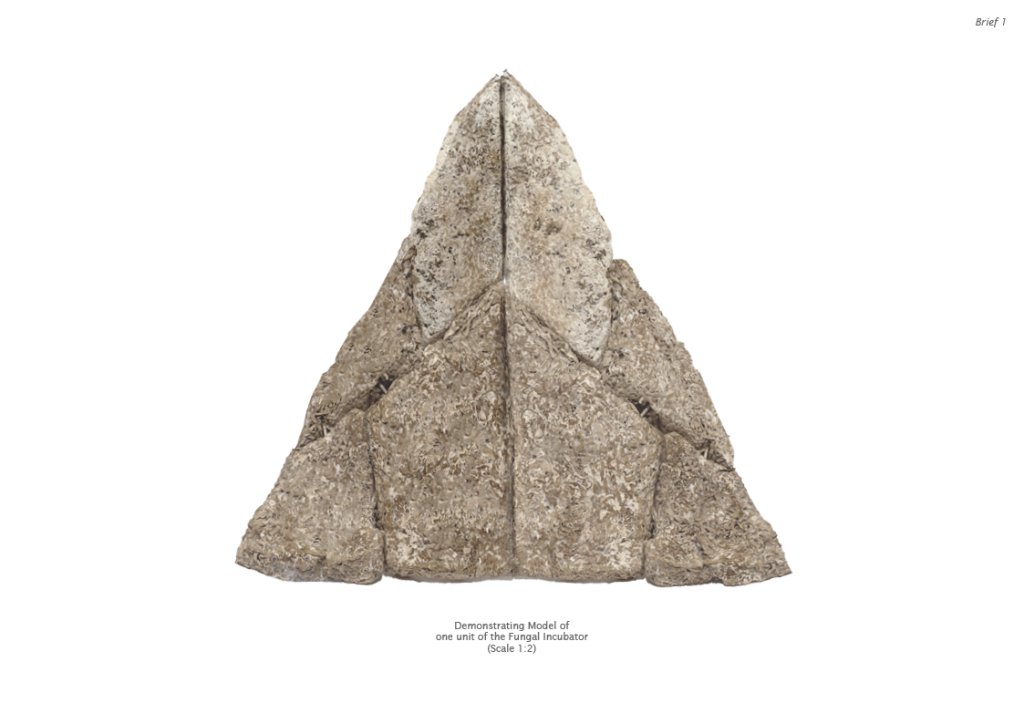

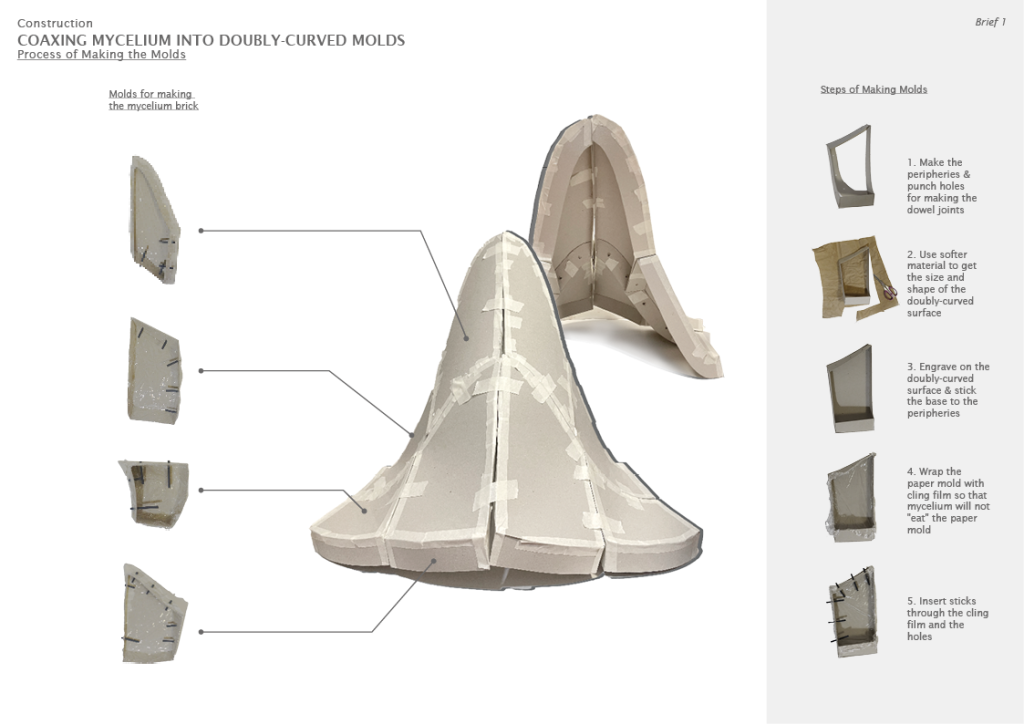

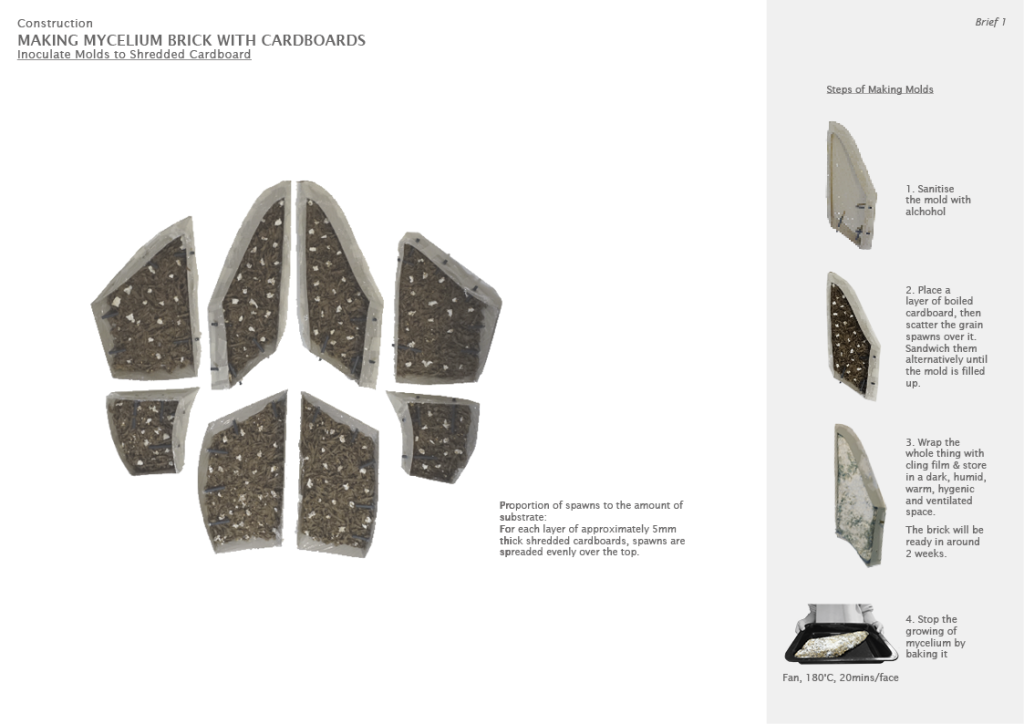

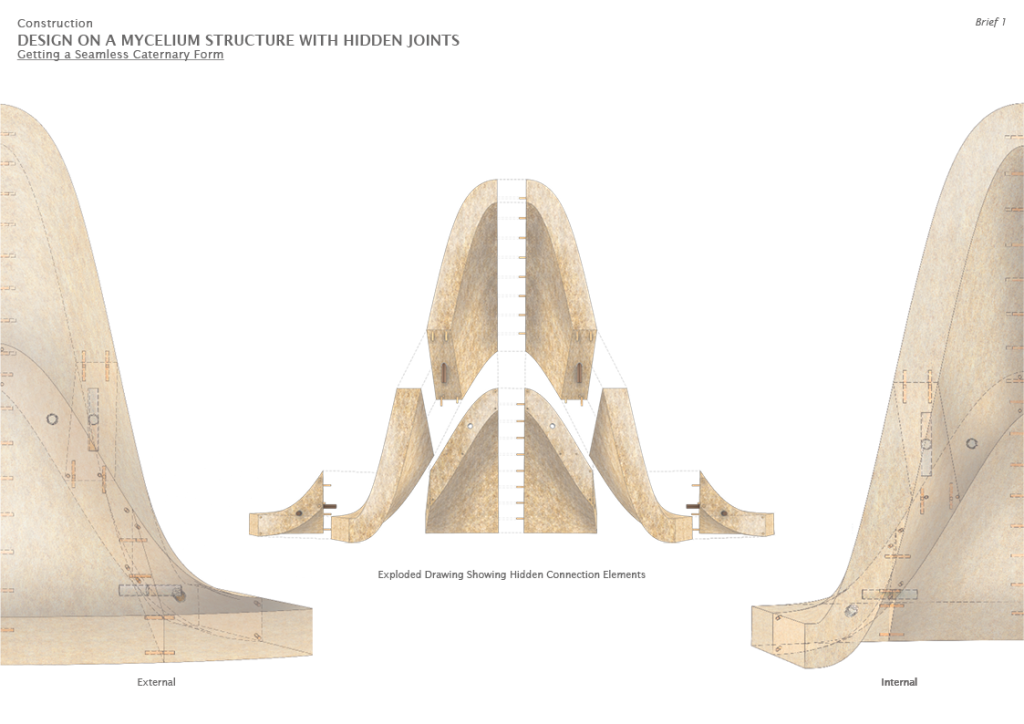

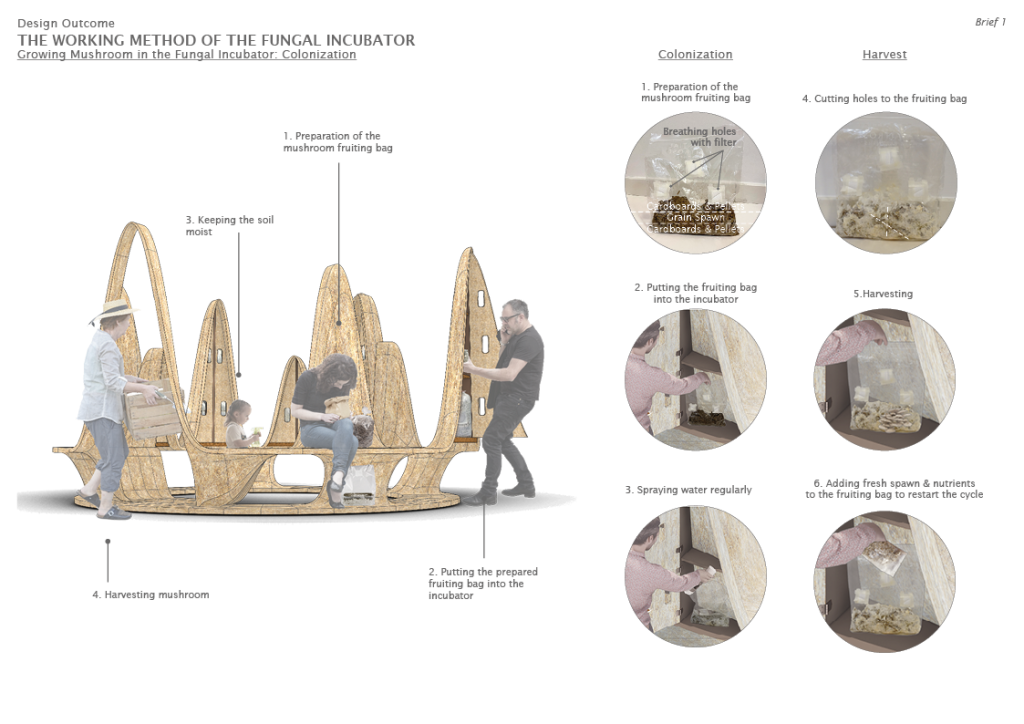

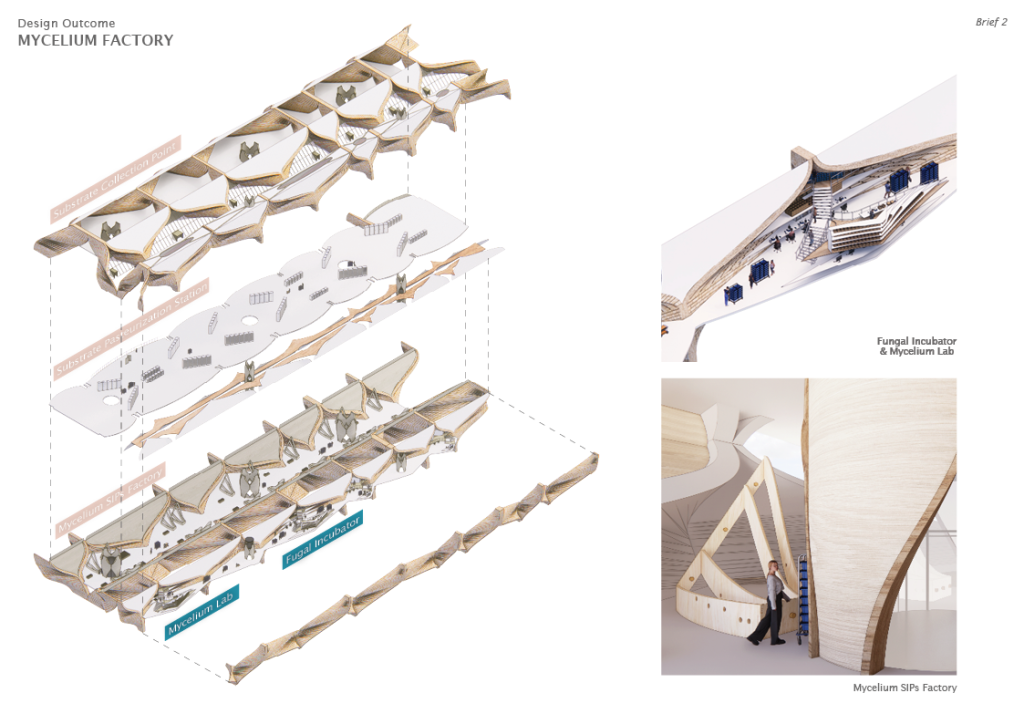

The Fungal Incubator is a hybrid, modular piece of urban furniture and an instrument for mushroom cultivation. It offers an innovative response to the rising consumption of cardboard by turning it into a substrate that nourishes mushrooms. It also incorporates a catenary structure that serves as public seating.

The Fungal Incubator allows mushrooms, specifically oyster mushrooms, to grow on cardboard. Cultivation involves three main steps: inoculation, colonization and fruiting. The whole process takes from two weeks to a month before edible mushrooms can be seen growing from the incubator. During the growth period, the transparent display cabinet shows how the mycelium “eats” the cardboard substrate, so one of the defining characteristics of the Fungal Incubator is that its display cabinet is always changing.

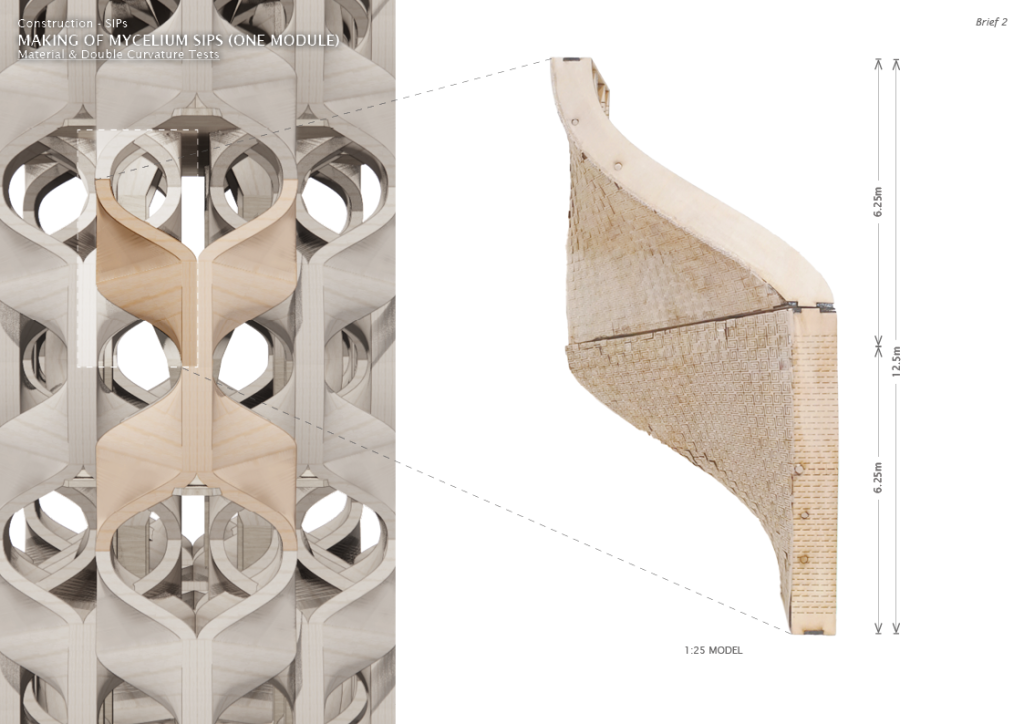

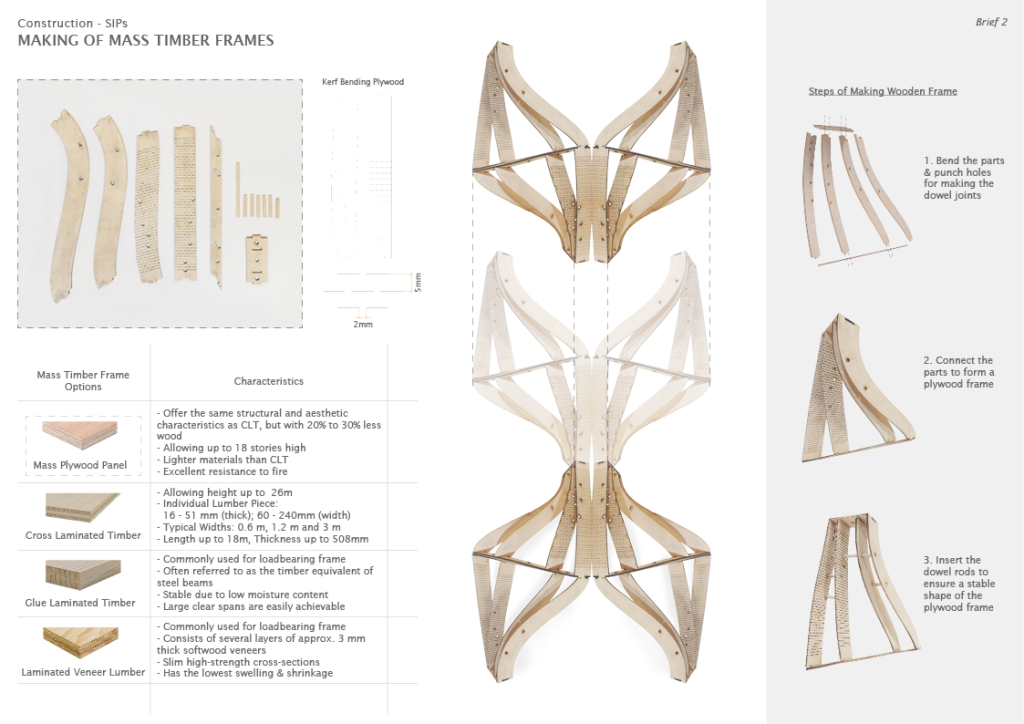

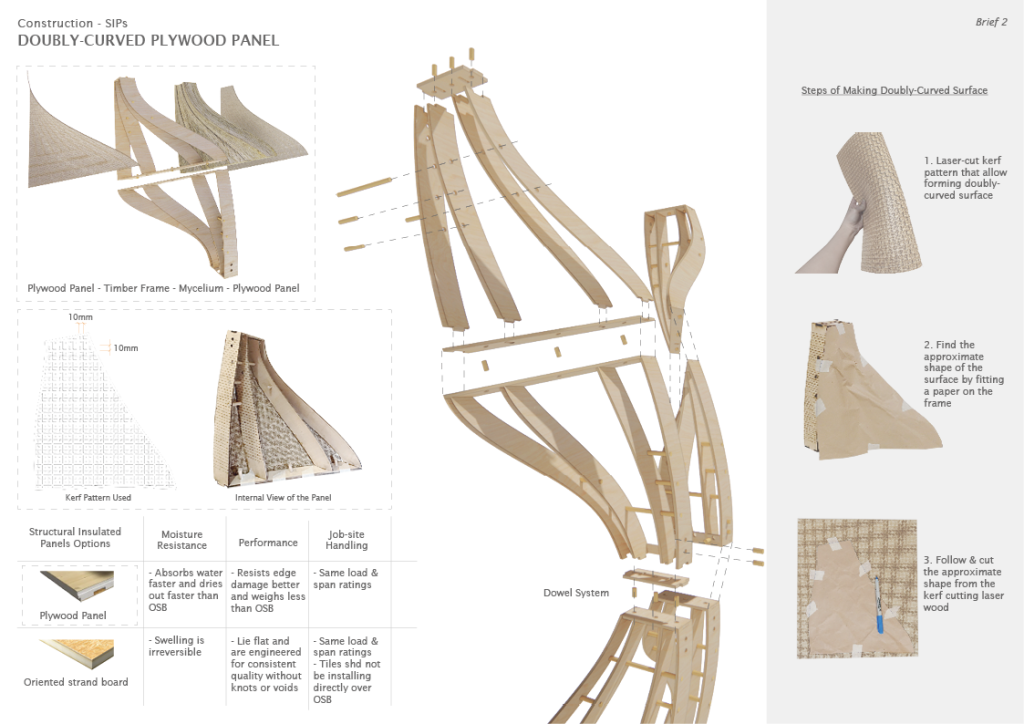

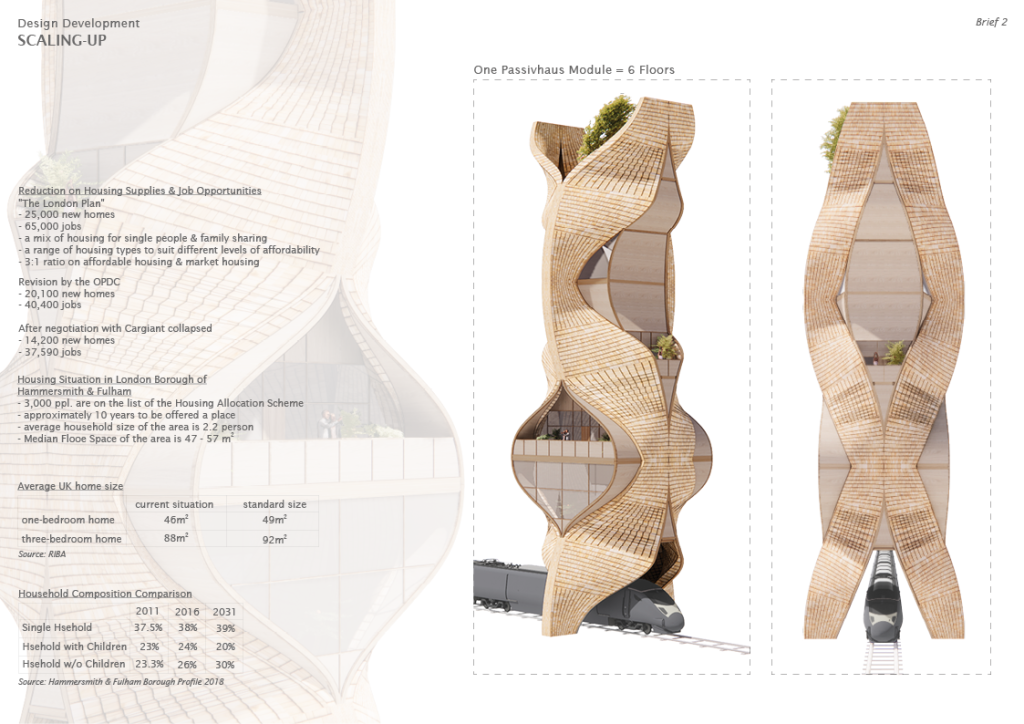

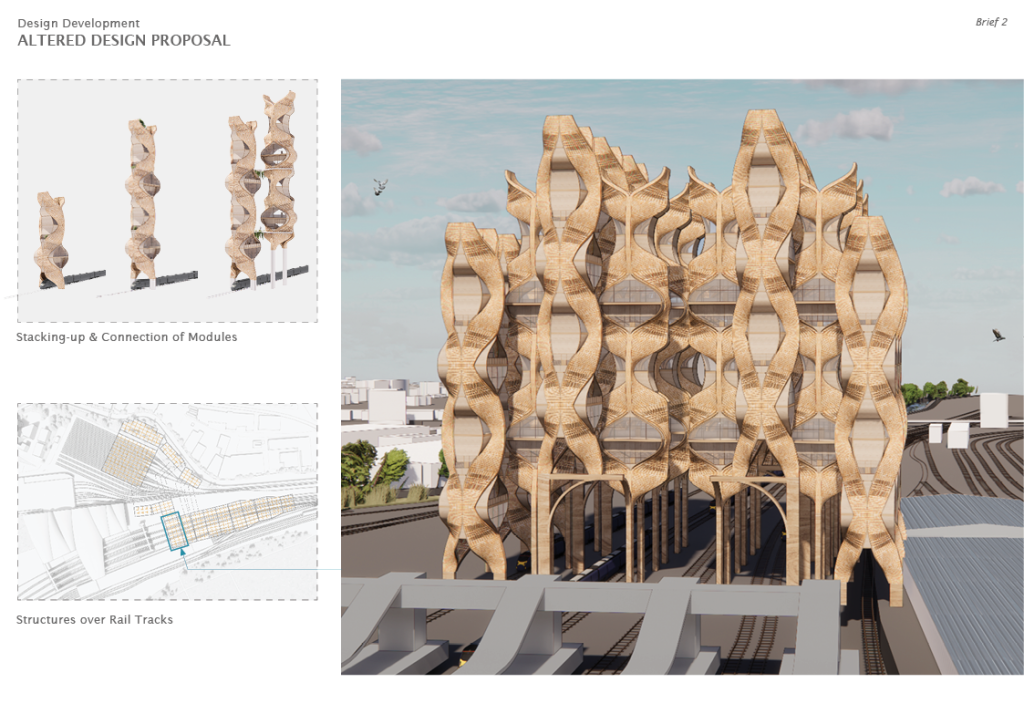

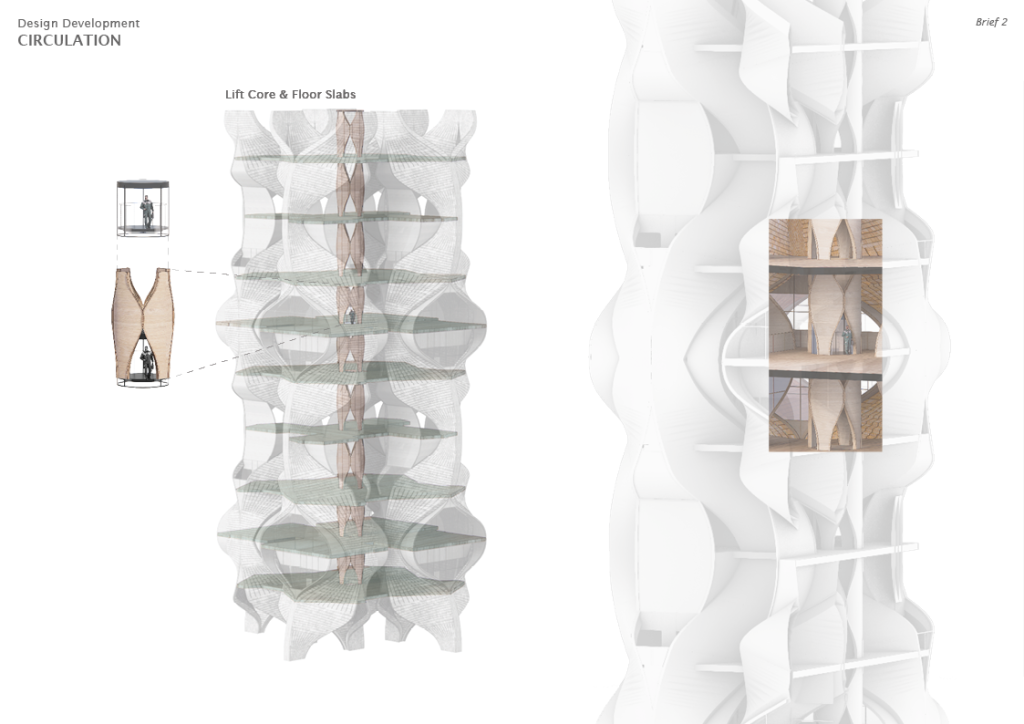

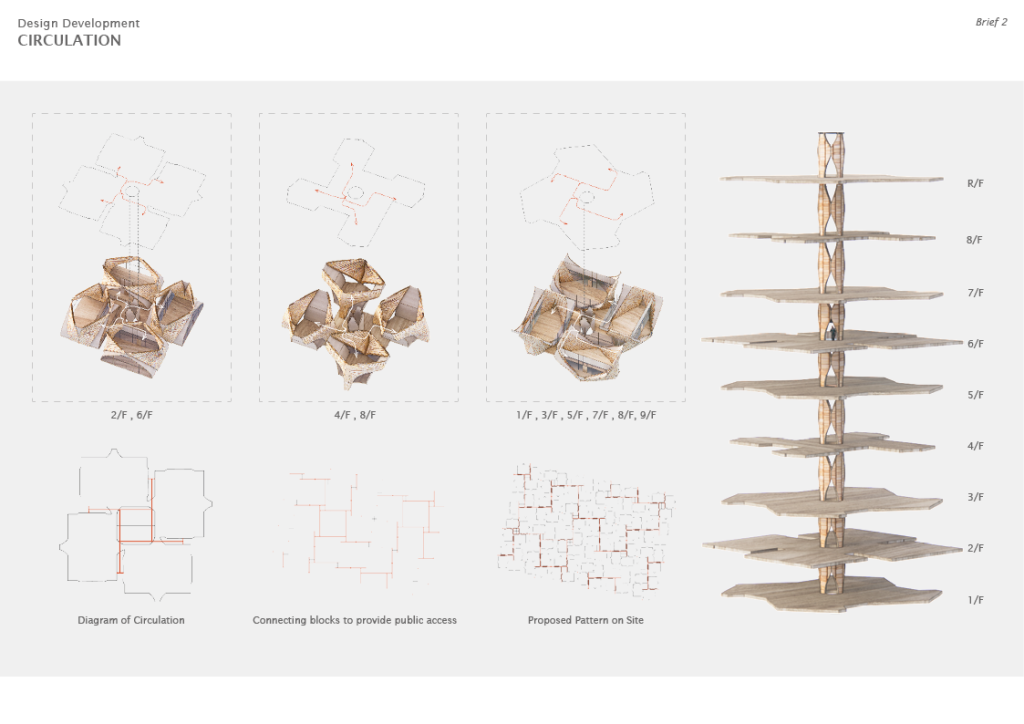

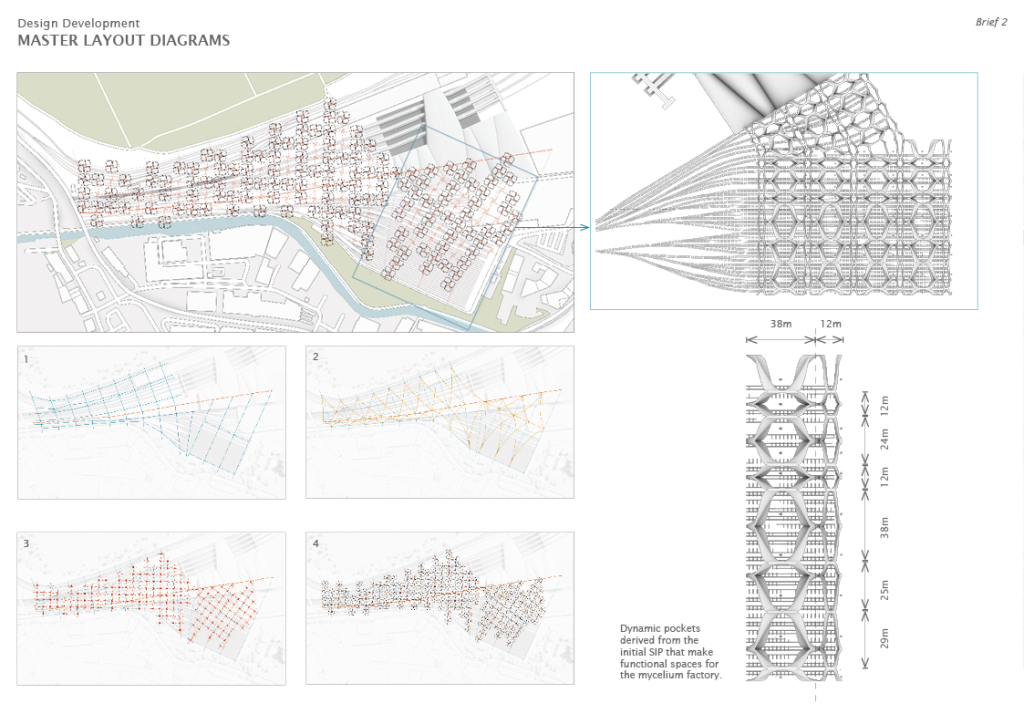

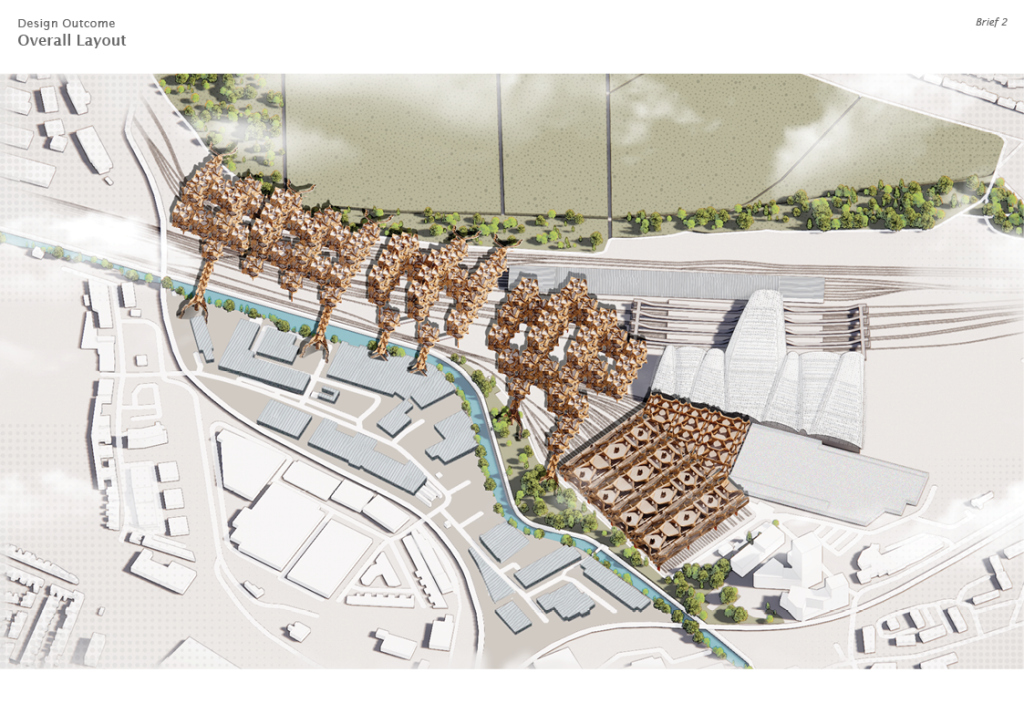

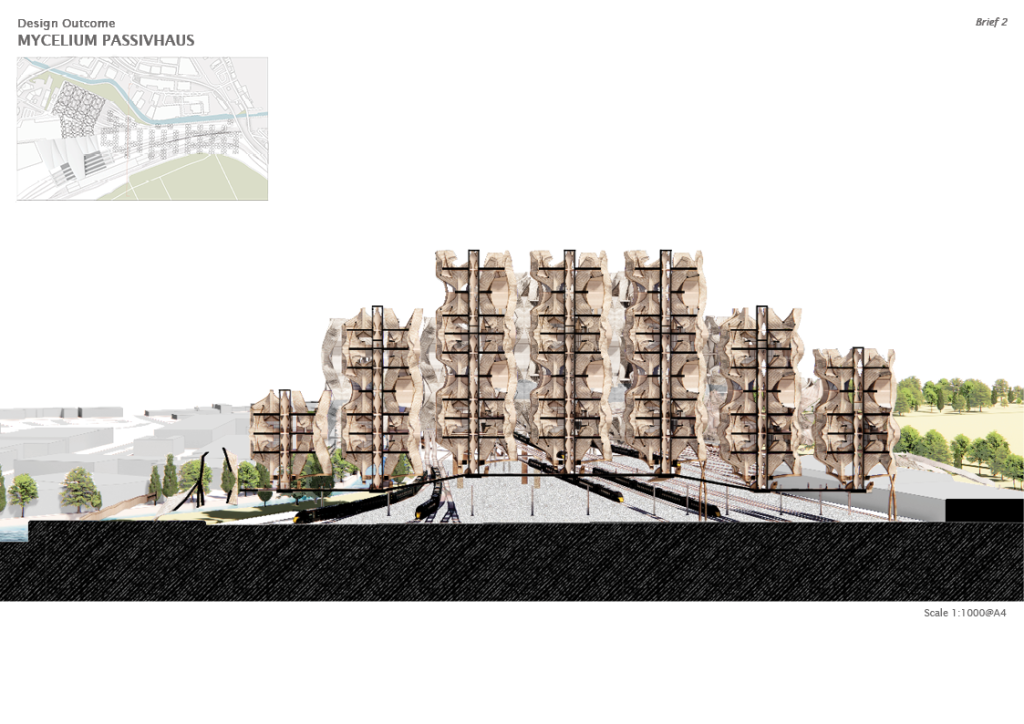

This semester, a large-scale work of architecture will be designed using the bio-material, concepts and techniques developed in Brief 1. A railway station in the UK has been chosen so that the project can make use of the vacant space above the railway tracks.

I chose the Old Oak Common Depot as my project site. It is currently under construction to become an important station in the UK's high-speed rail development scheme. The scheme will turn the industrial area into a modern super hub and change the district dramatically.

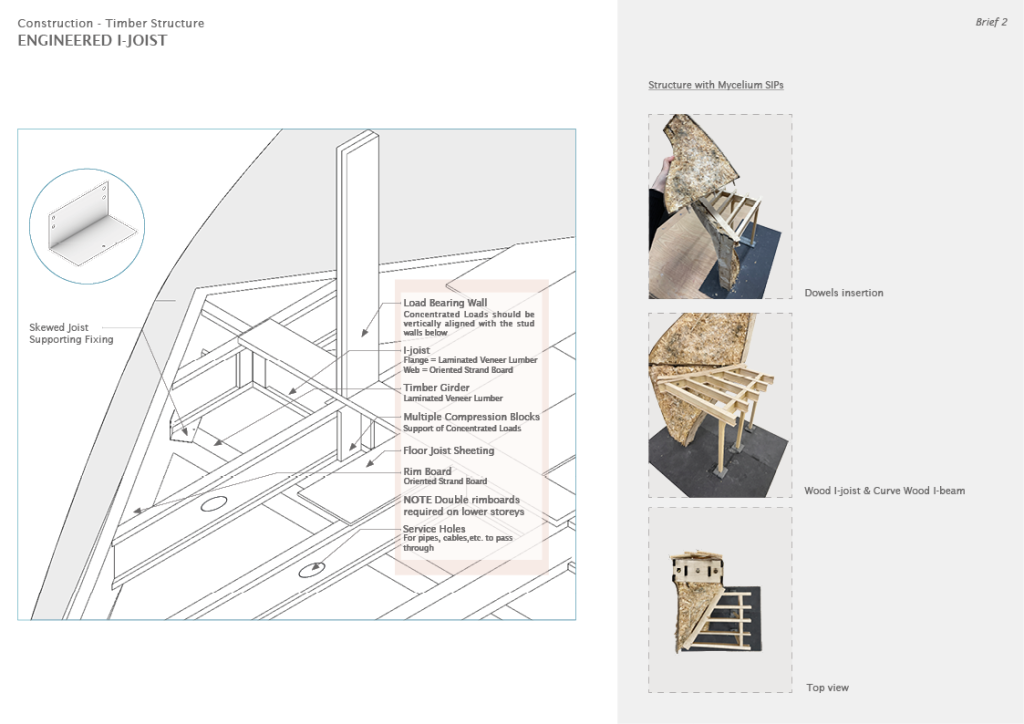

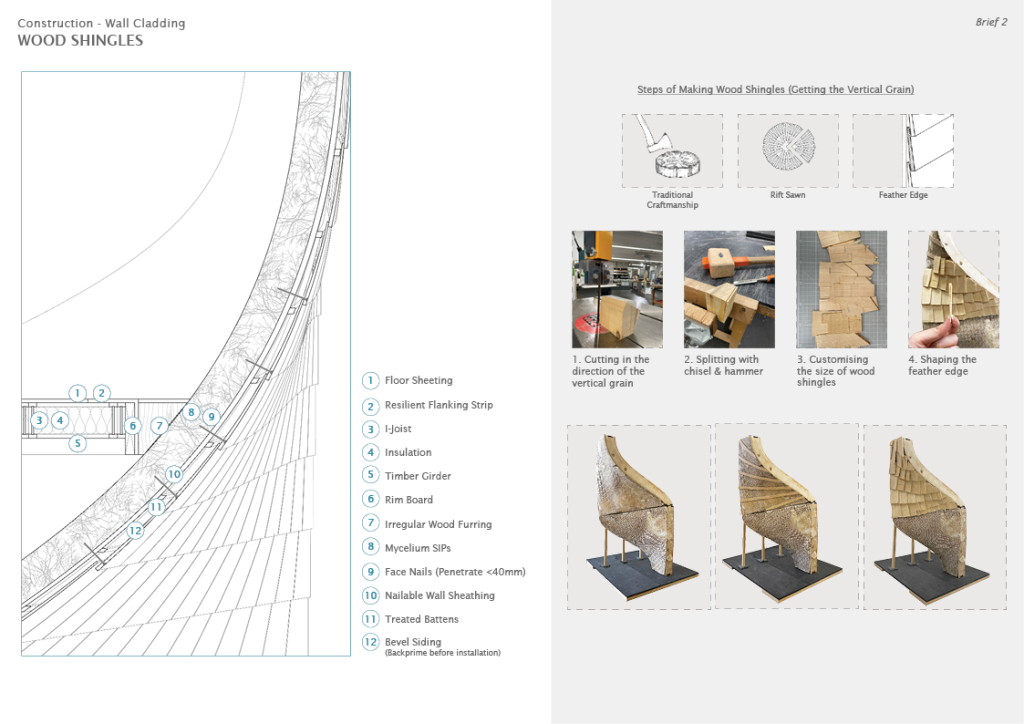

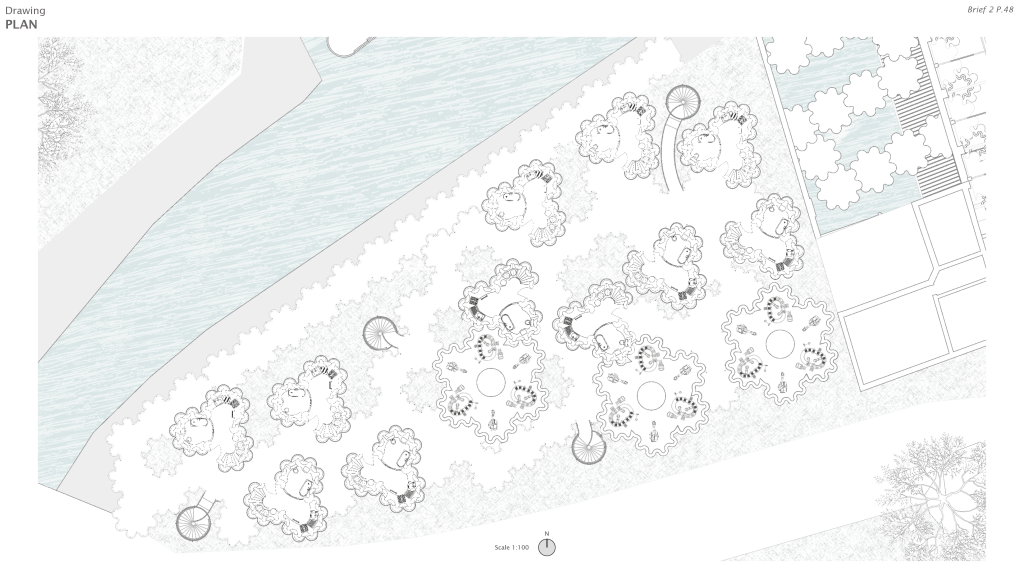

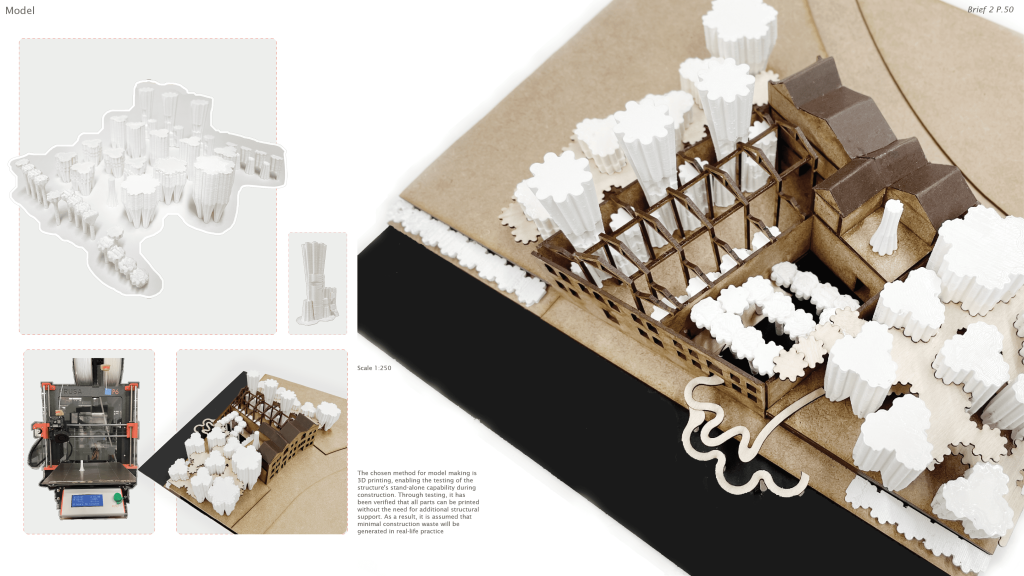

In this project, organically formed clusters of residential blocks are introduced to the site. The structure is composed of timber and SIPs (structural insulated panels) made of mycelium. It aims to provide residential units designed on Passivhaus principles.

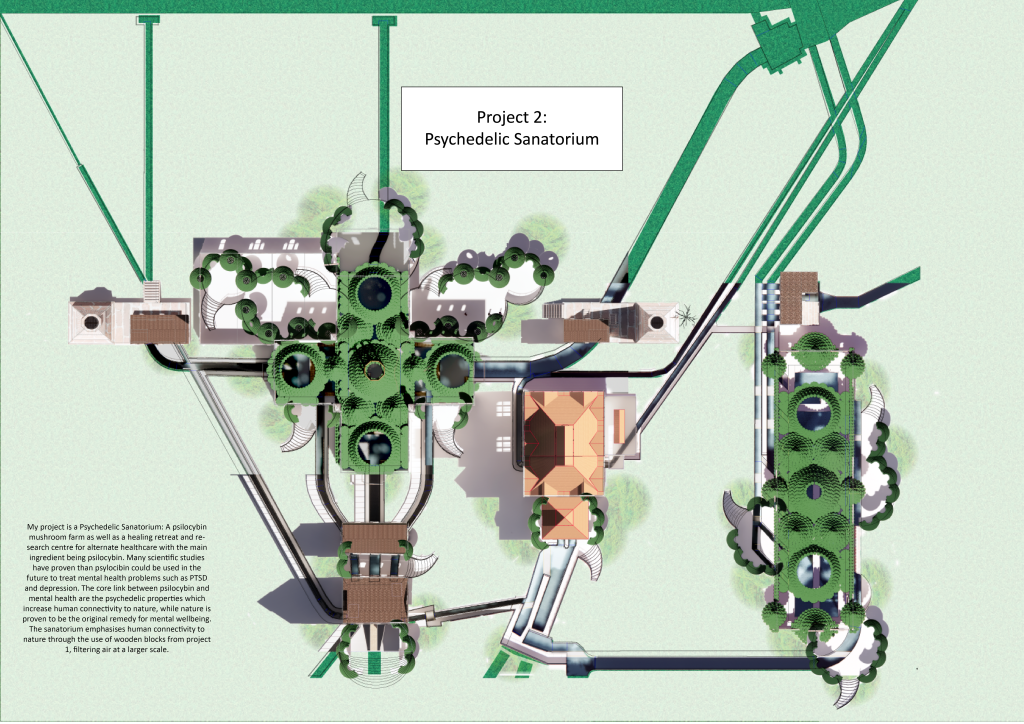

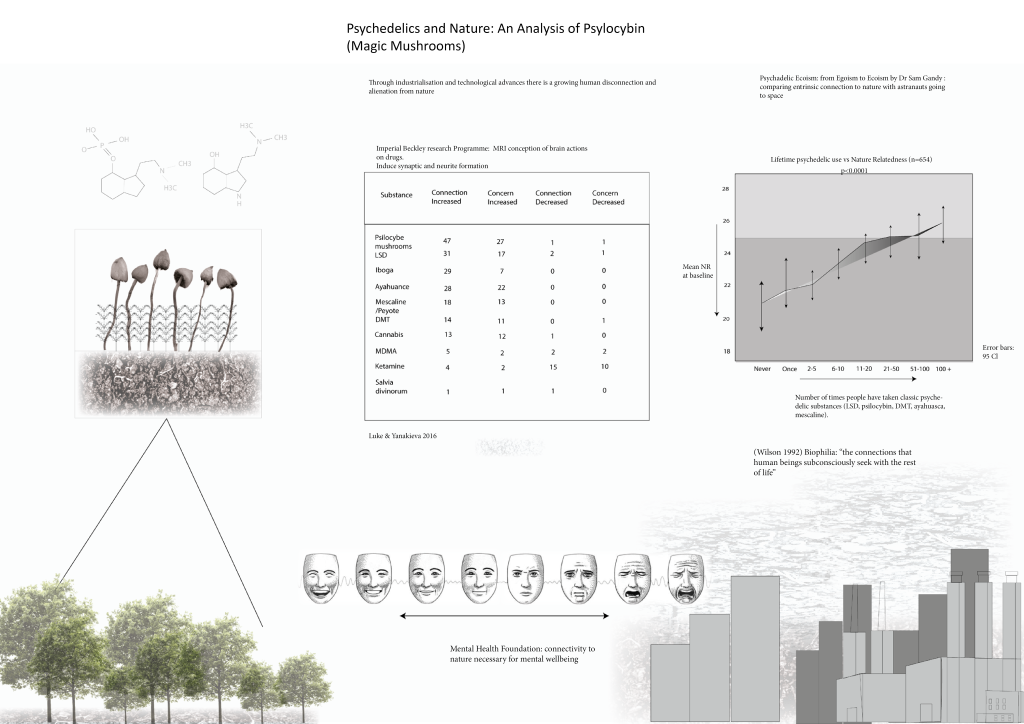

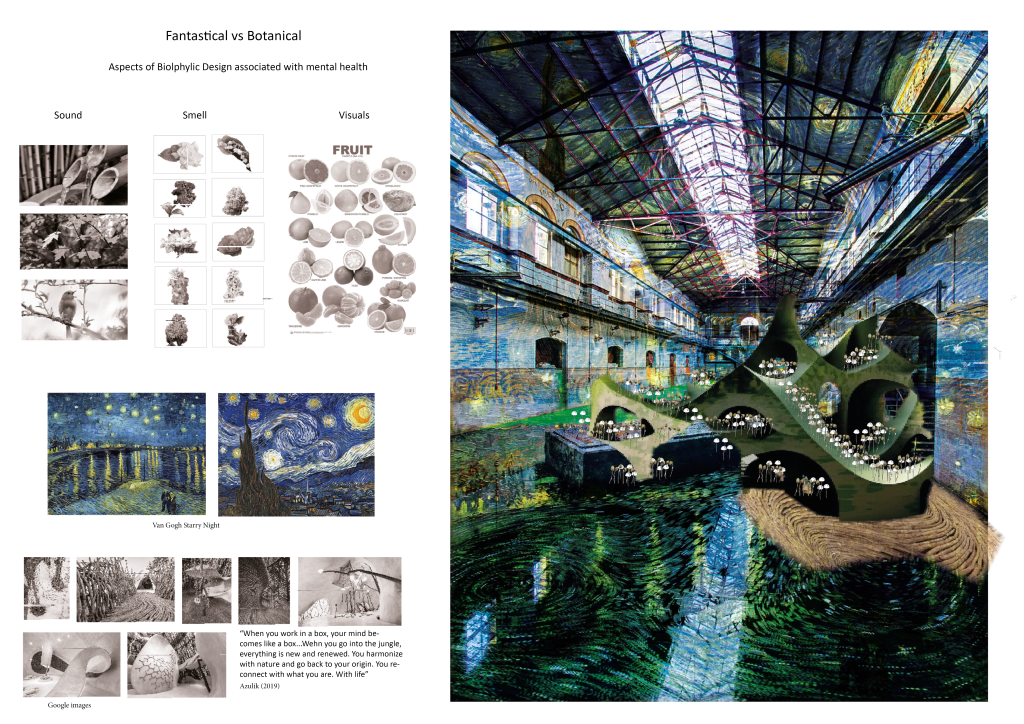

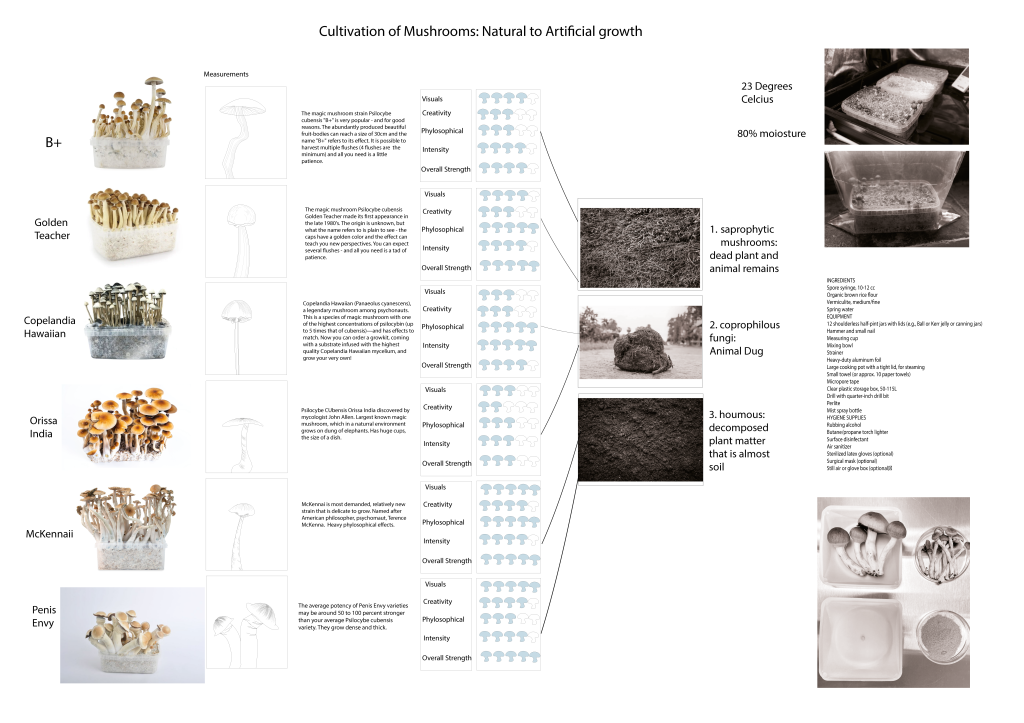

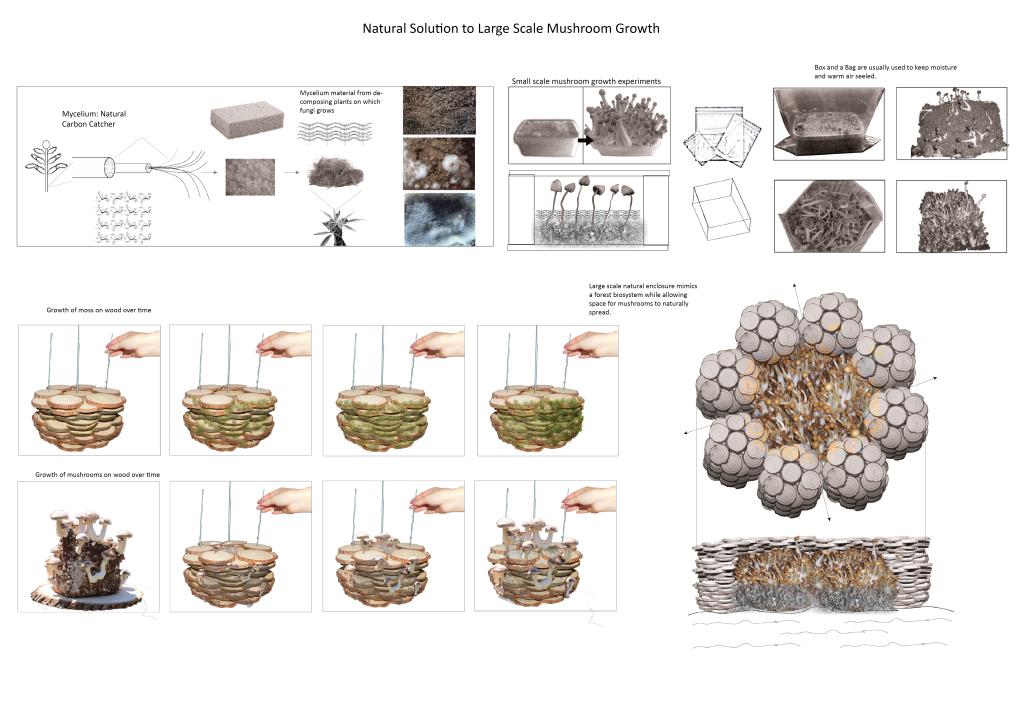

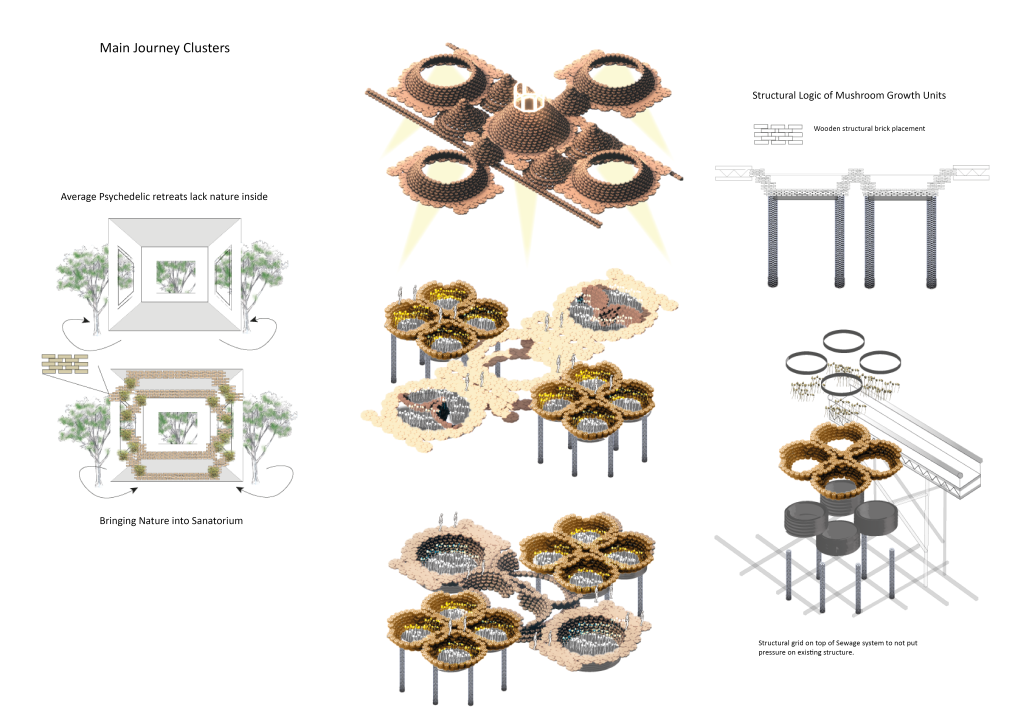

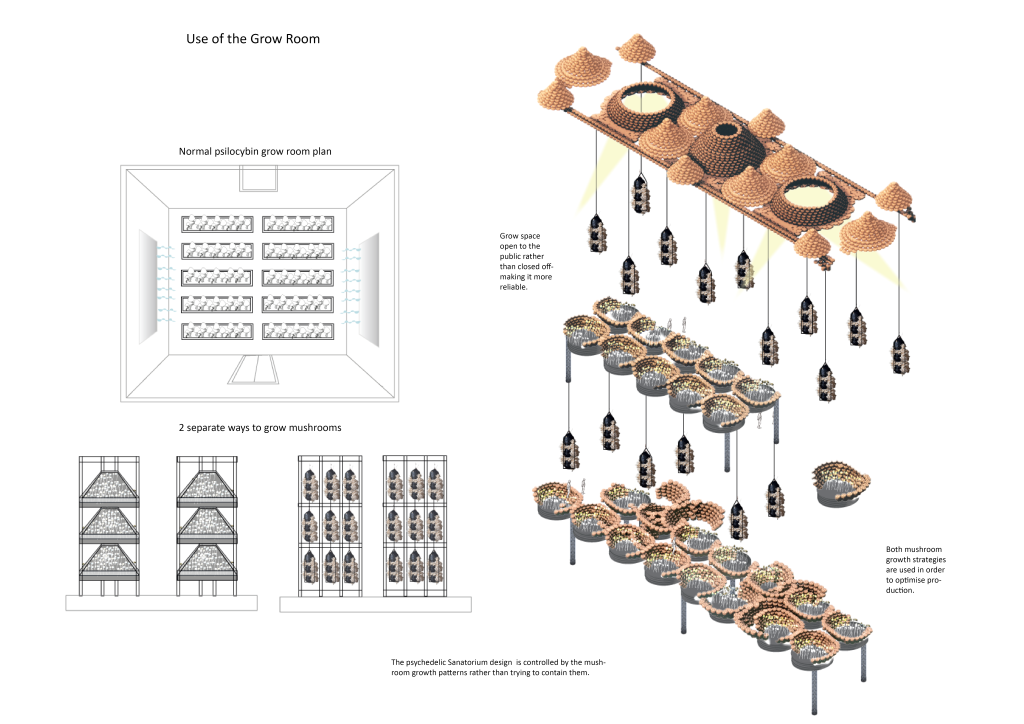

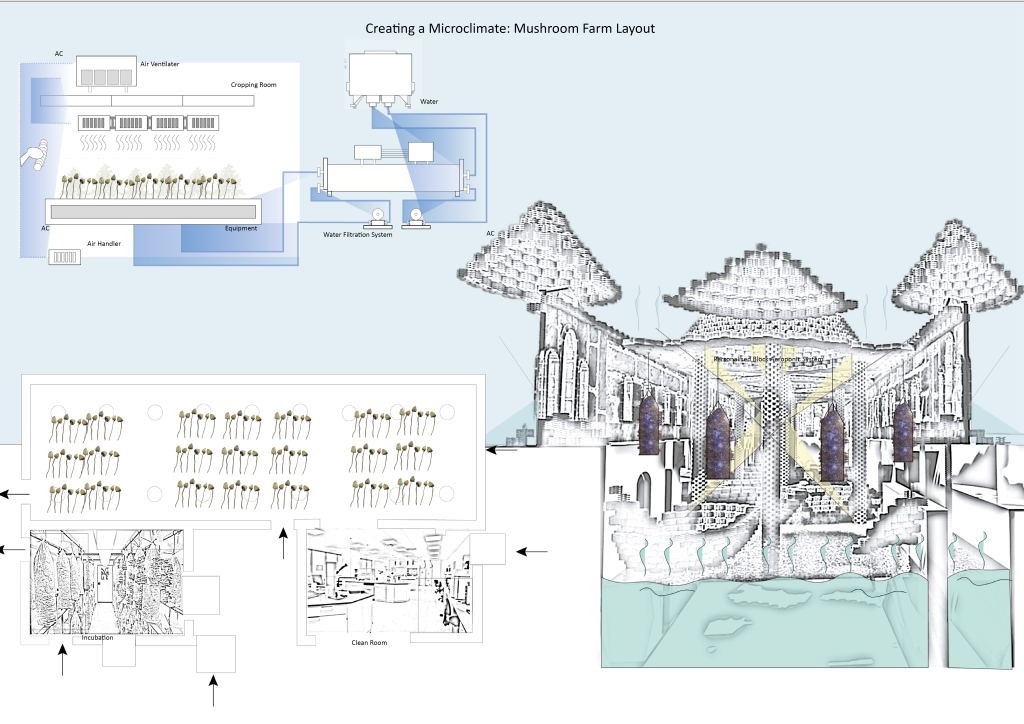

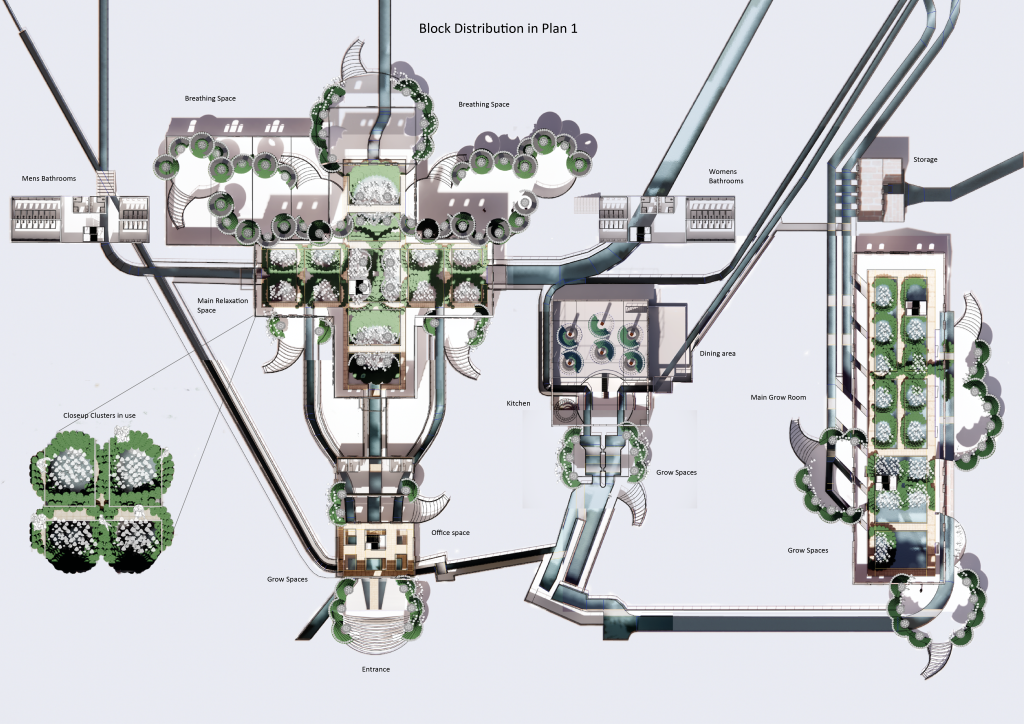

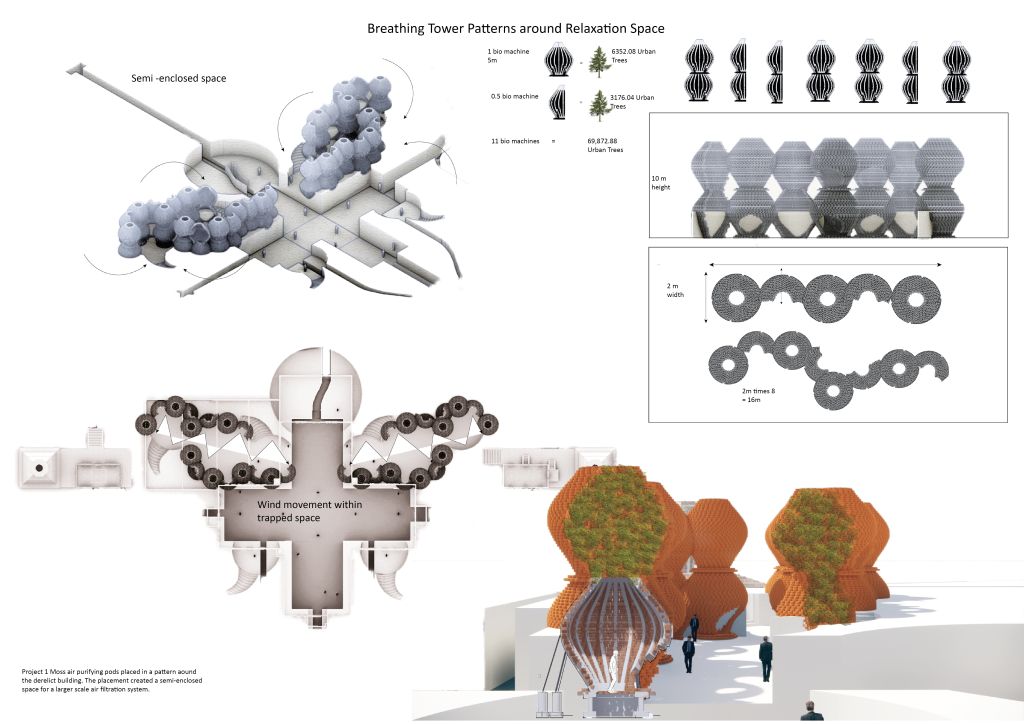

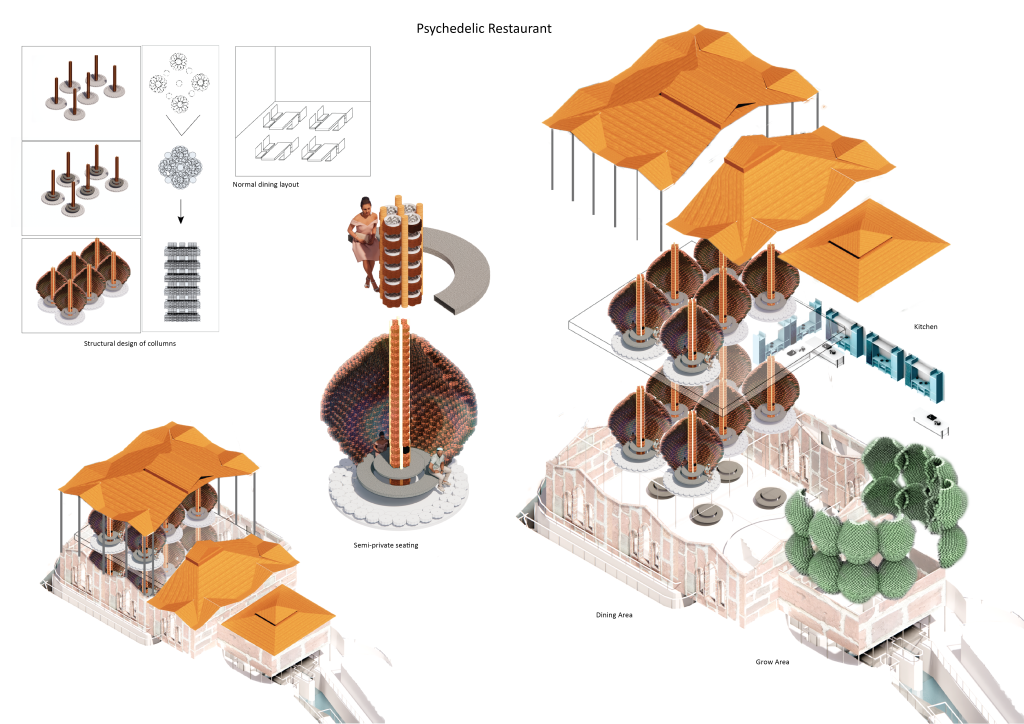

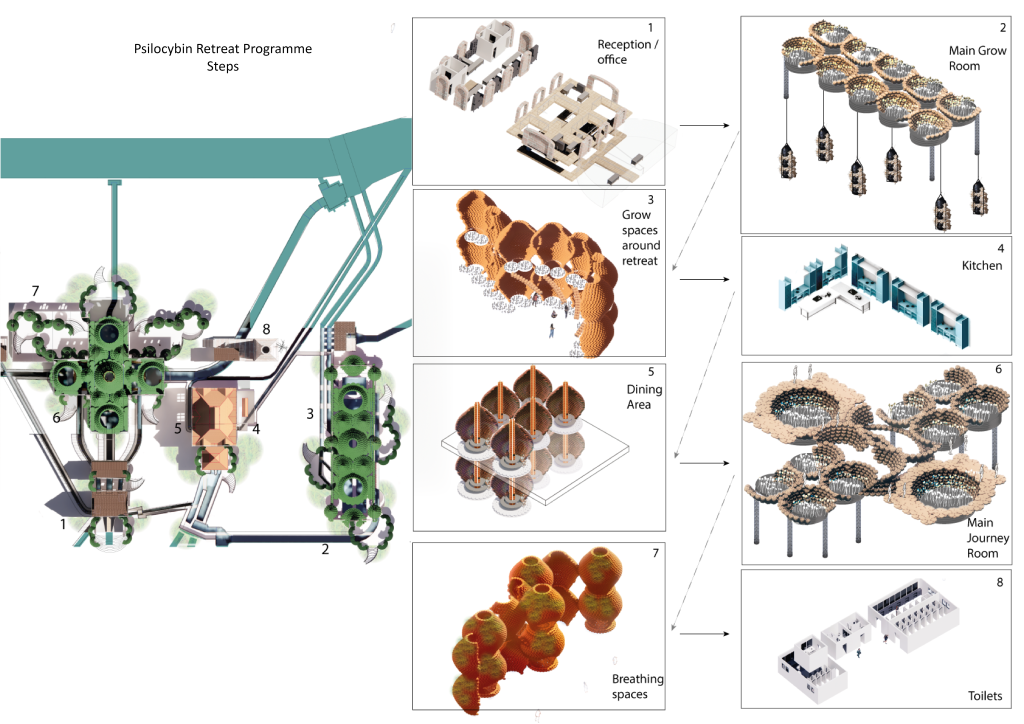

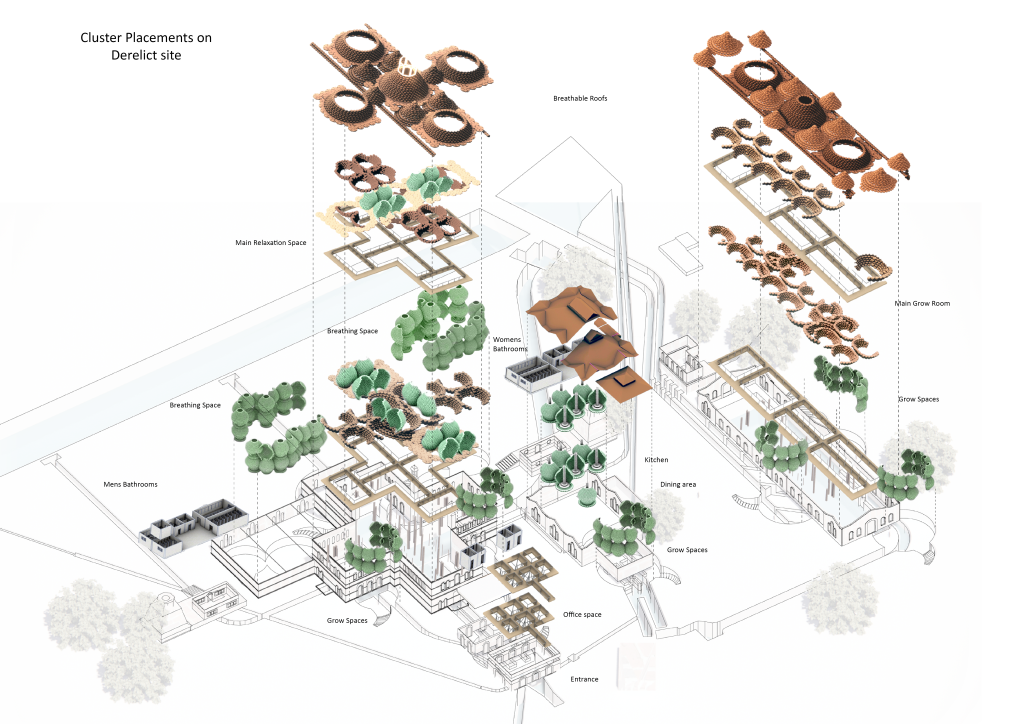

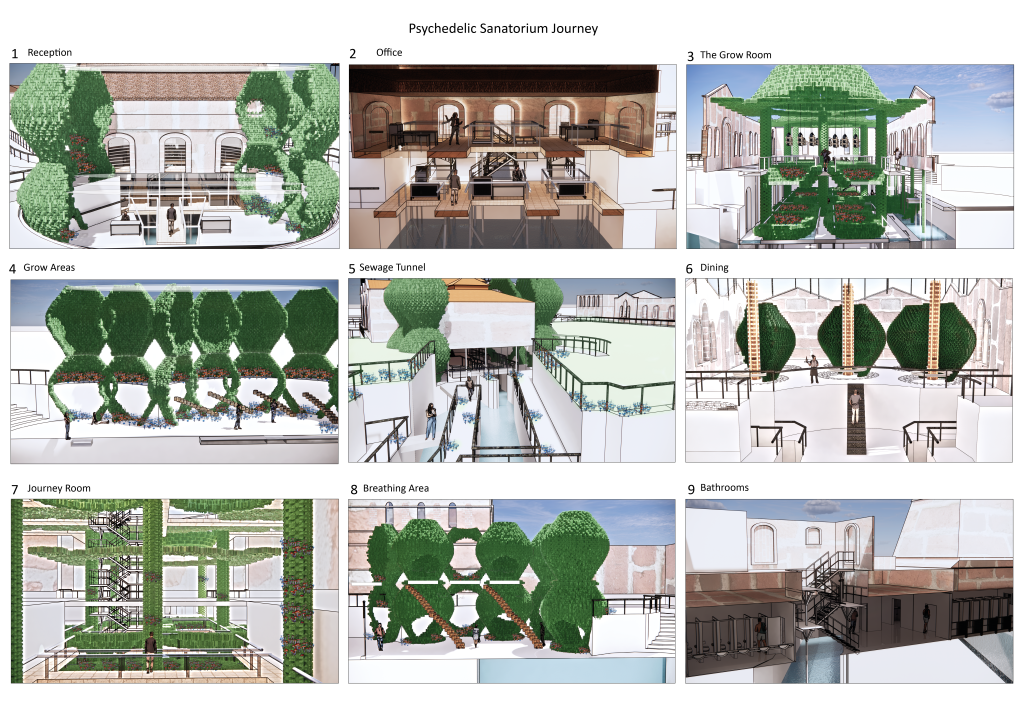

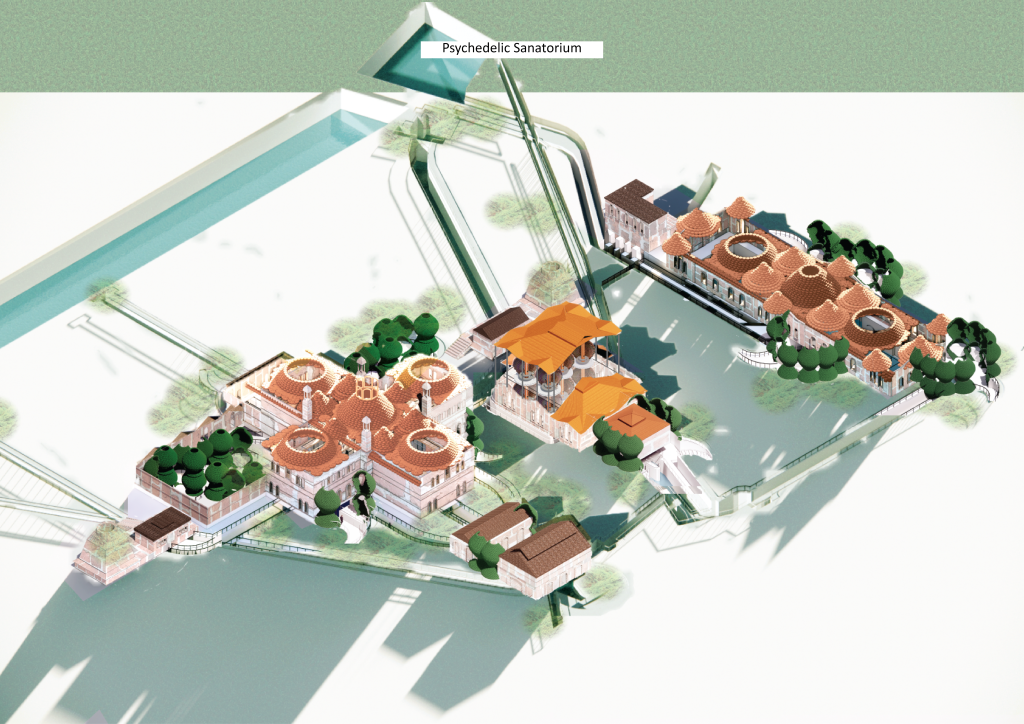

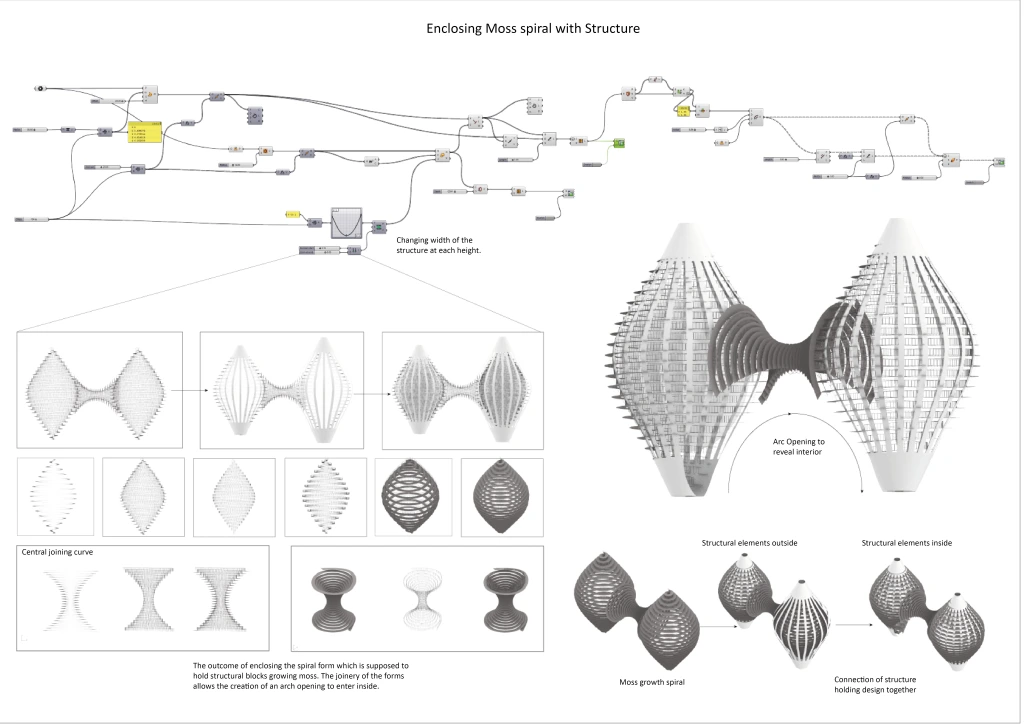

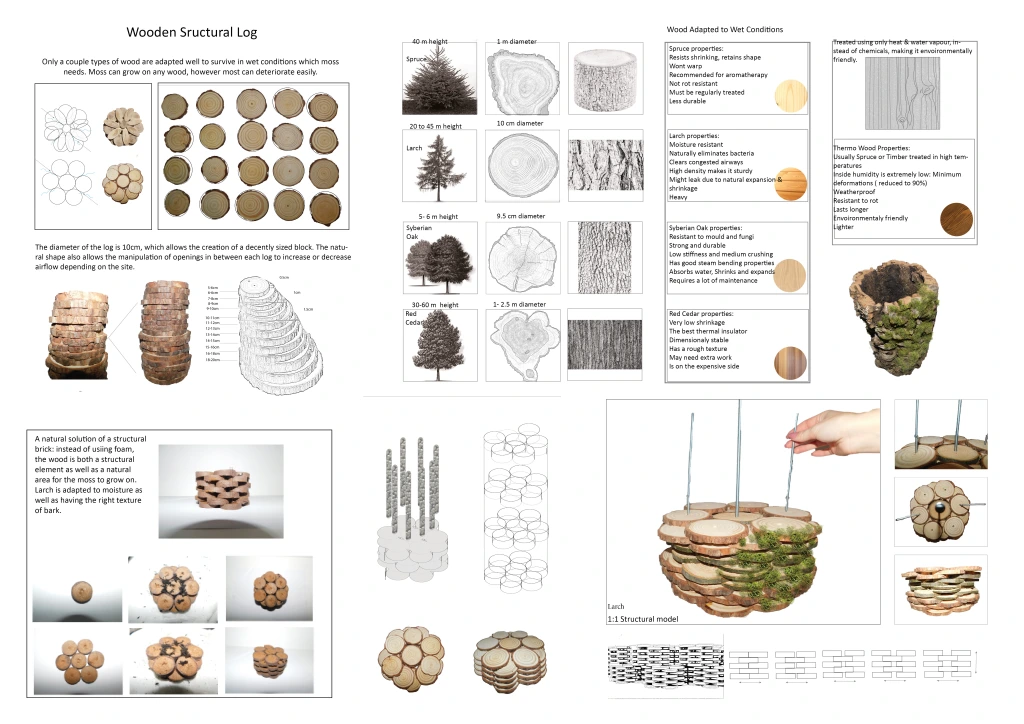

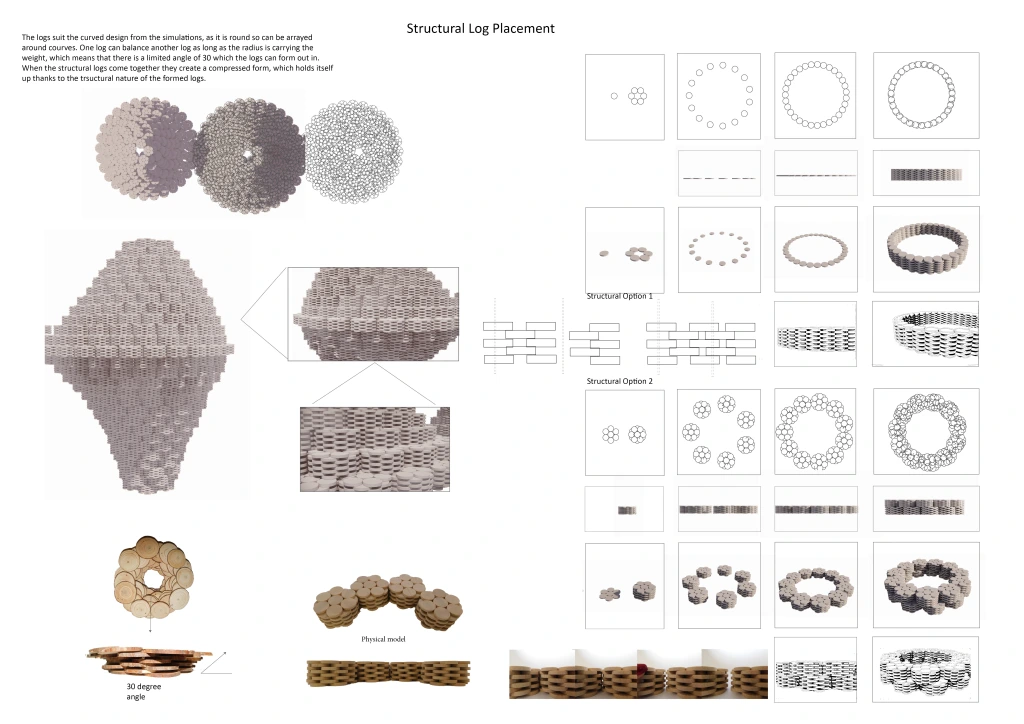

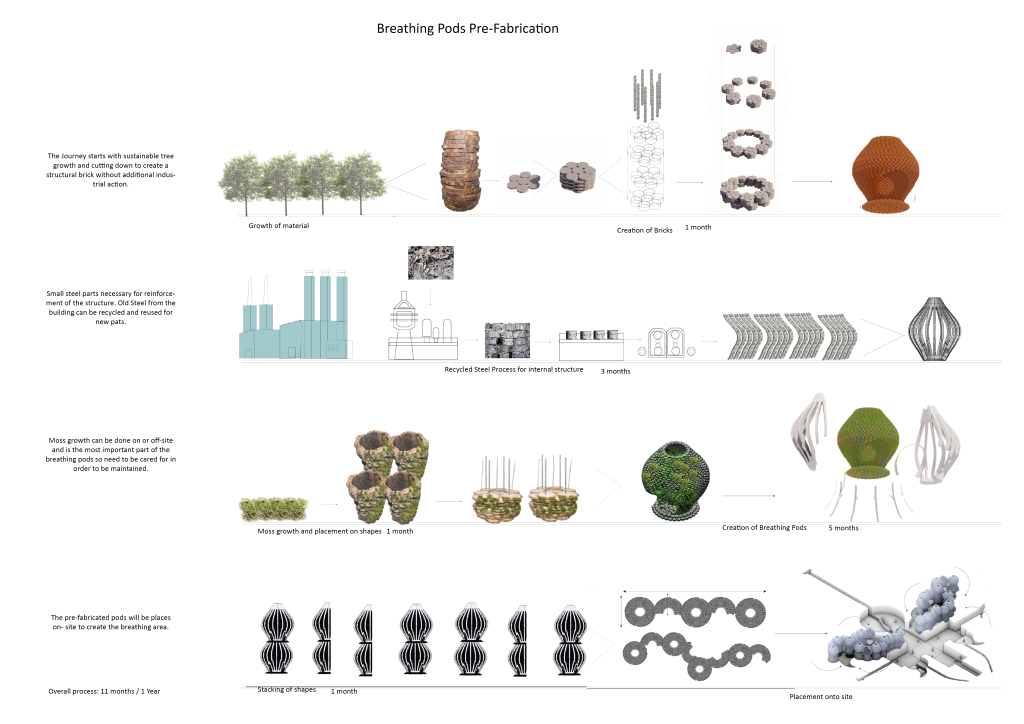

Spiritual healing and air filtration in one: a psilocybin farm and research centre for alternative healthcare. The core link between psilocybin and mental health is its psychedelic properties, which increase human connectivity to nature, while nature is proven to be the original remedy for mental wellbeing. The project enhances this connection by providing a mushroom ecosystem, with a microclimate created from natural larch logs. The same structural logic is used for the breathing pods, which filter air through moss and so enhance the spiritual healing properties.

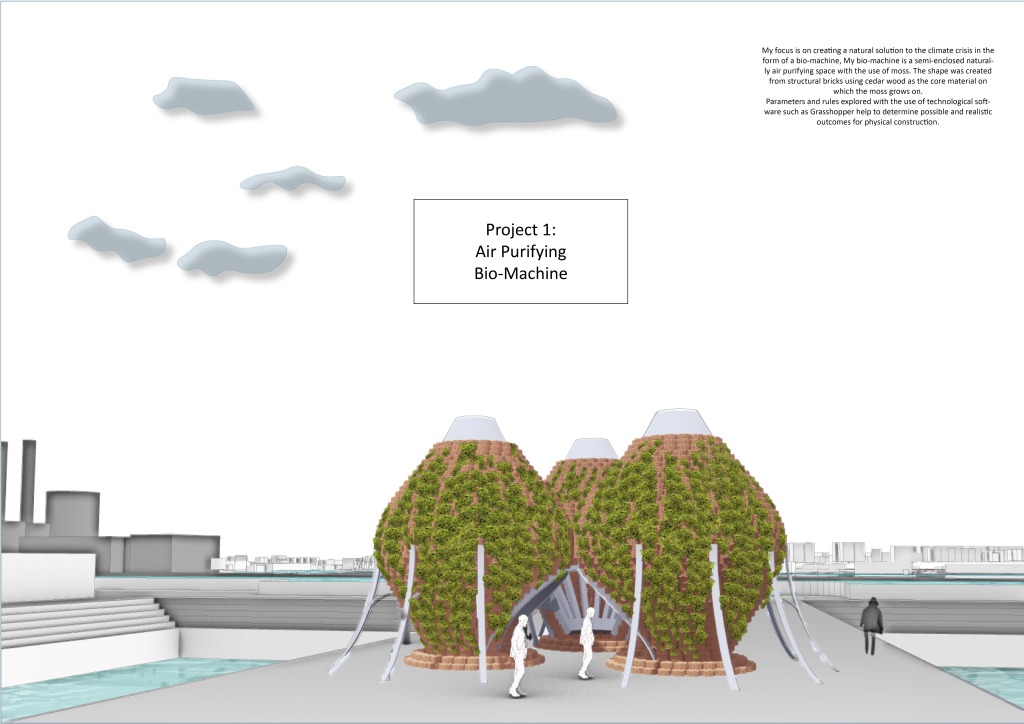

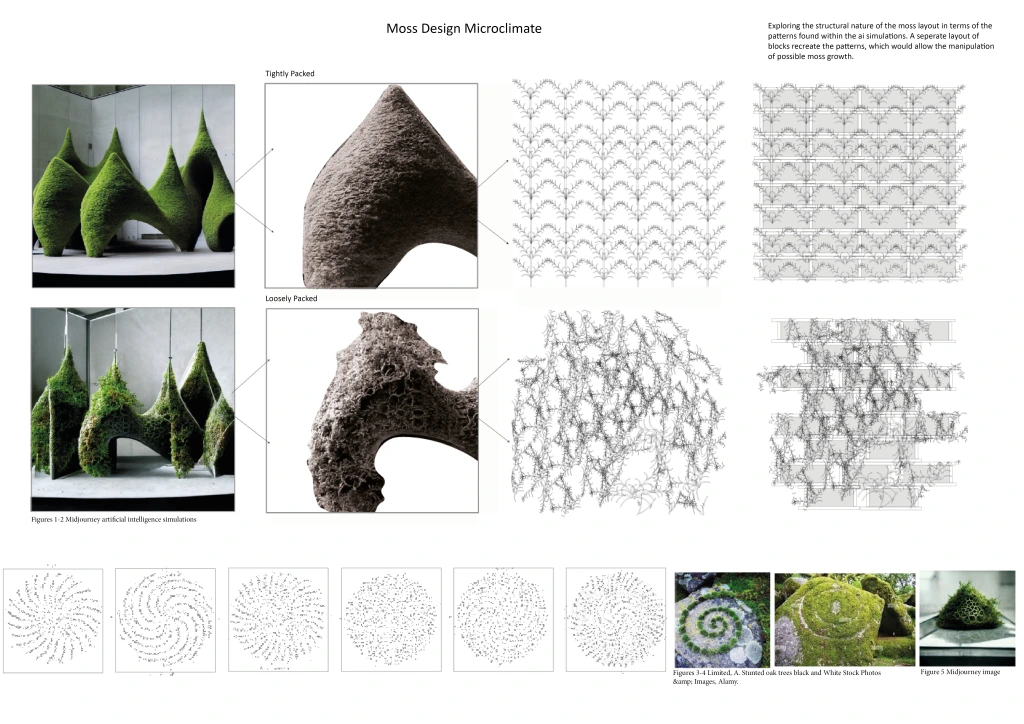

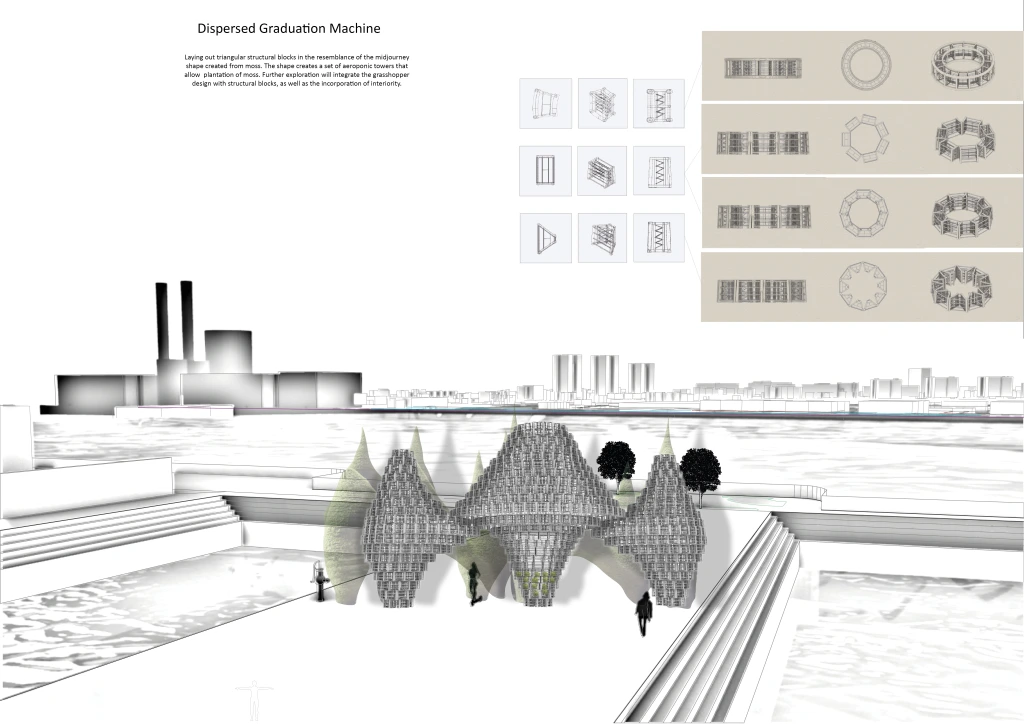

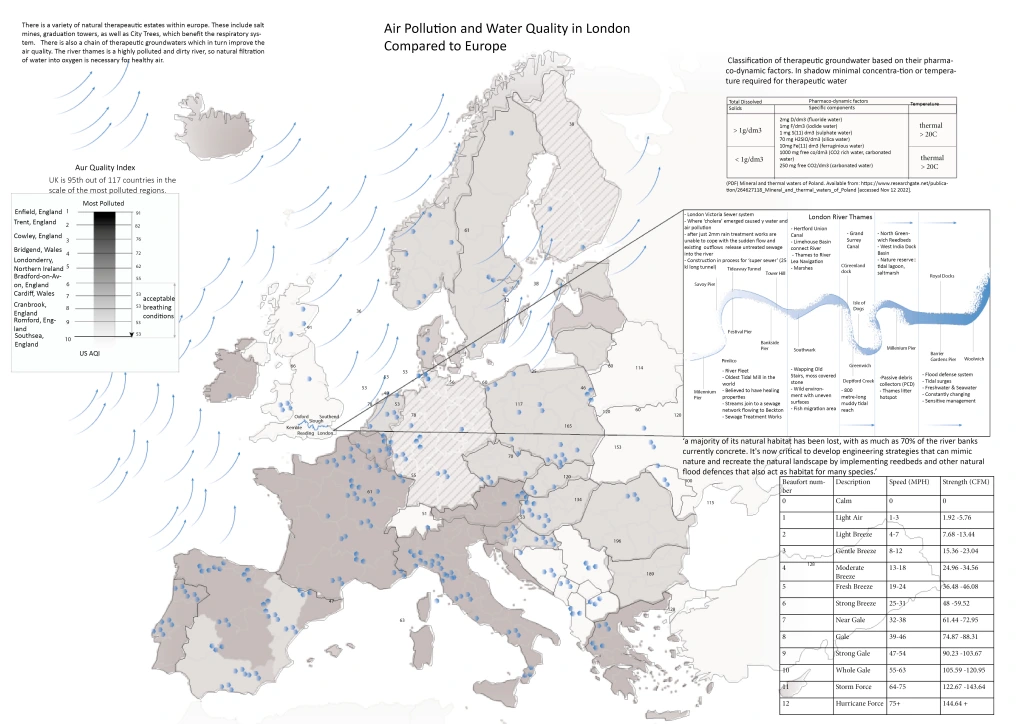

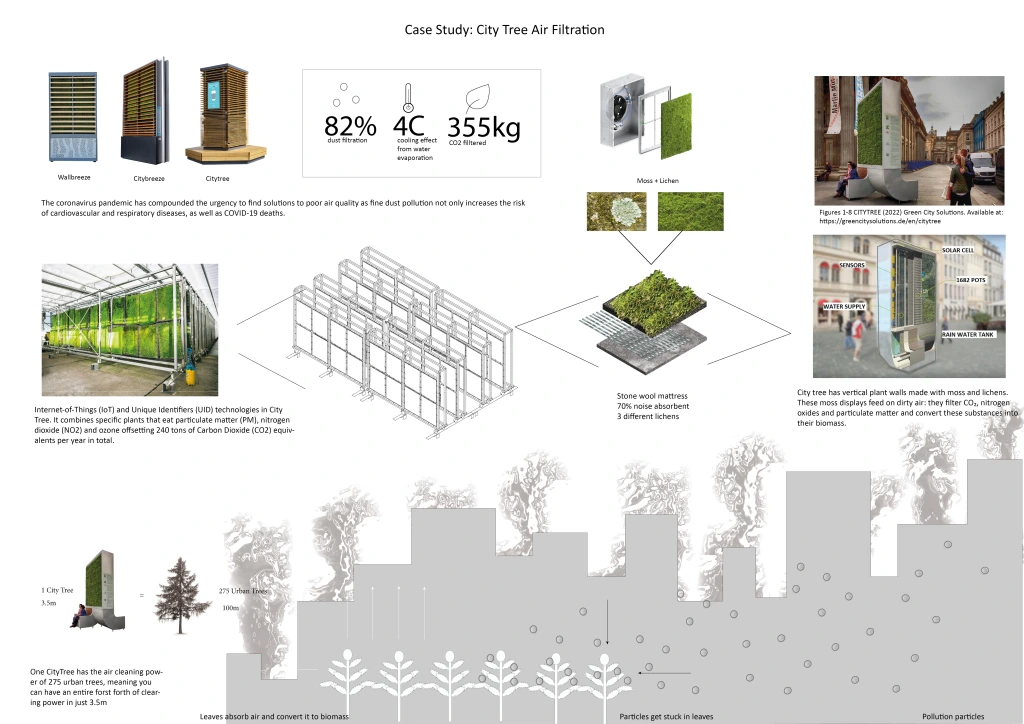

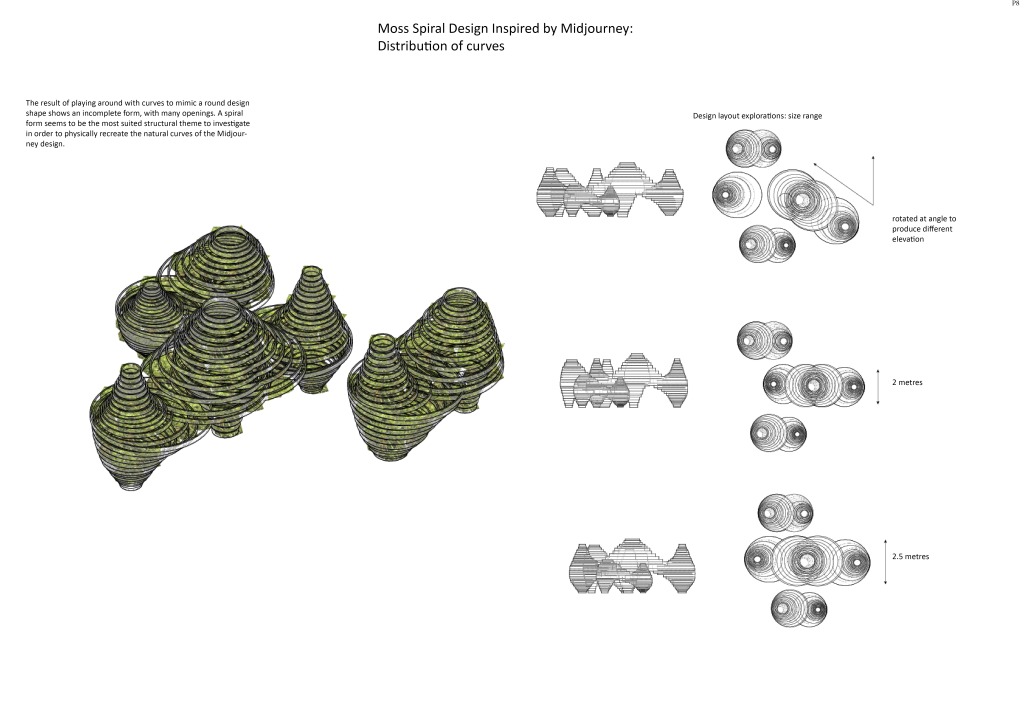

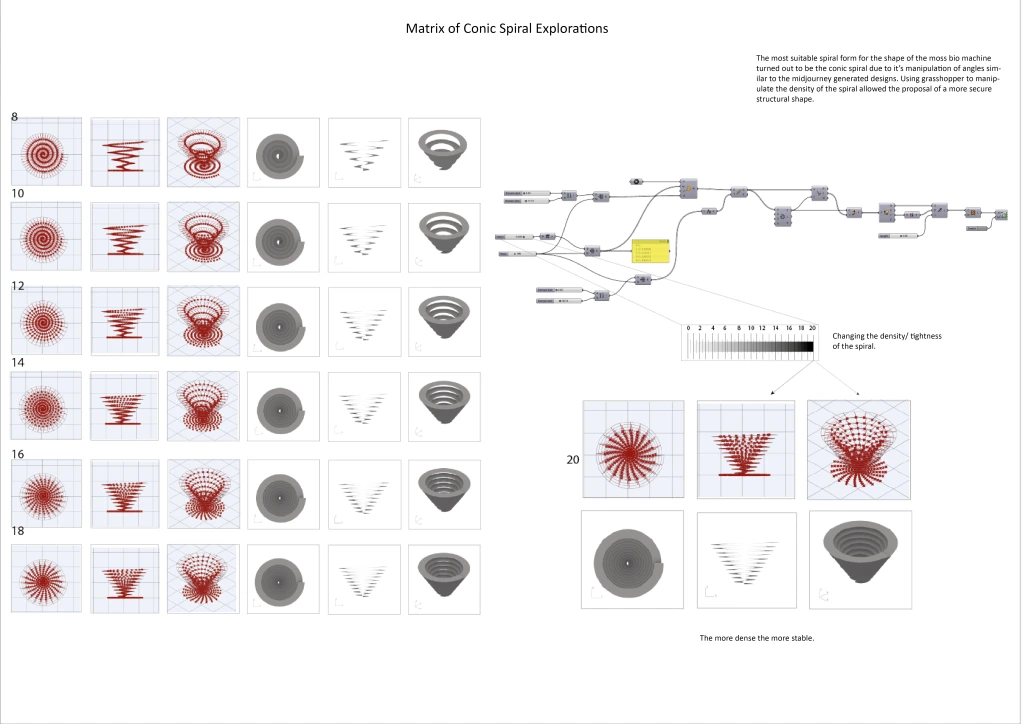

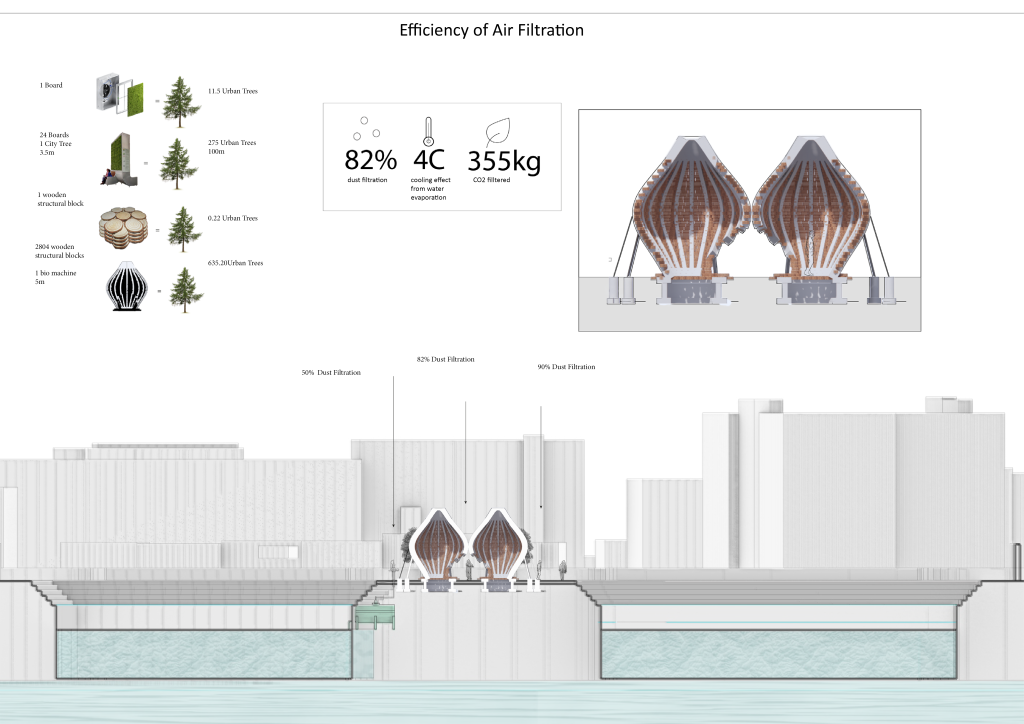

The breathing pods are bio-machines that offer a natural solution to pollution in large cities. Continuing the aim of the City Tree walls placed around London, the pods offer an architectural and natural rendition inspired by AI-generated Midjourney images. A single pod is a semi-enclosed space created from structural wooden logs arranged in a circular pattern, each depending on the others to hold itself up. Moss growing in its natural habitat, together with the wind velocity created through the gaps between the logs, makes one breathing pod equal to the air filtration of up to 600 urban trees during high winds.

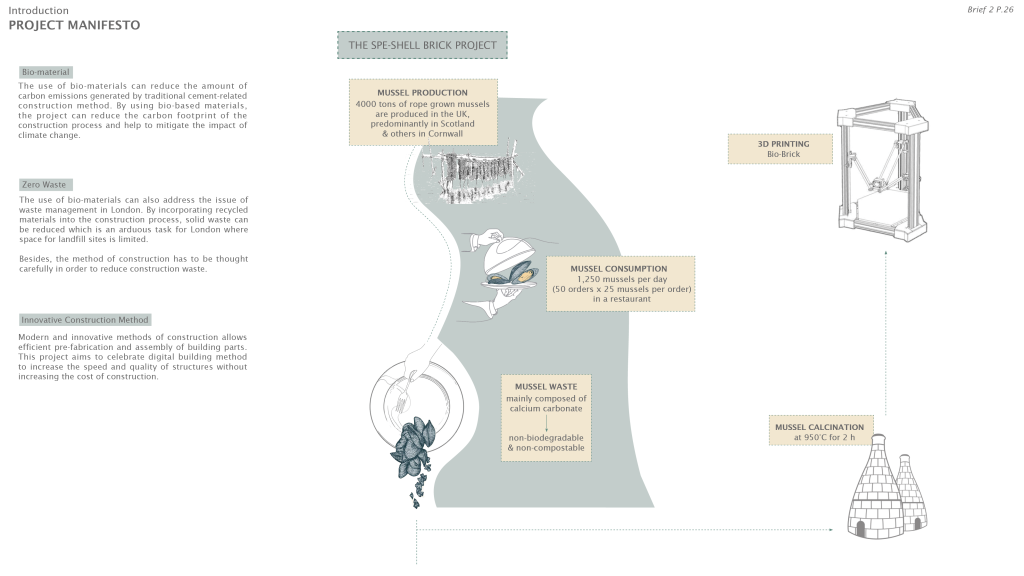

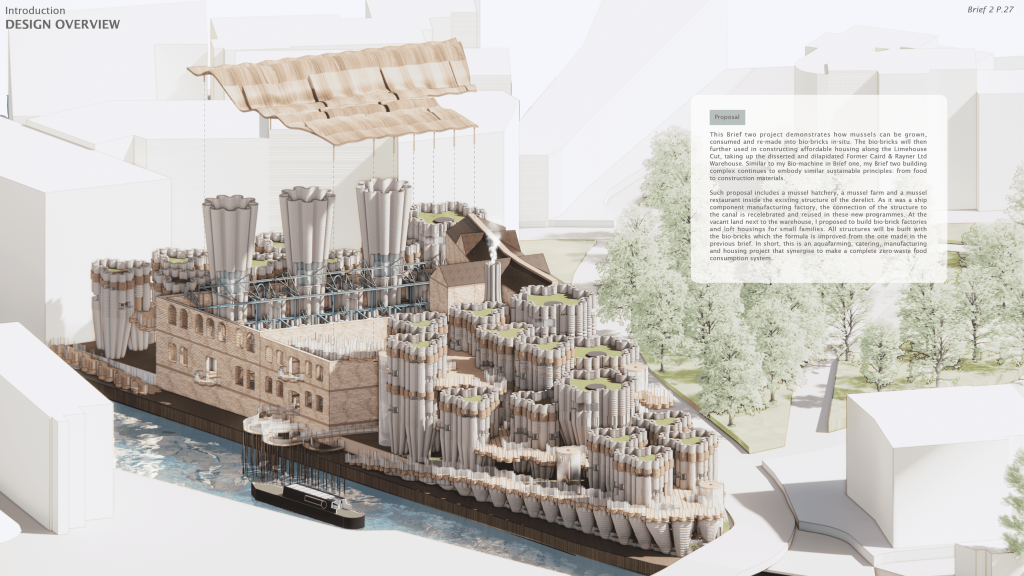

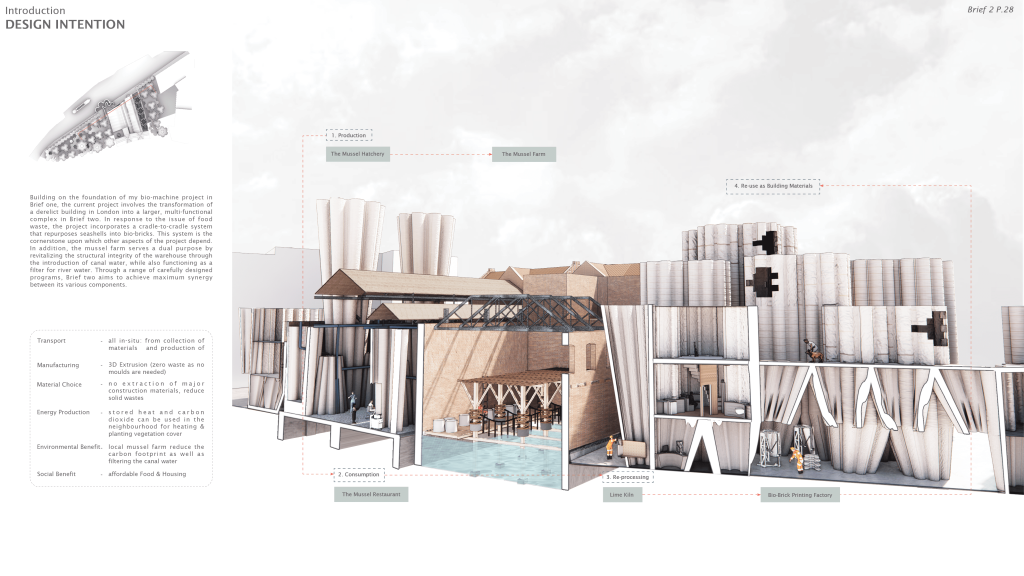

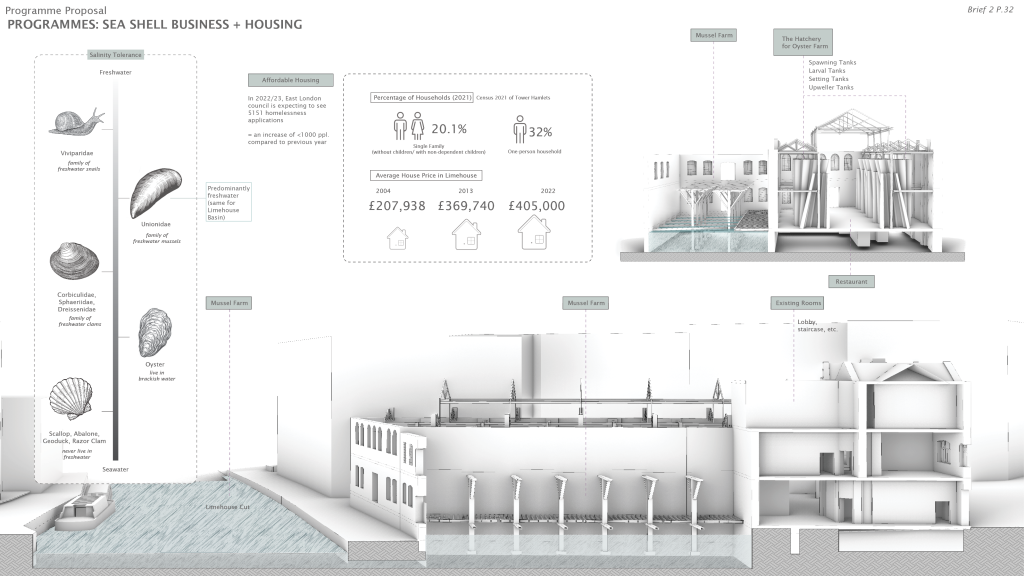

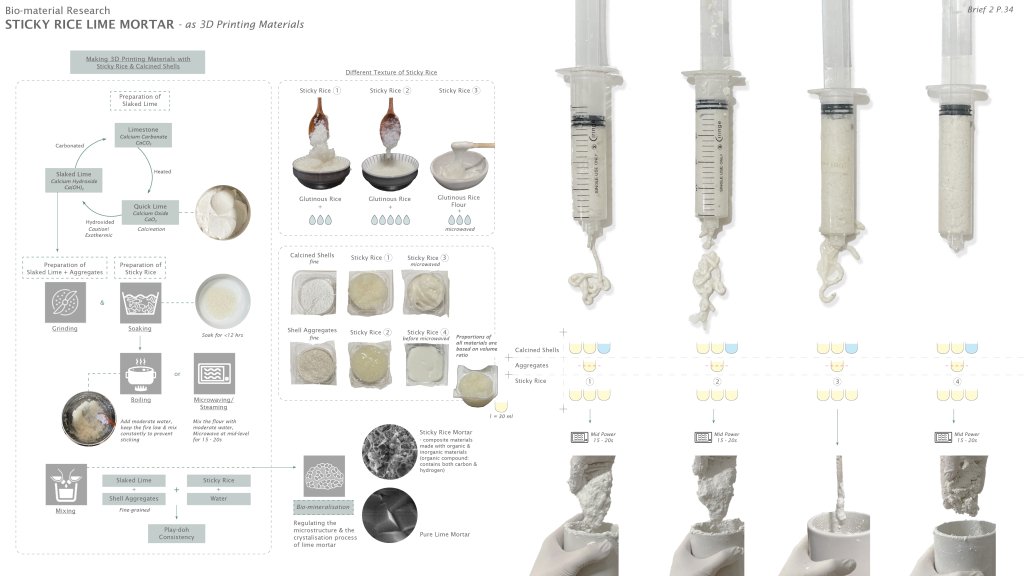

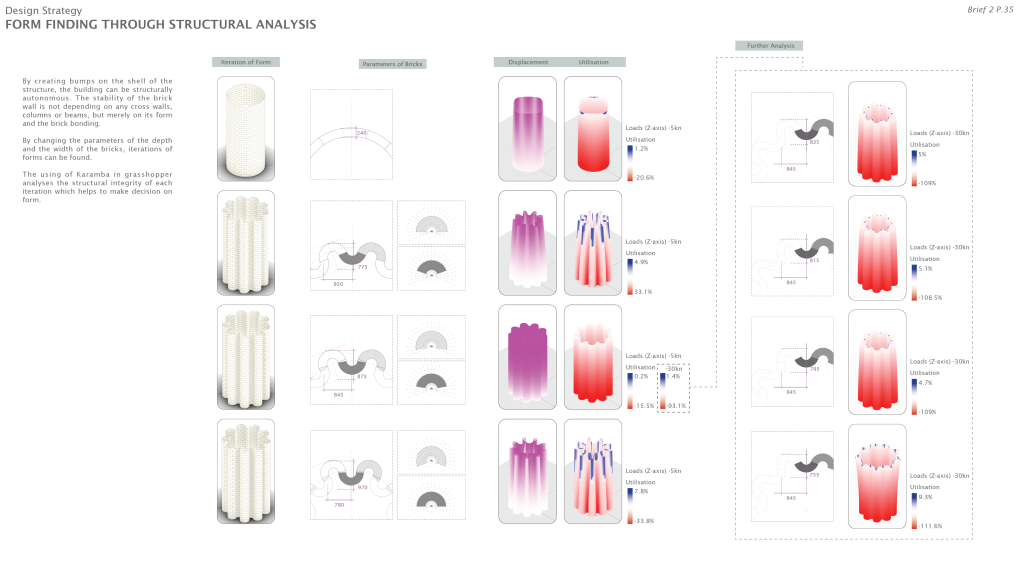

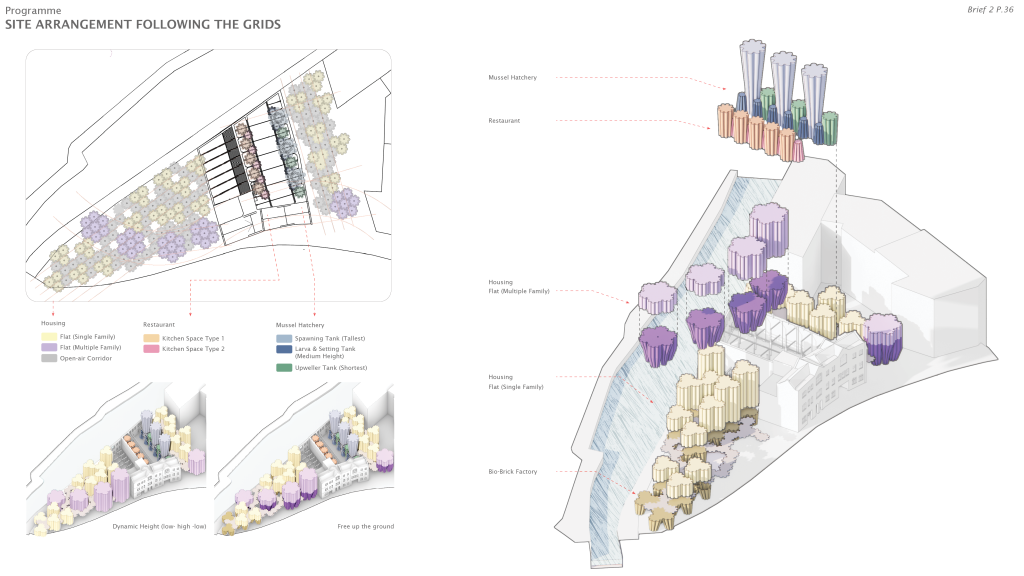

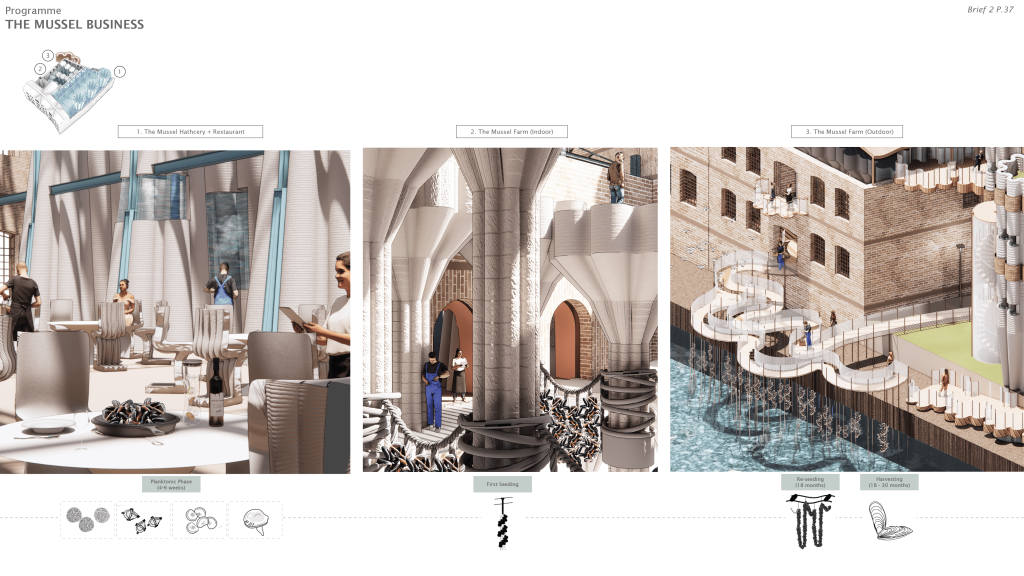

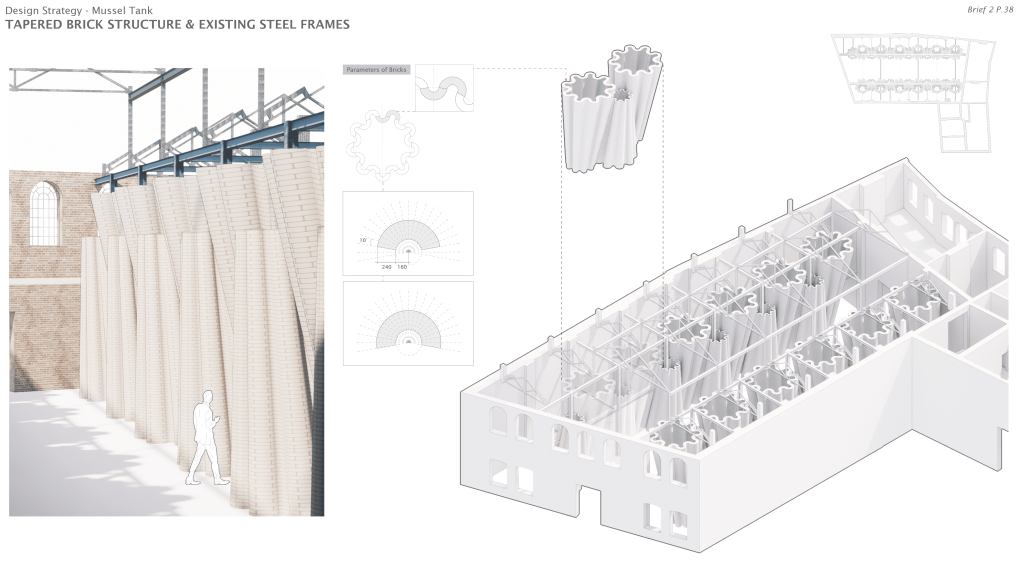

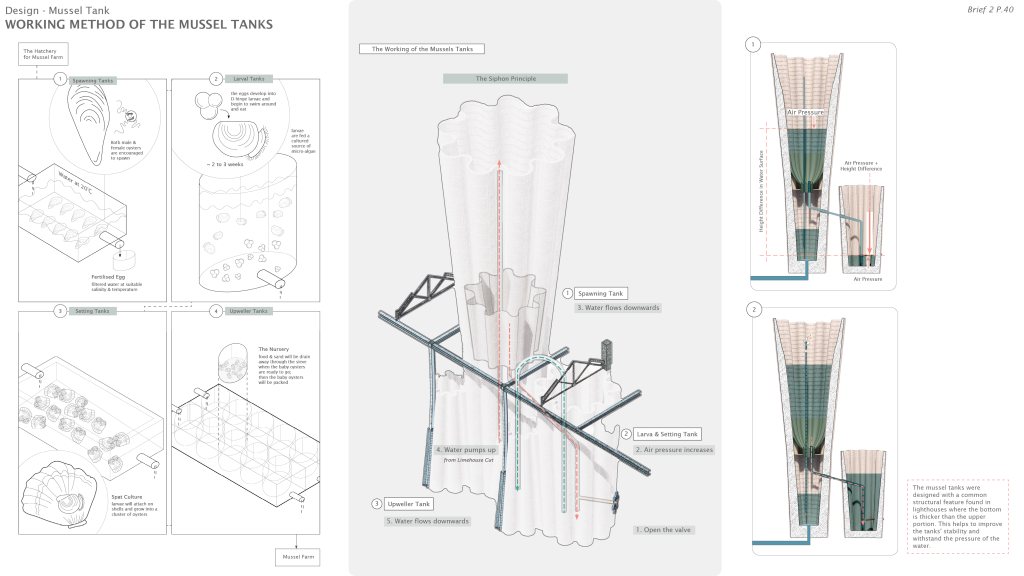

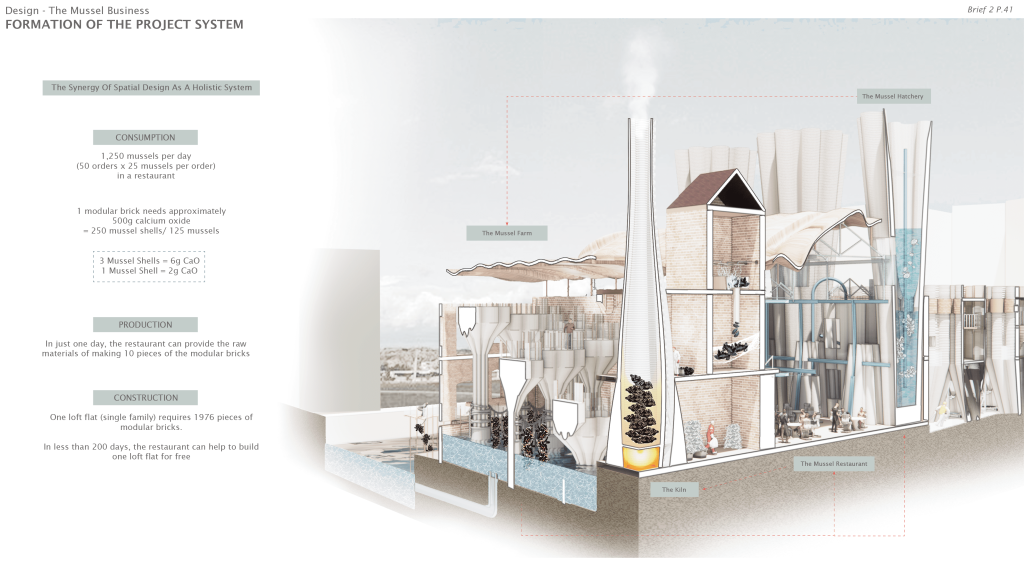

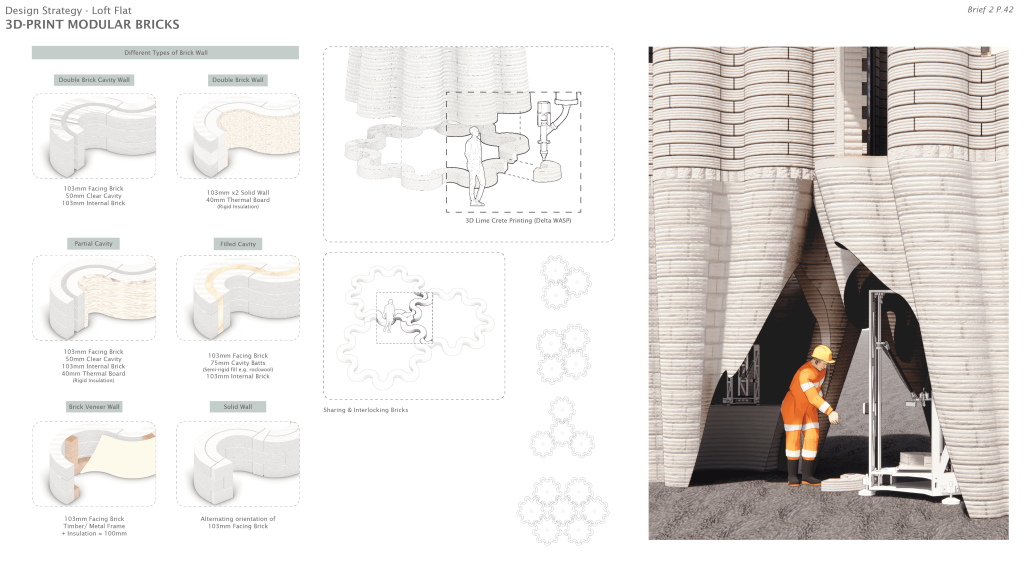

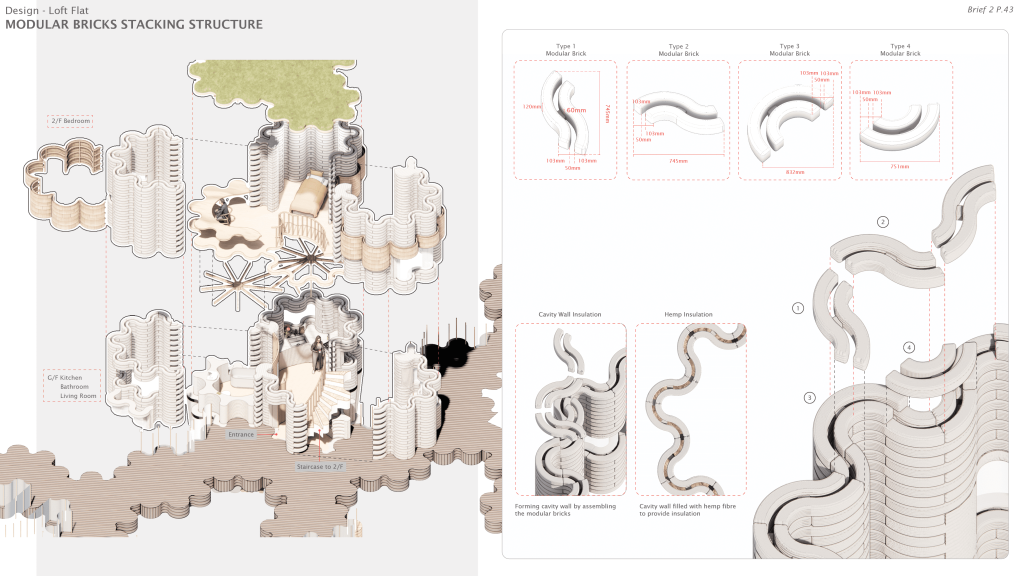

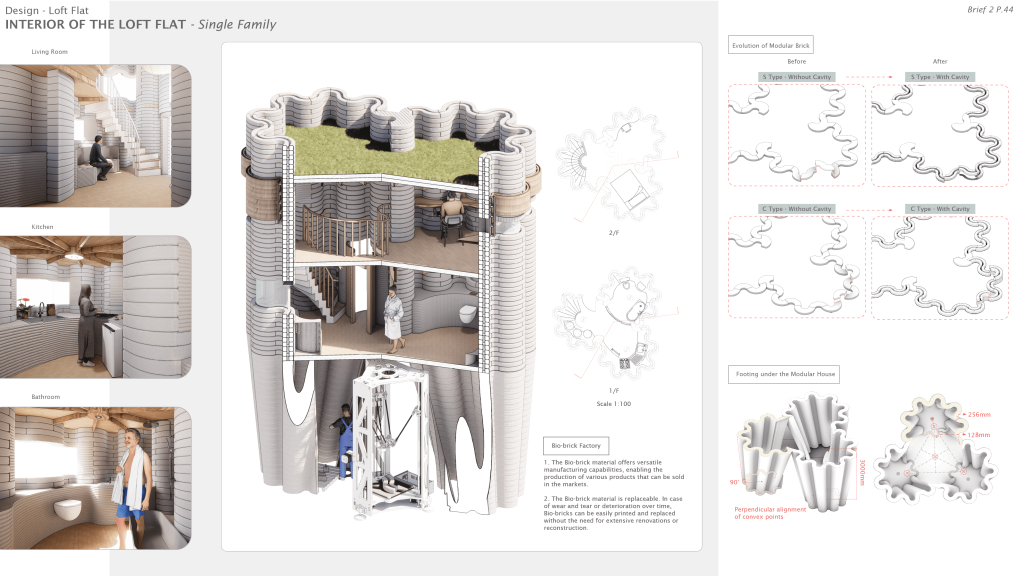

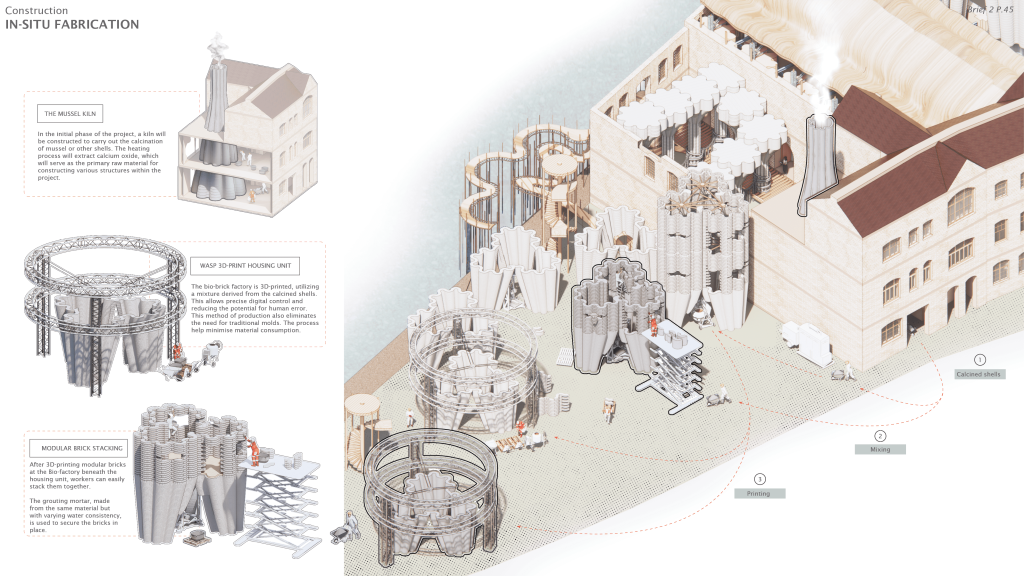

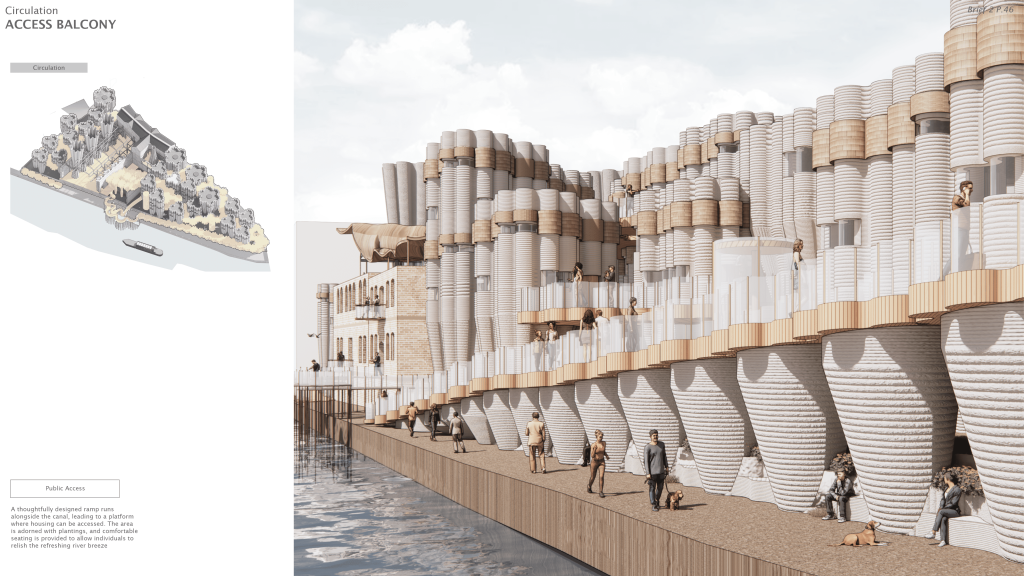

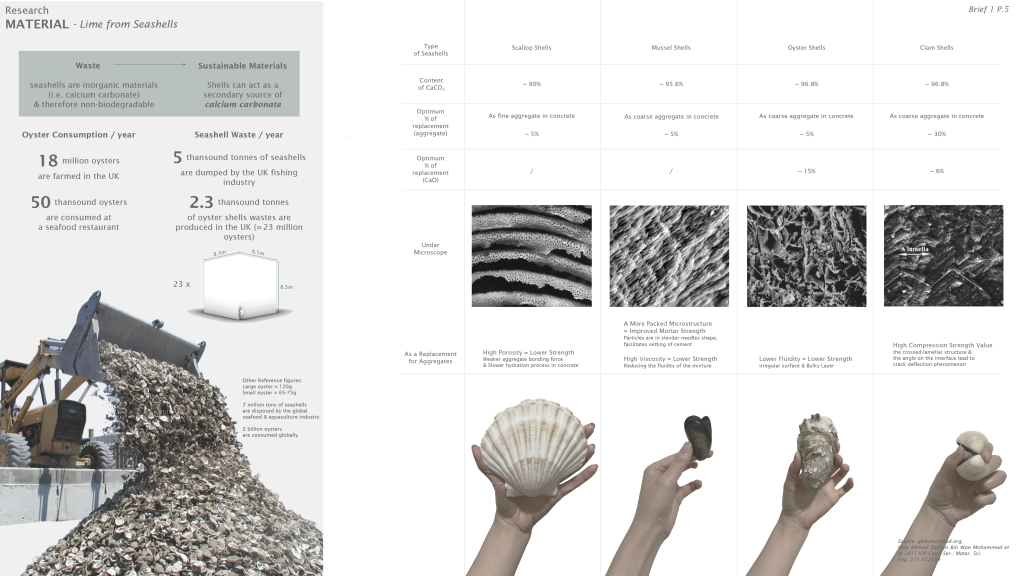

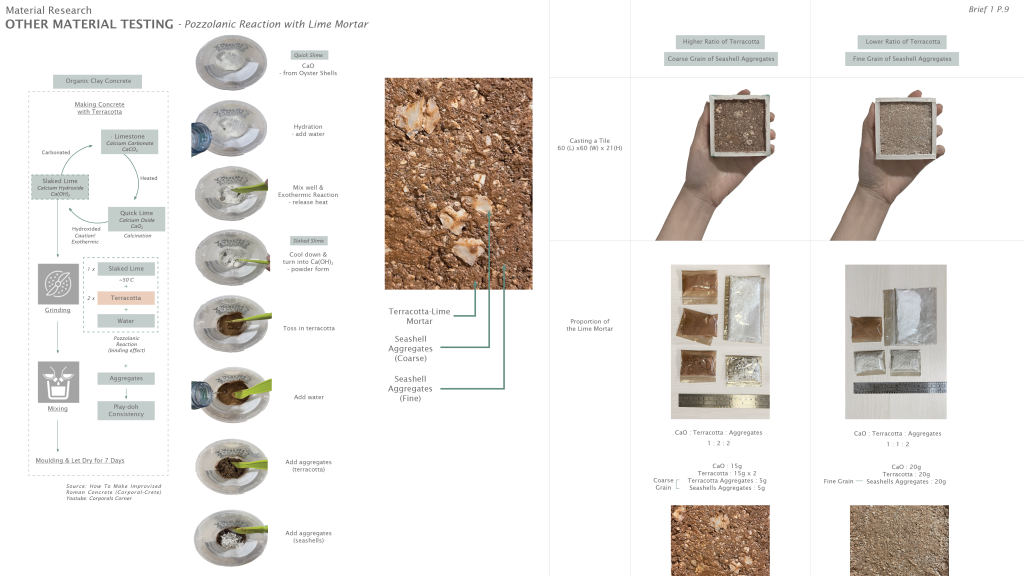

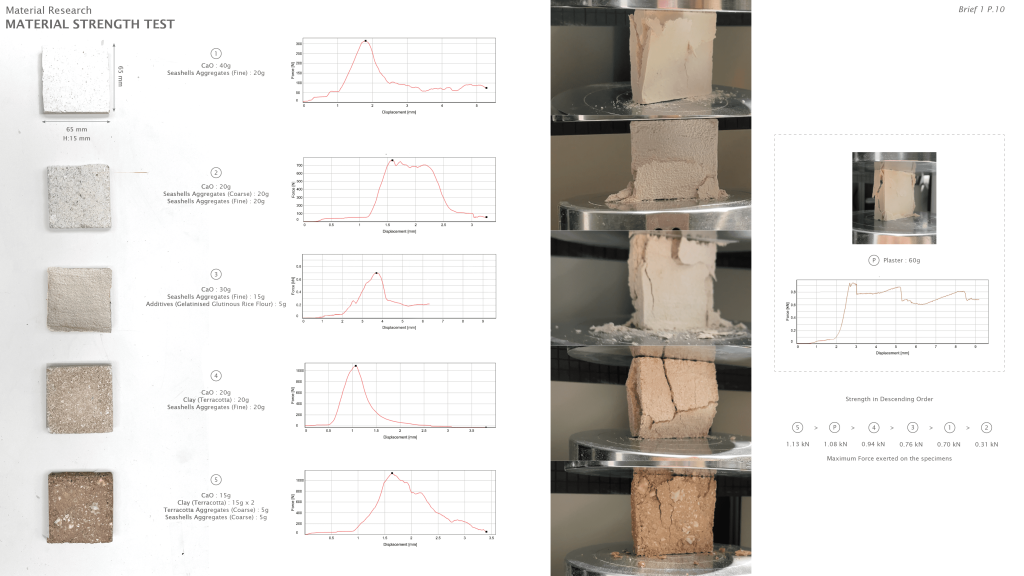

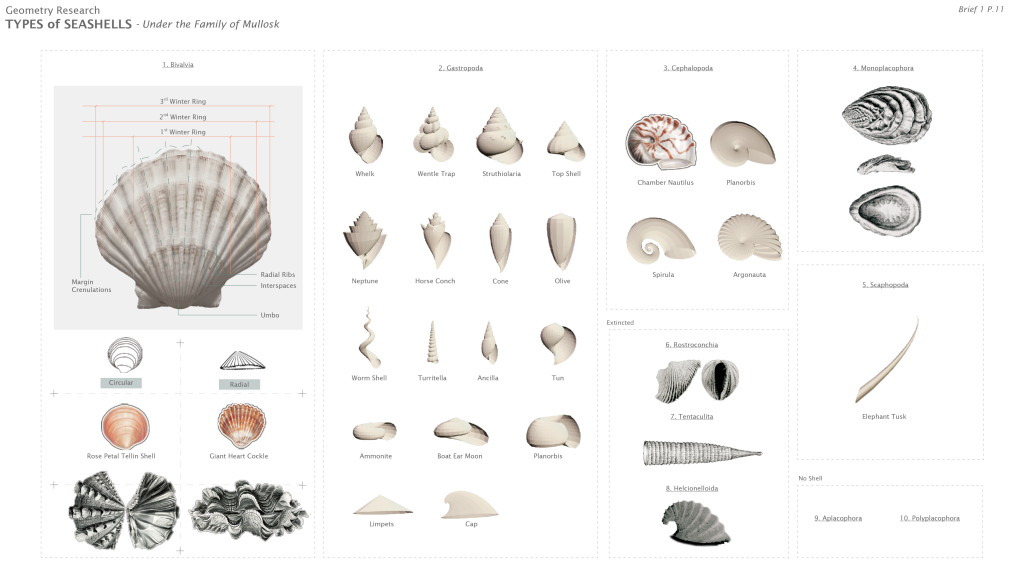

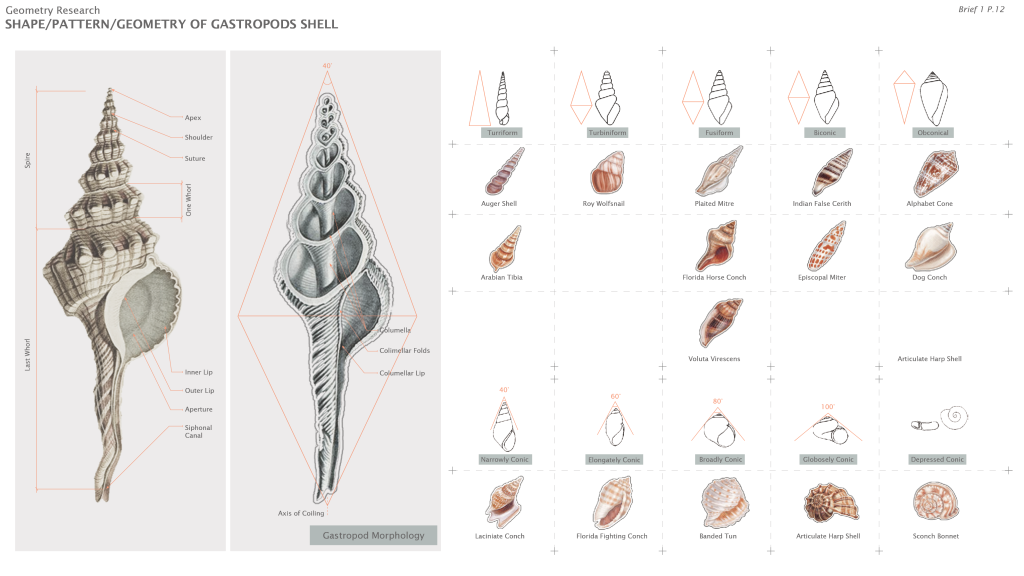

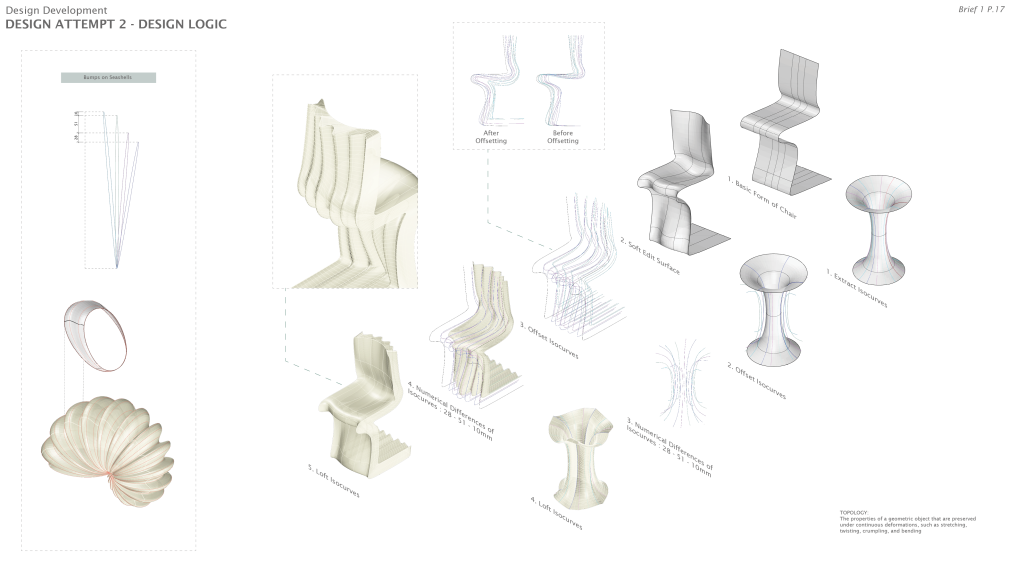

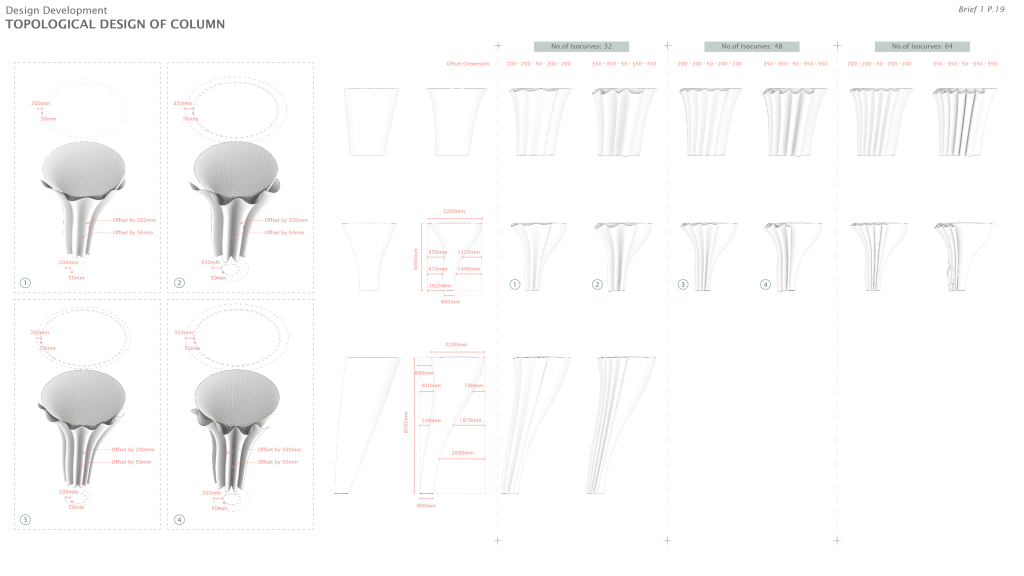

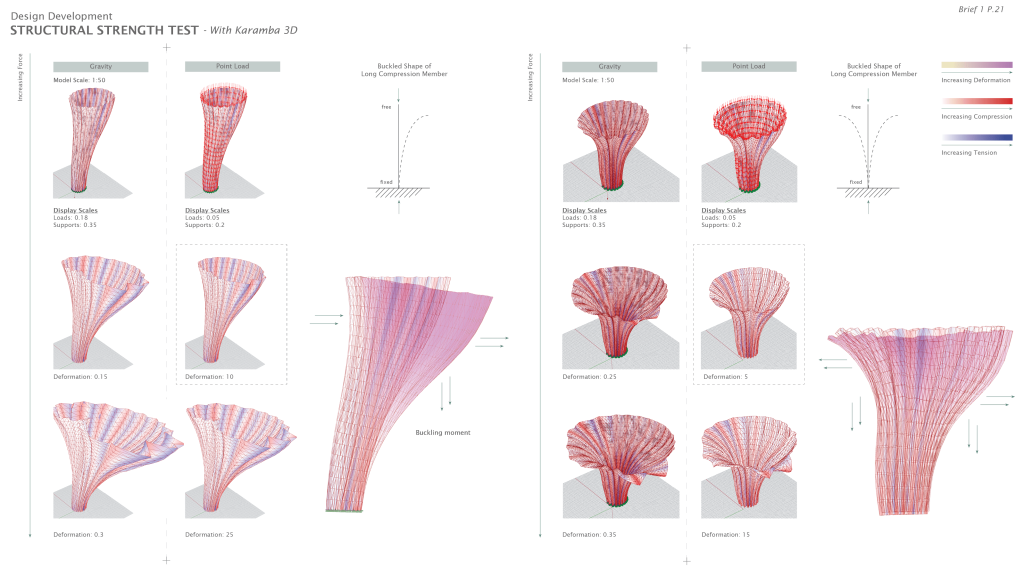

In addressing the problem of food waste, this project implements a cradle-to-cradle system that transforms seashells into bio-bricks. This system encompasses cost-effective food catering services through local mussel cultivation and the repurposing of seashell waste, serving as the foundation for other project elements. By implementing thoughtfully designed programmes, this project aims to achieve maximum synergy between its various components.

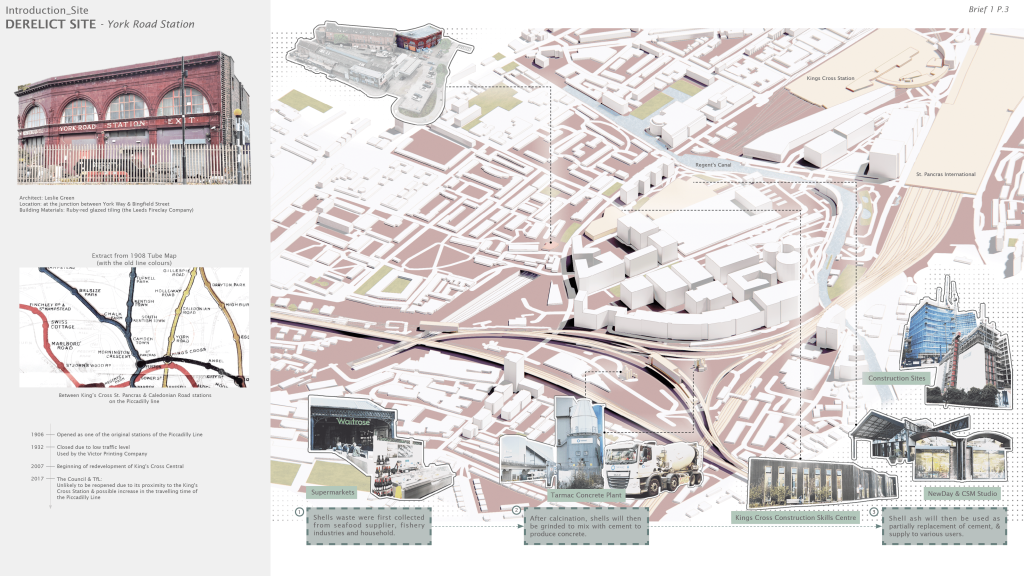

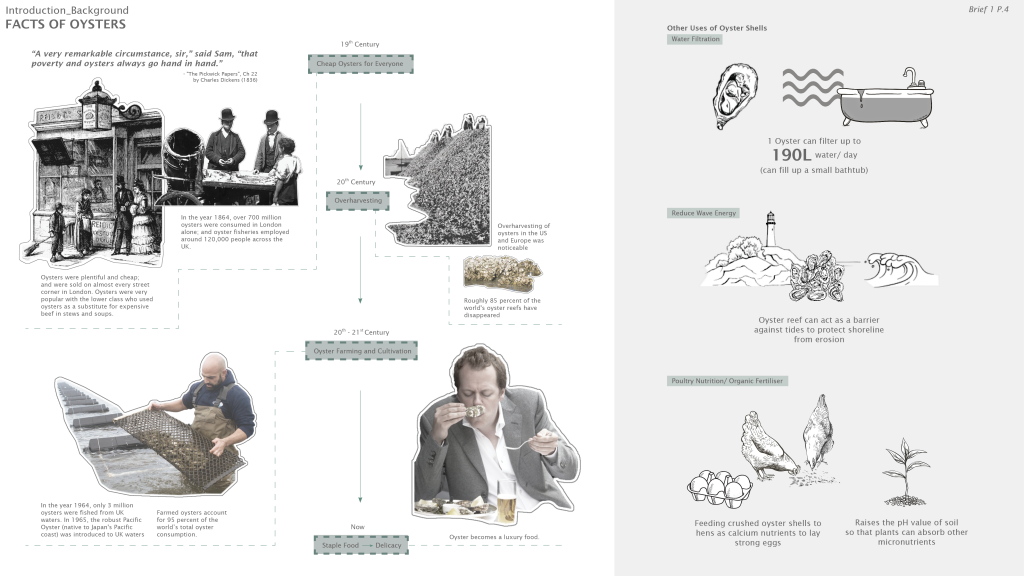

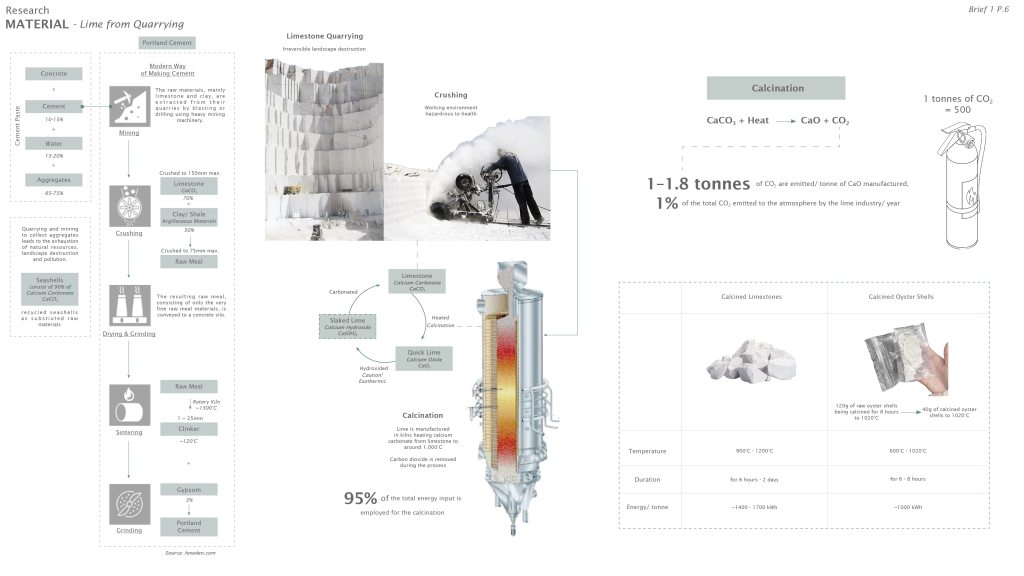

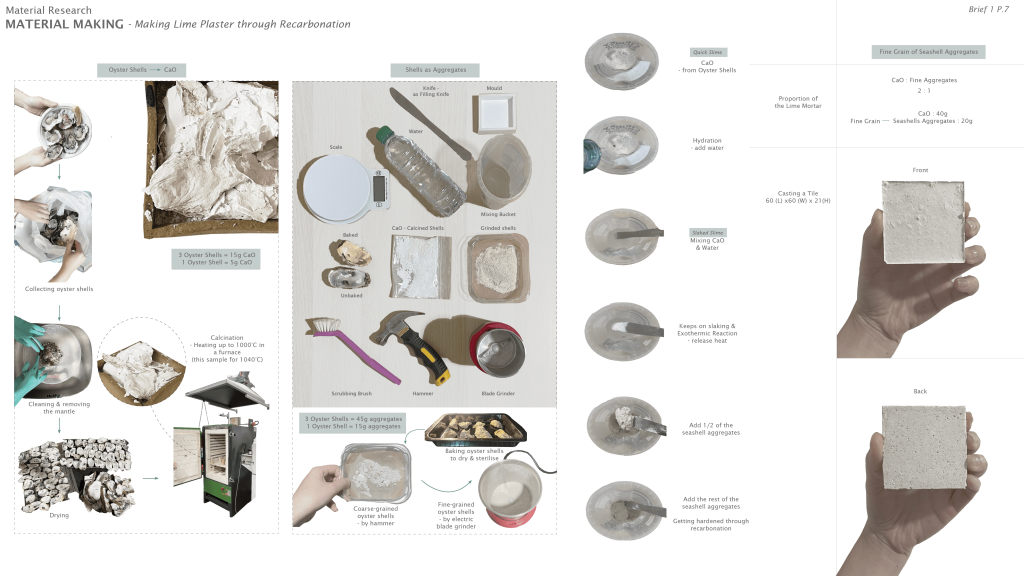

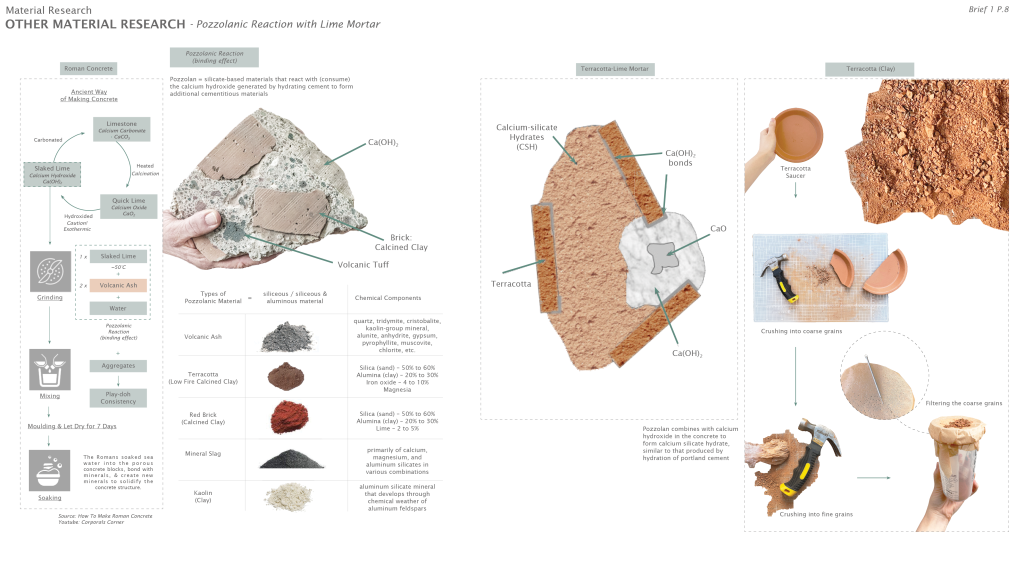

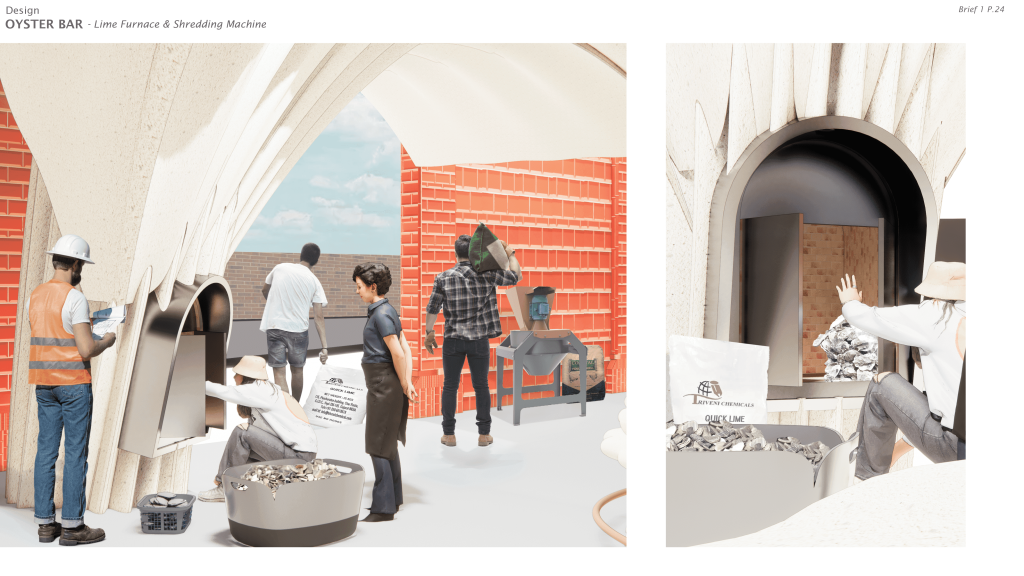

The project aims to design a food consumption system that produces zero waste by turning oyster shells into calcium oxide. The calcined shells can be made into oyster-crete, the building material of the bar, and into other calcium-related products such as chicken supplements. The business plan can therefore subsidise the procurement of oysters and provide affordable oysters for lower-income residents in the neighbourhood.
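As a rough sketch of the chemistry behind the calcining step, and assuming the shells are treated as pure calcium carbonate (real shells also contain a small organic fraction, and the project does not state a kiln temperature), the reaction and its theoretical mass yield can be written as:

```latex
\mathrm{CaCO_3} \xrightarrow{\ \text{heat}\ } \mathrm{CaO} + \mathrm{CO_2}
\qquad
\frac{M_{\mathrm{CaO}}}{M_{\mathrm{CaCO_3}}} \approx \frac{56.08}{100.09} \approx 0.56
```

Under that assumption, 1 kg of shell yields at most about 0.56 kg of calcium oxide, with the remainder released as carbon dioxide.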

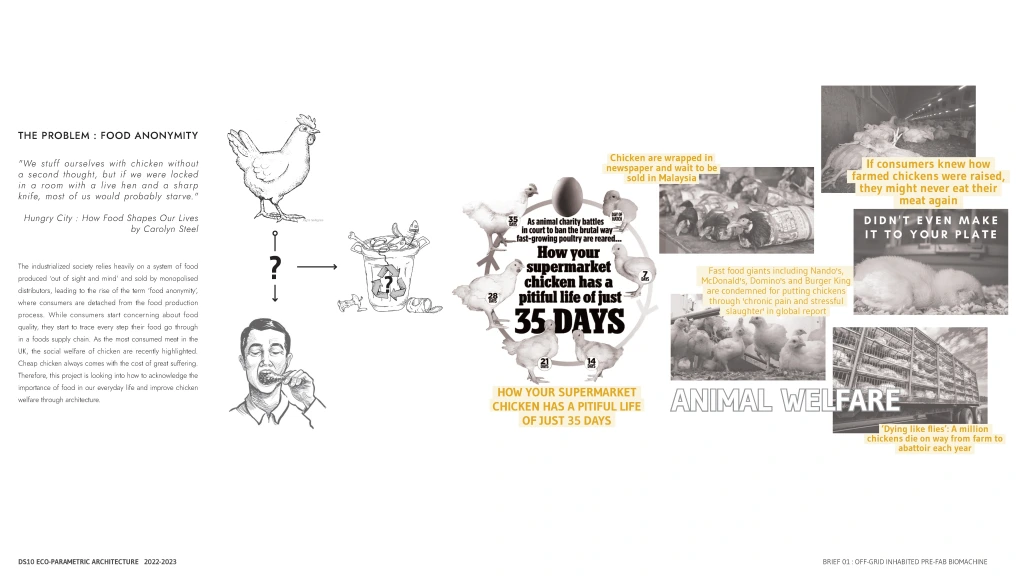

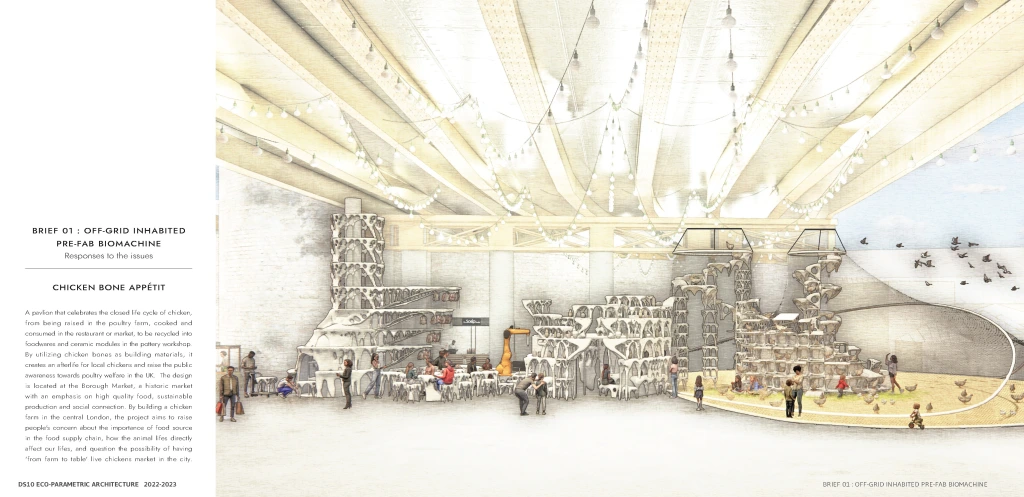

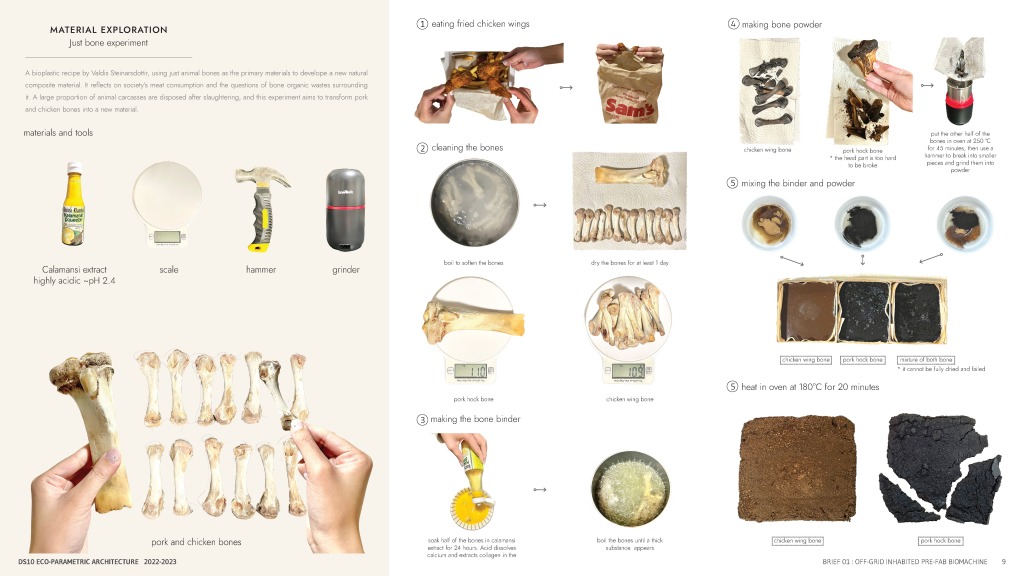

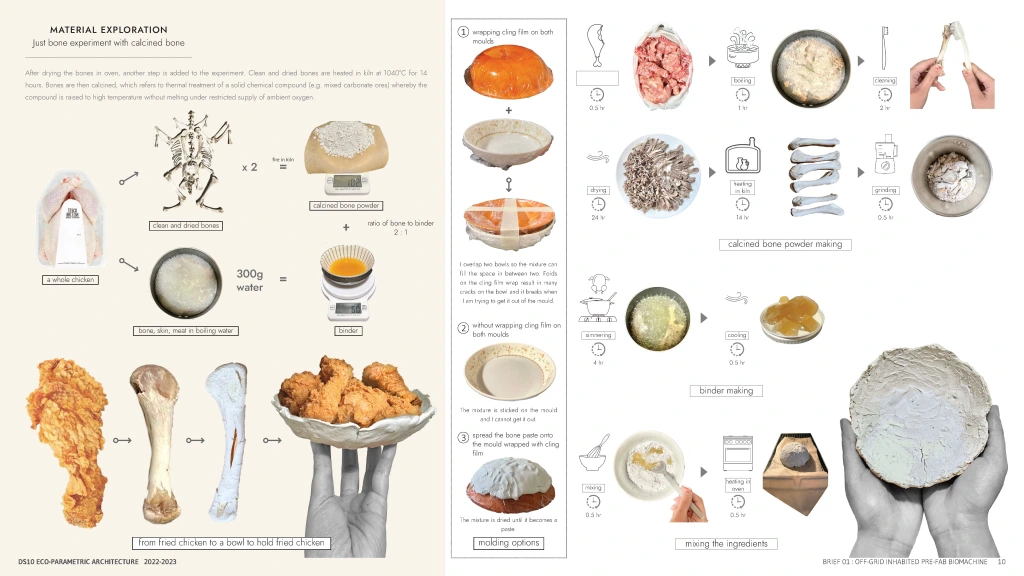

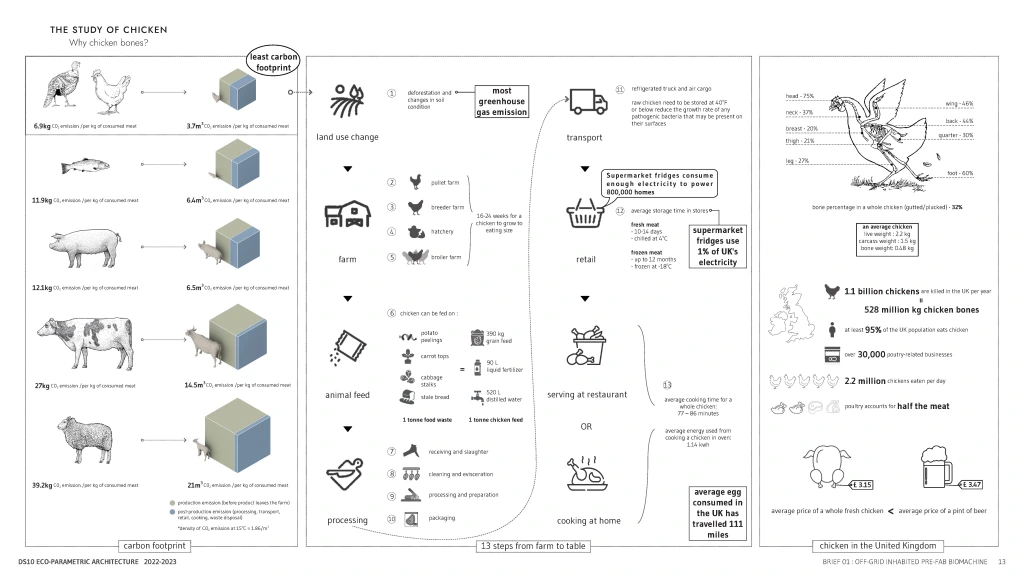

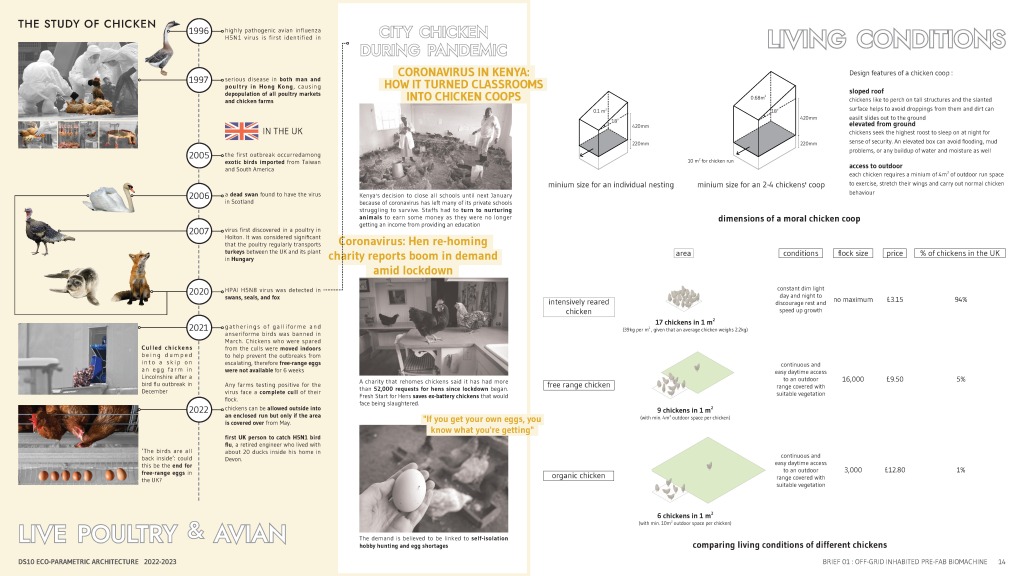

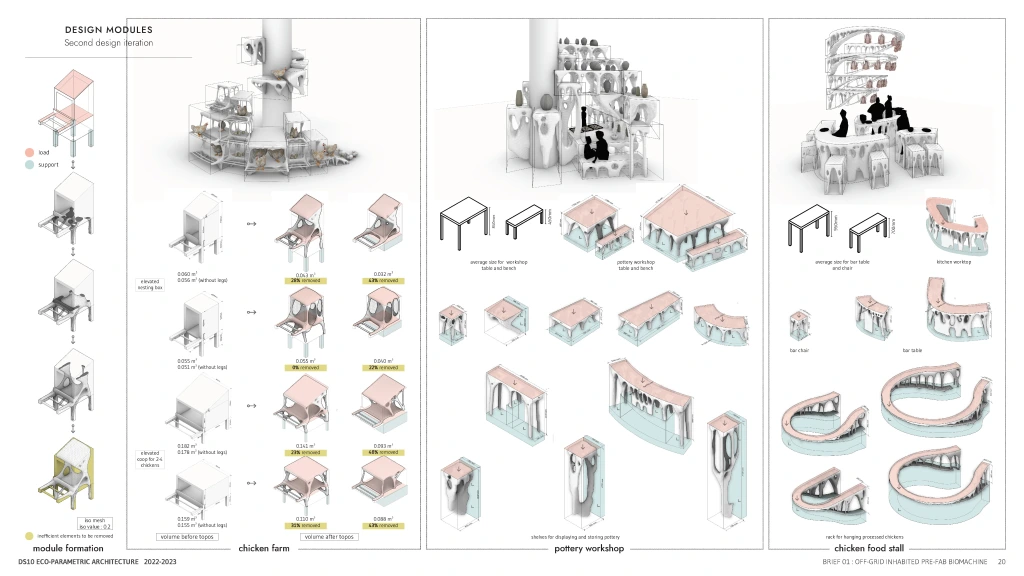

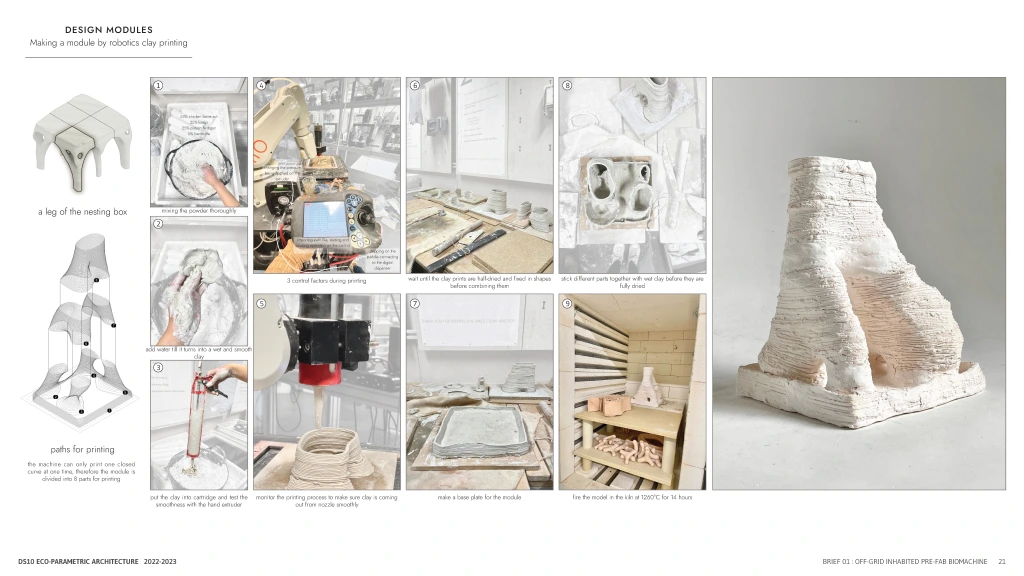

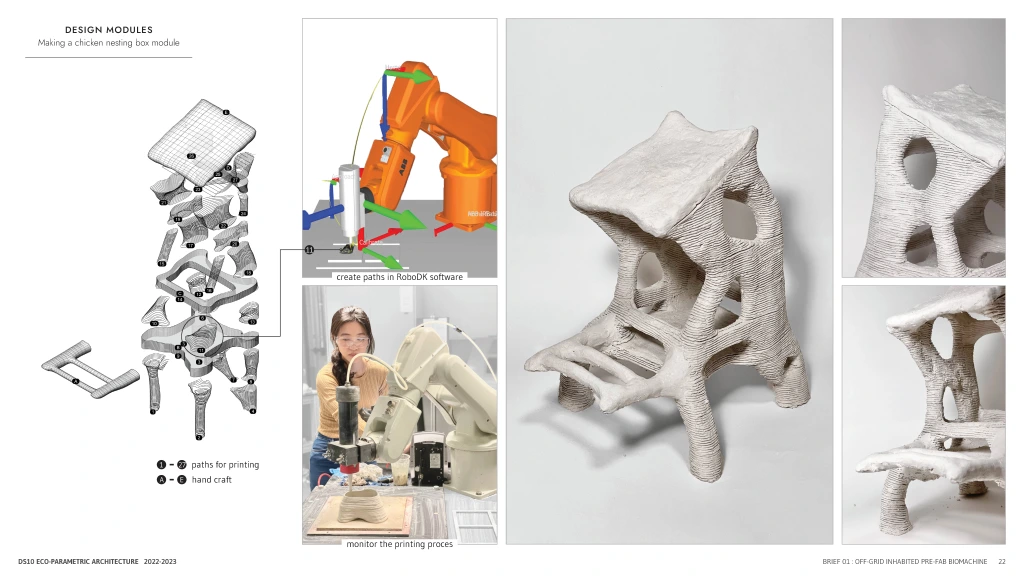

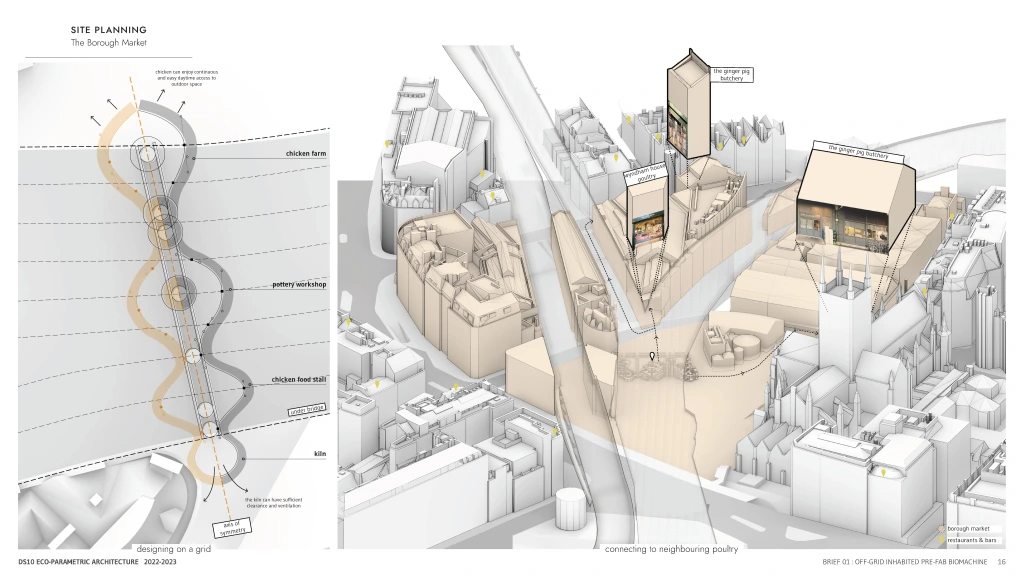

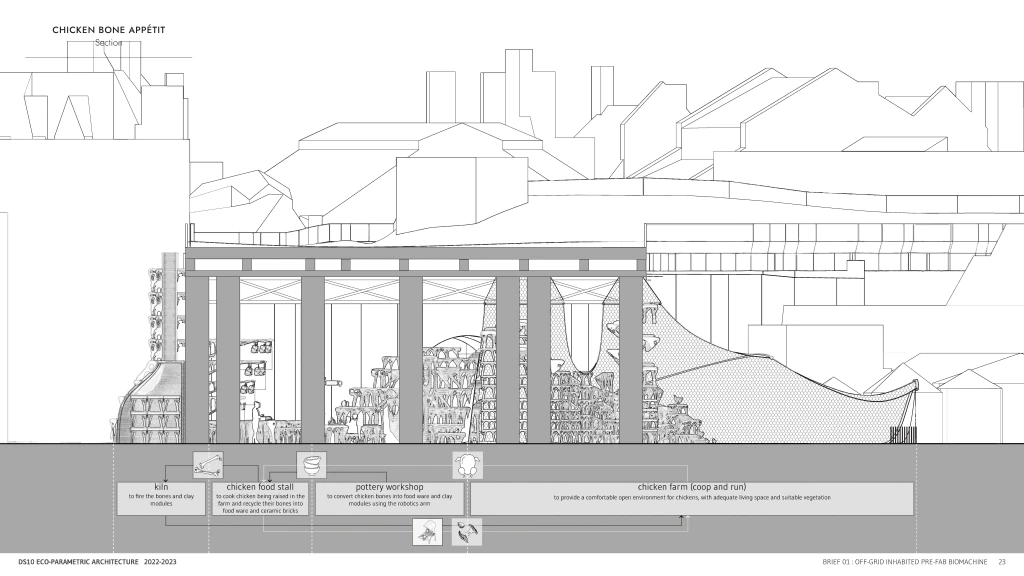

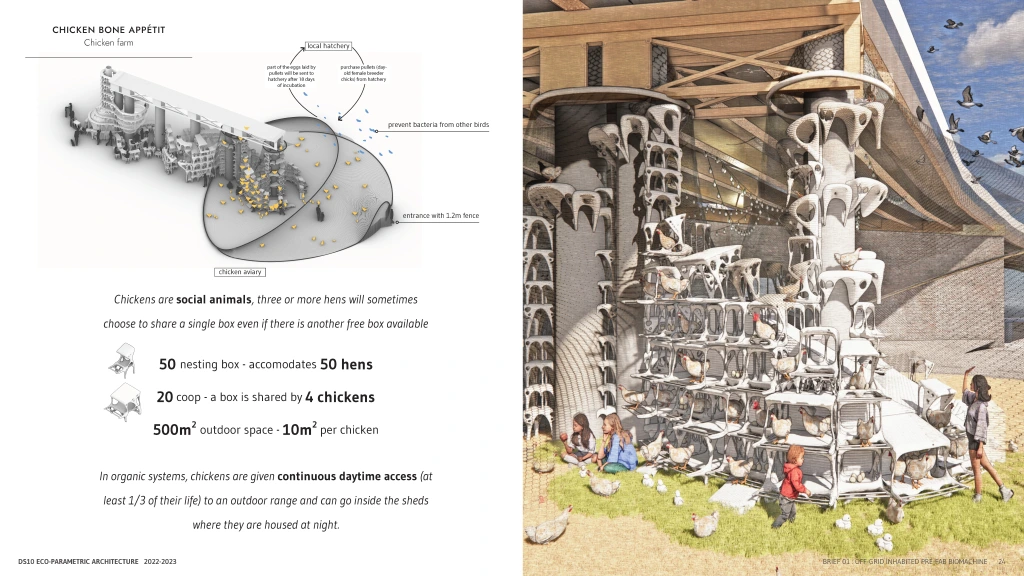

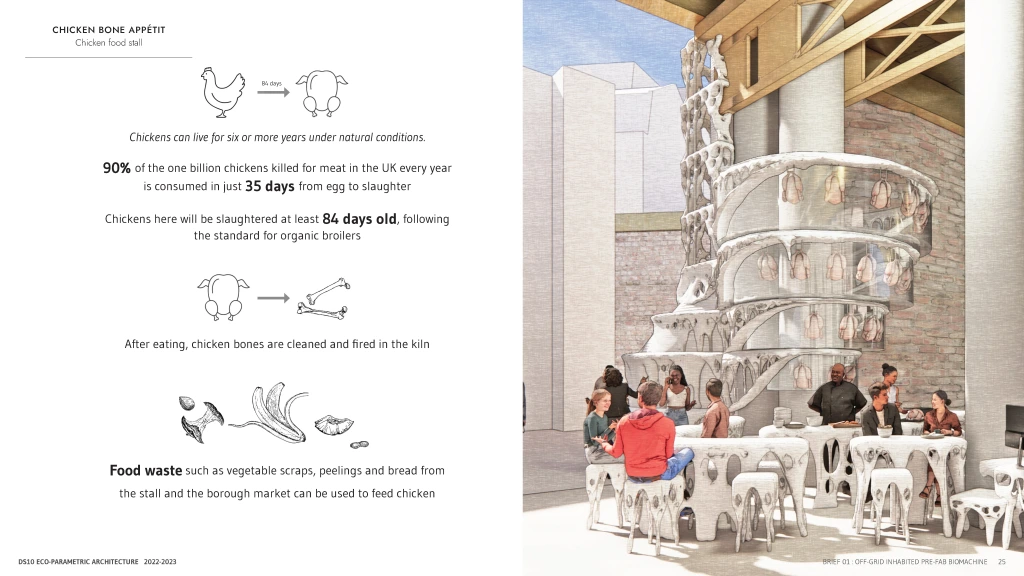

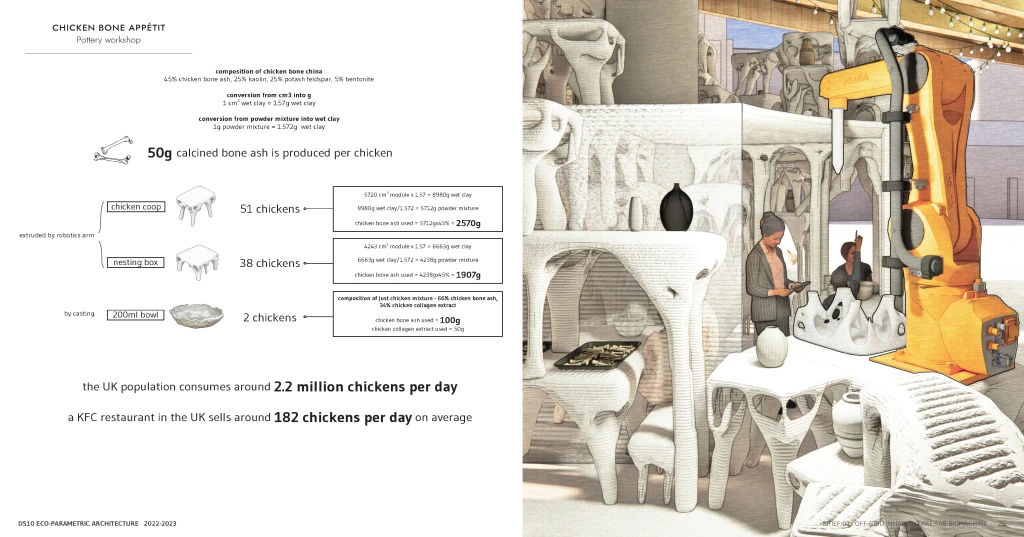

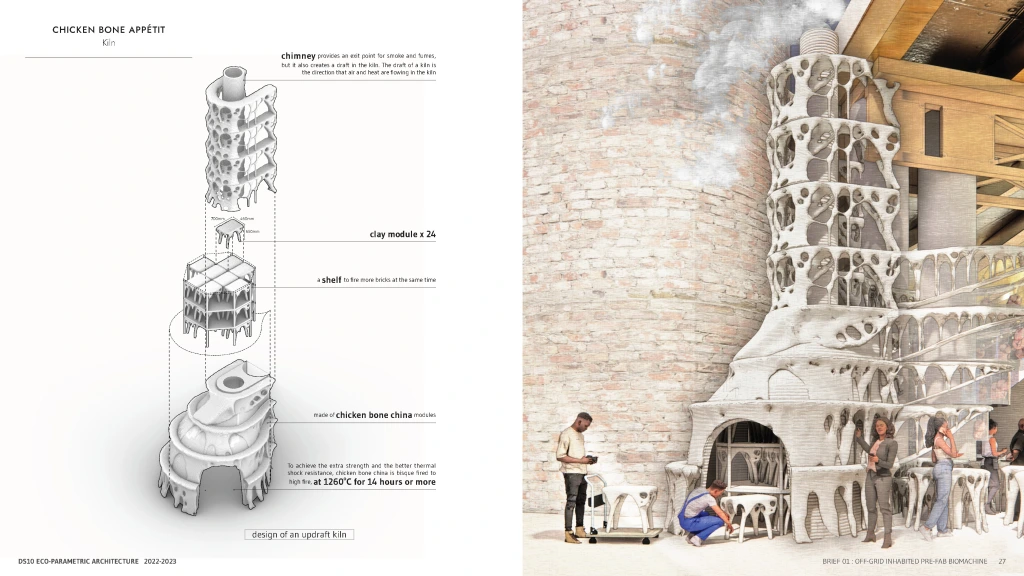

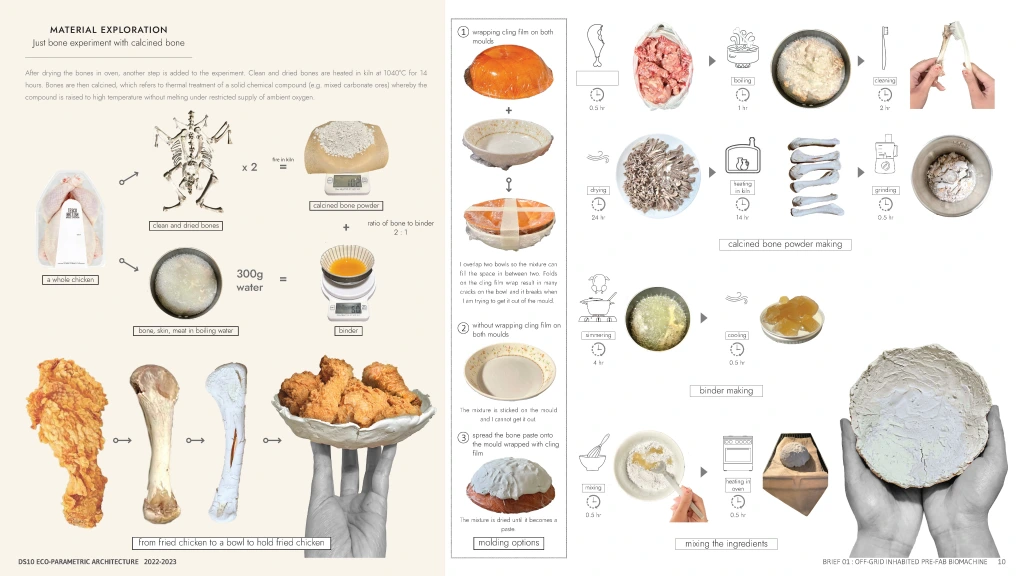

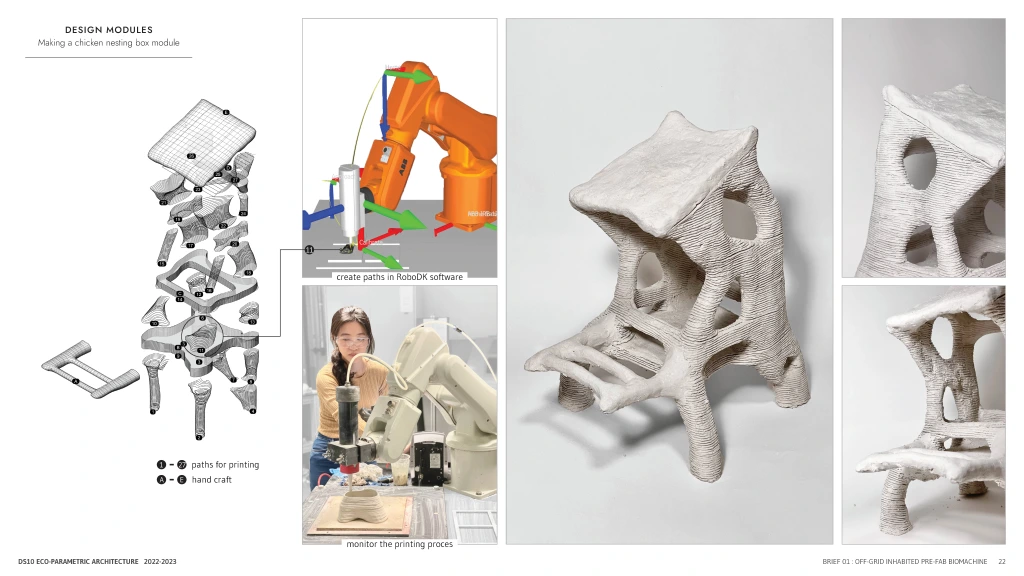

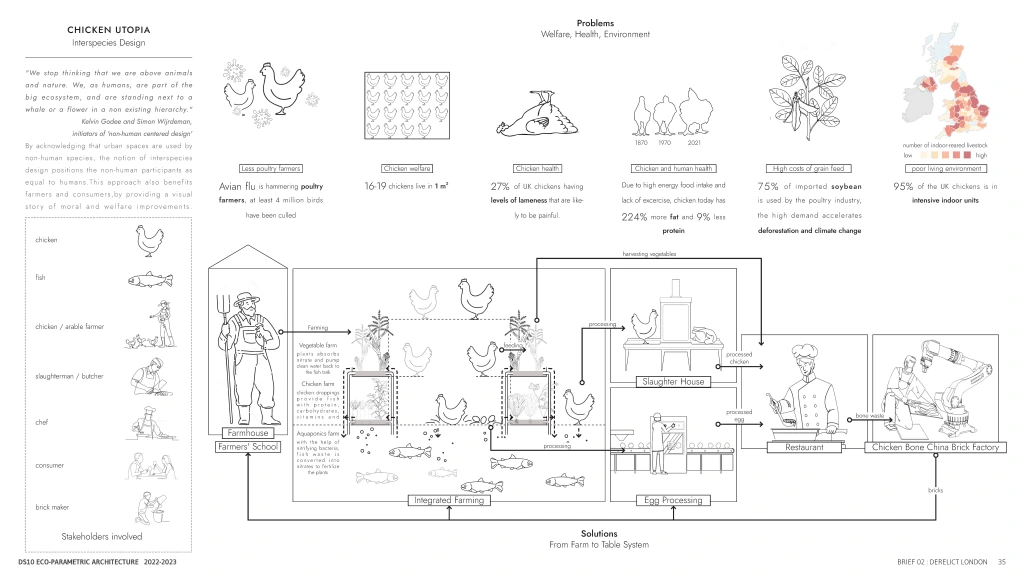

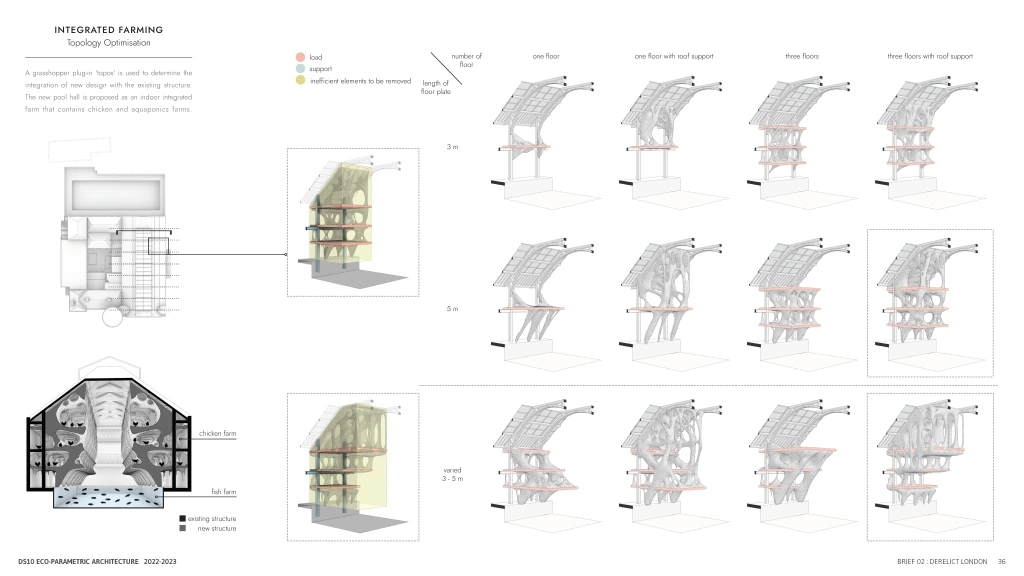

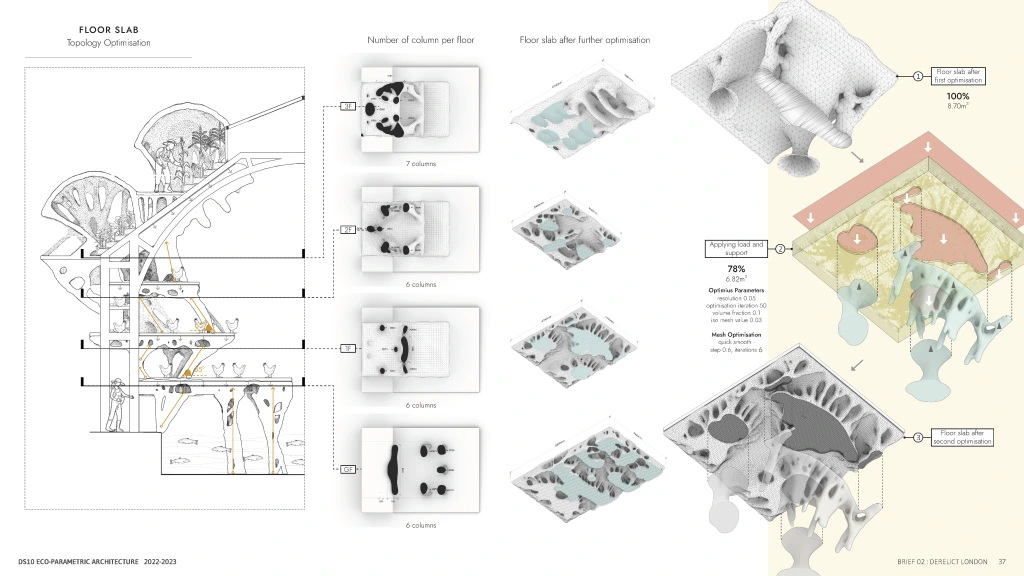

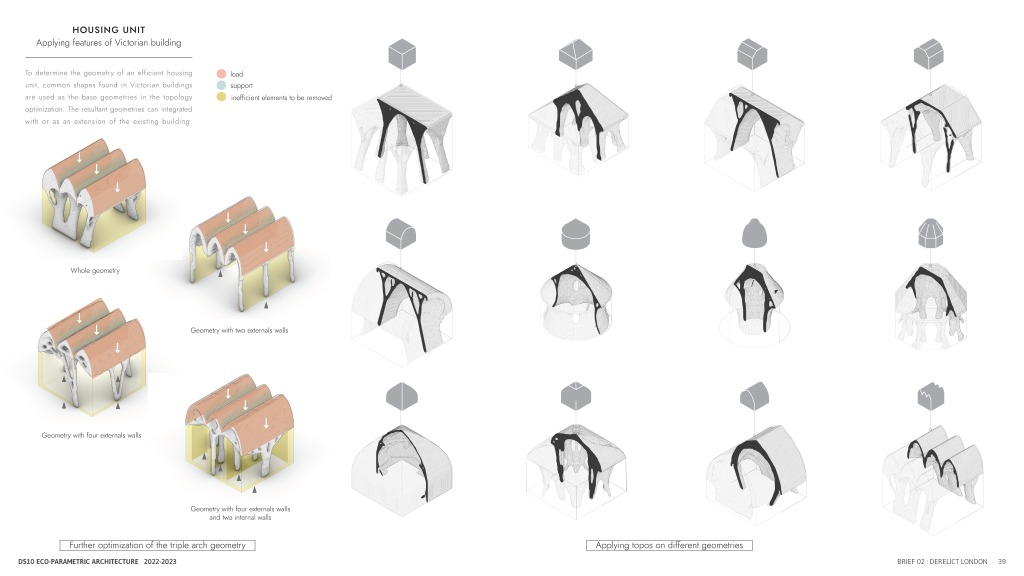

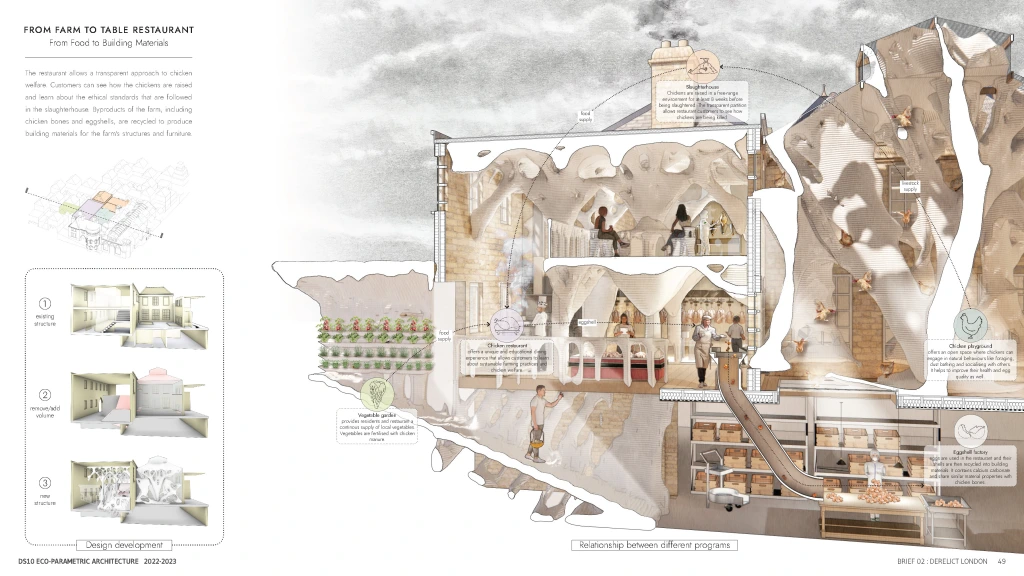

A pavilion that celebrates the closed life cycle of the chicken, from being raised on the poultry farm, to being cooked and consumed in the restaurant or market, to being recycled into foodware and ceramic modules in the pottery workshop.

By using chicken bones as a building material, it creates an afterlife for local chickens and raises public awareness of poultry welfare in the UK. The design is located at Borough Market, a historic market with an emphasis on high-quality food, sustainable production and social connection. By building a chicken farm in central London, the project aims to raise awareness of the importance of food sources in the supply chain and of how animals' lives directly affect our own, and to question the possibility of a ‘farm to table’ live chicken market in the city.

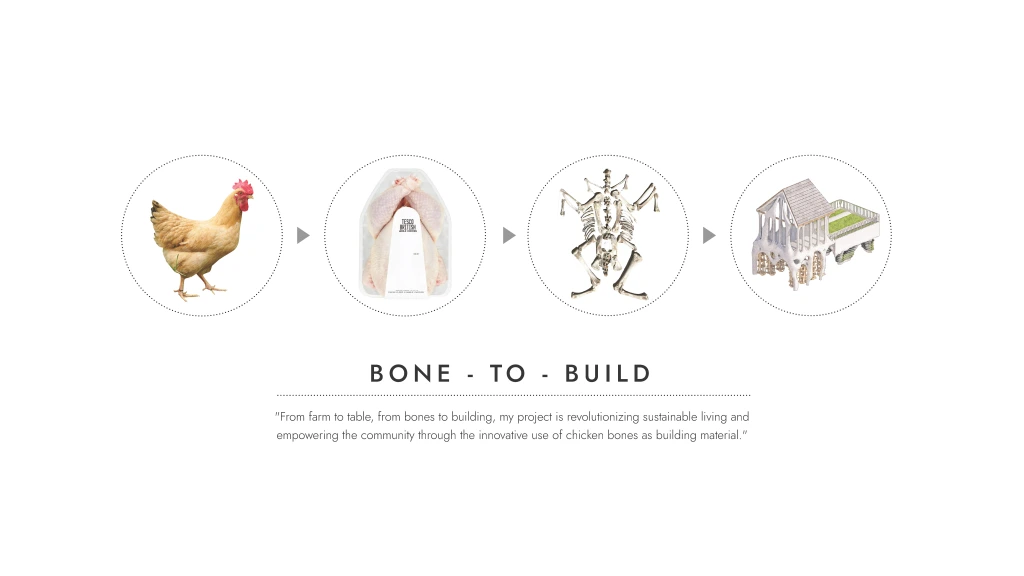

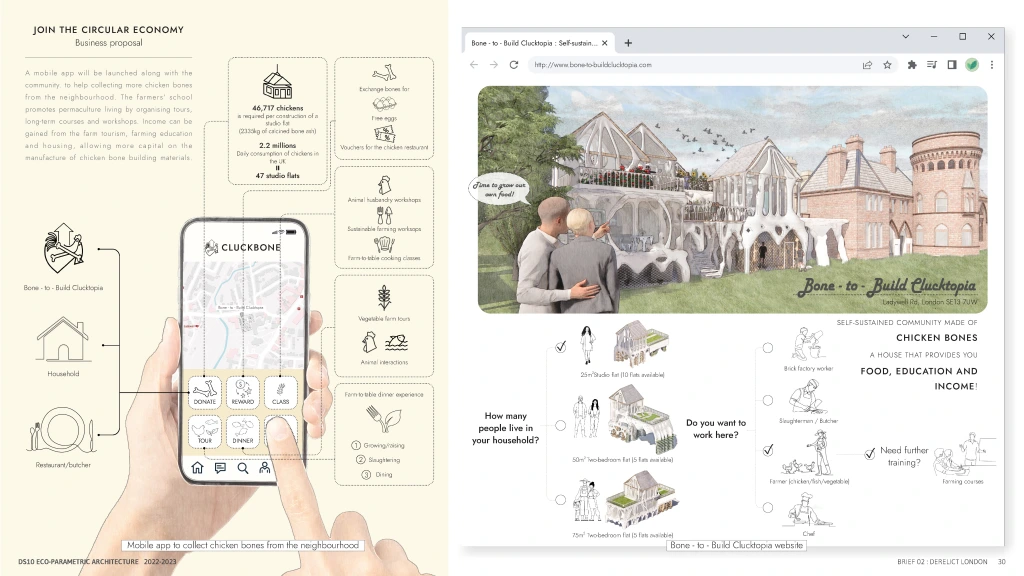

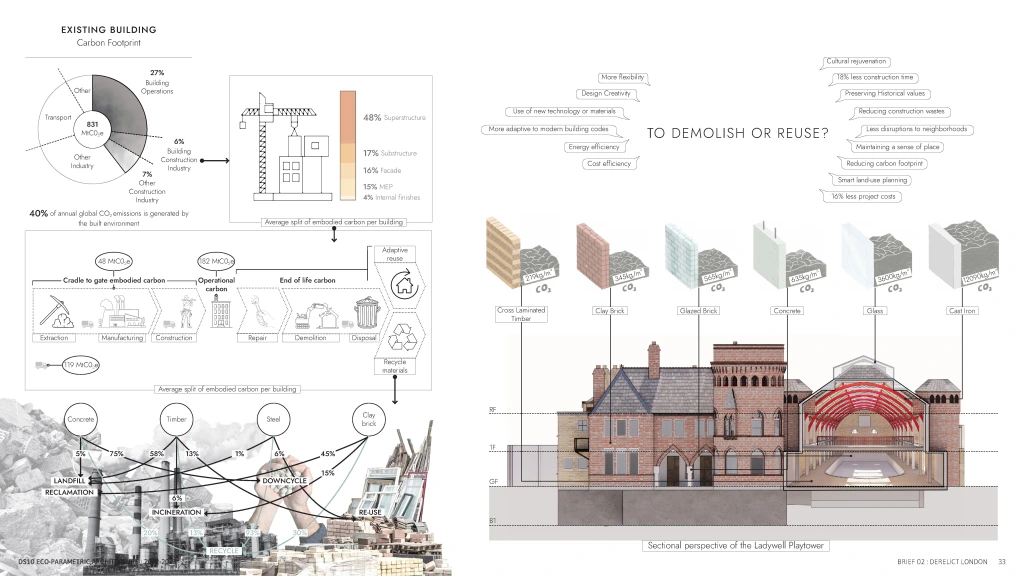

The Bone-to-Build Clucktopia project is a sustainable living project that aims to revolutionize the way we live, build, and consume. At the heart of this project is the innovative use of chicken bones as a building material, transforming what was once considered waste into a valuable resource.

The project begins with the happy chickens that live in Clucktopia’s spacious and comfortable coops, where they are free to roam, perch, and scratch. These happy chickens provide more than just eggs and meat – they also produce an abundance of bones that would otherwise go to waste.

The use of chicken bones as building material is just one aspect of Clucktopia’s sustainable living approach. The project also includes a farm-to-table restaurant, where visitors can enjoy educational experiences, such as tours of the farm and workshops on sustainable living practices.

Clucktopia is not just a farm, but a social hub that brings people together and empowers the community through sustainable living practices. The project is a solution to the growing problem of waste and the need for sustainable living practices.

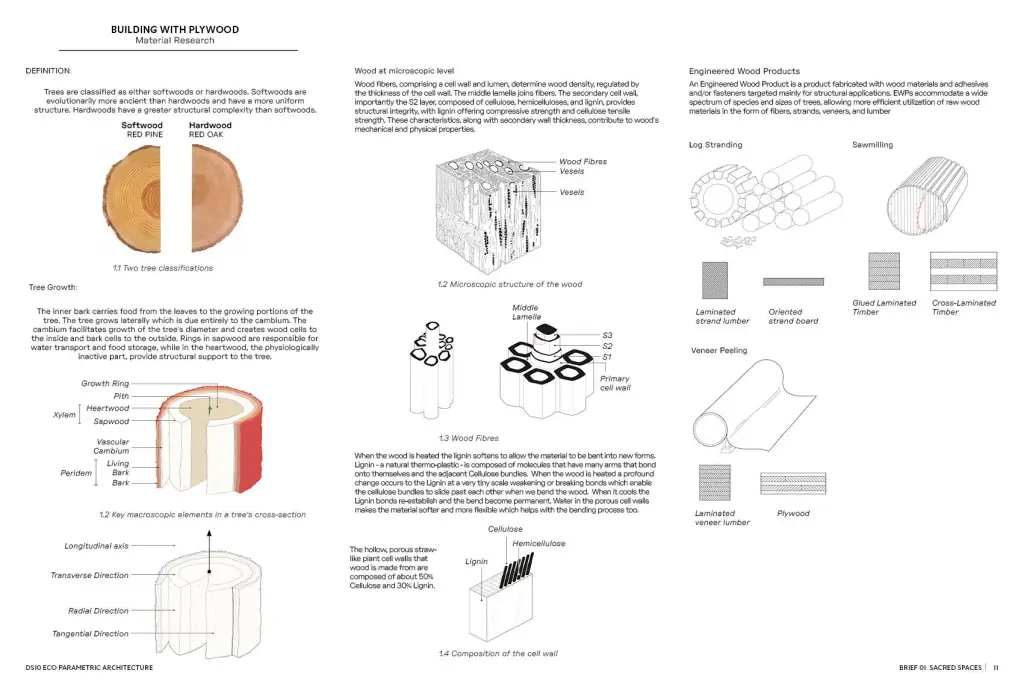

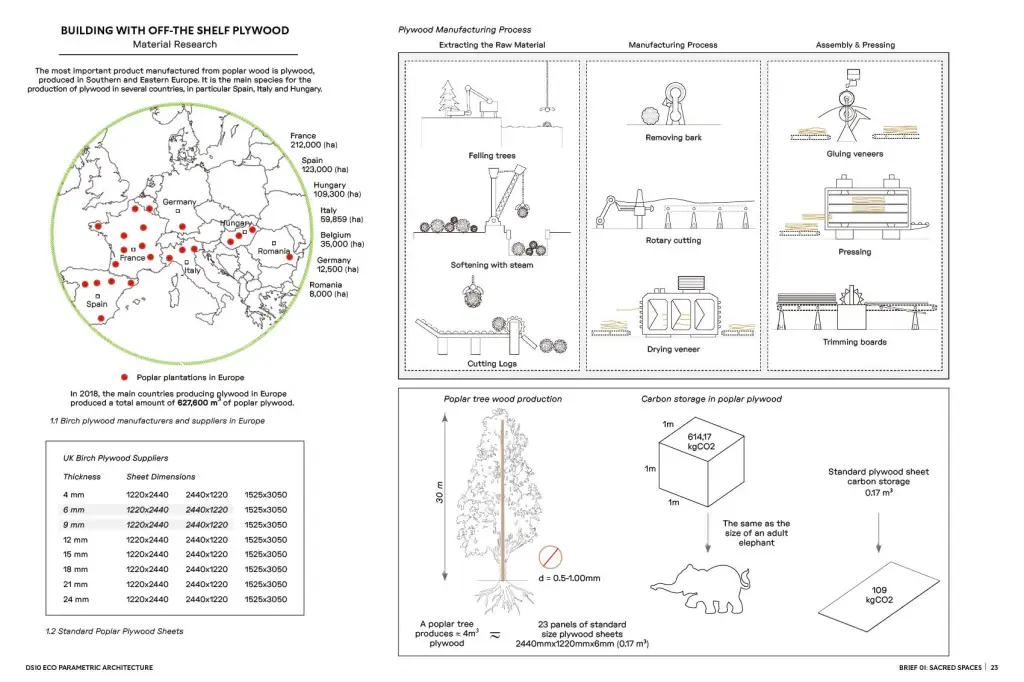

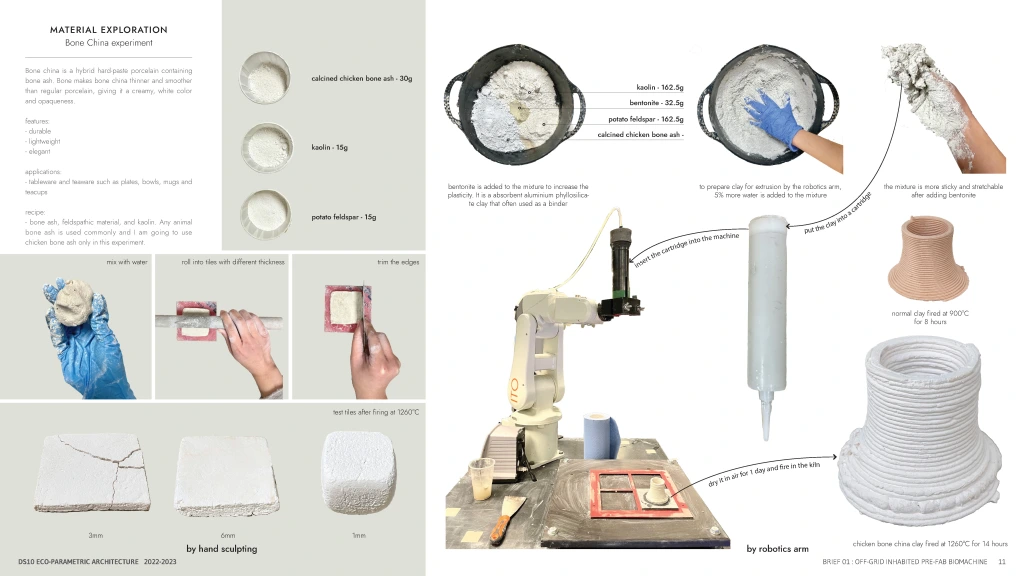

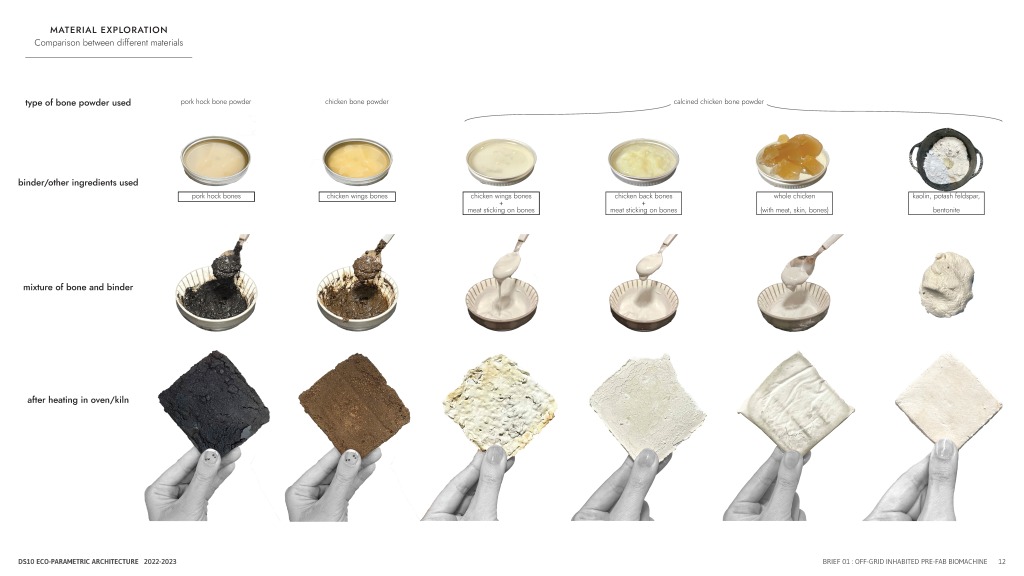

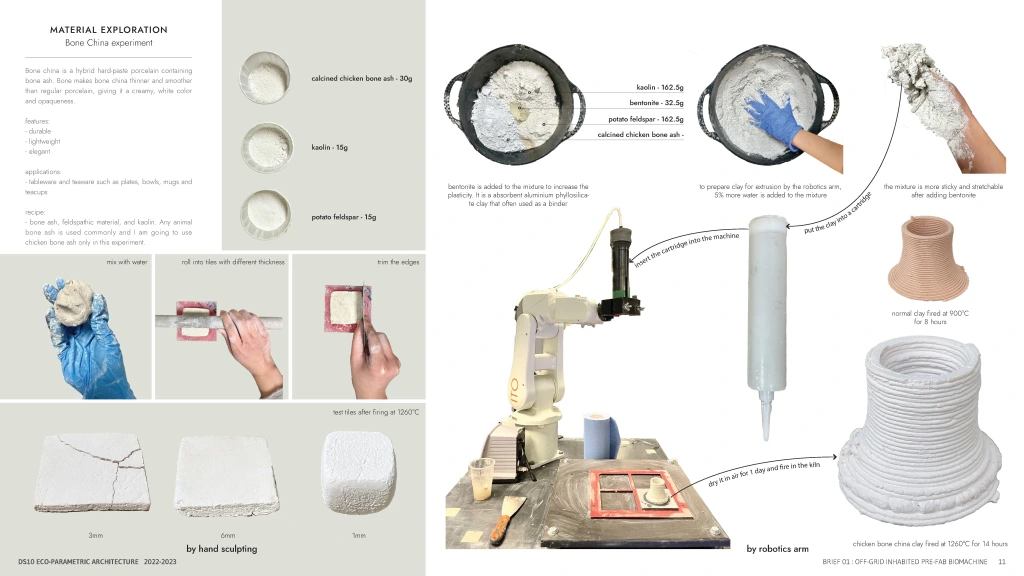

Material Research

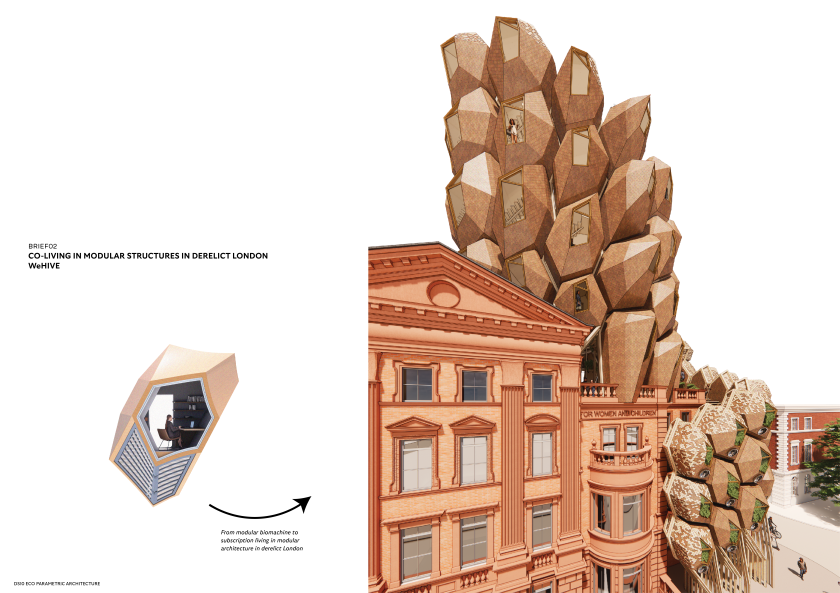

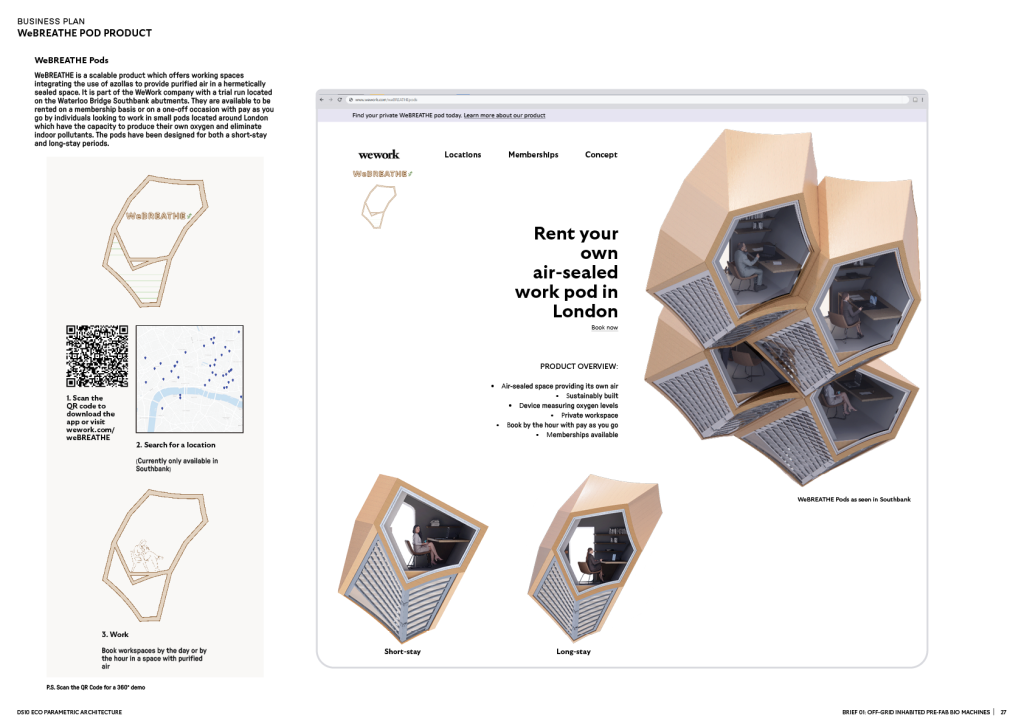

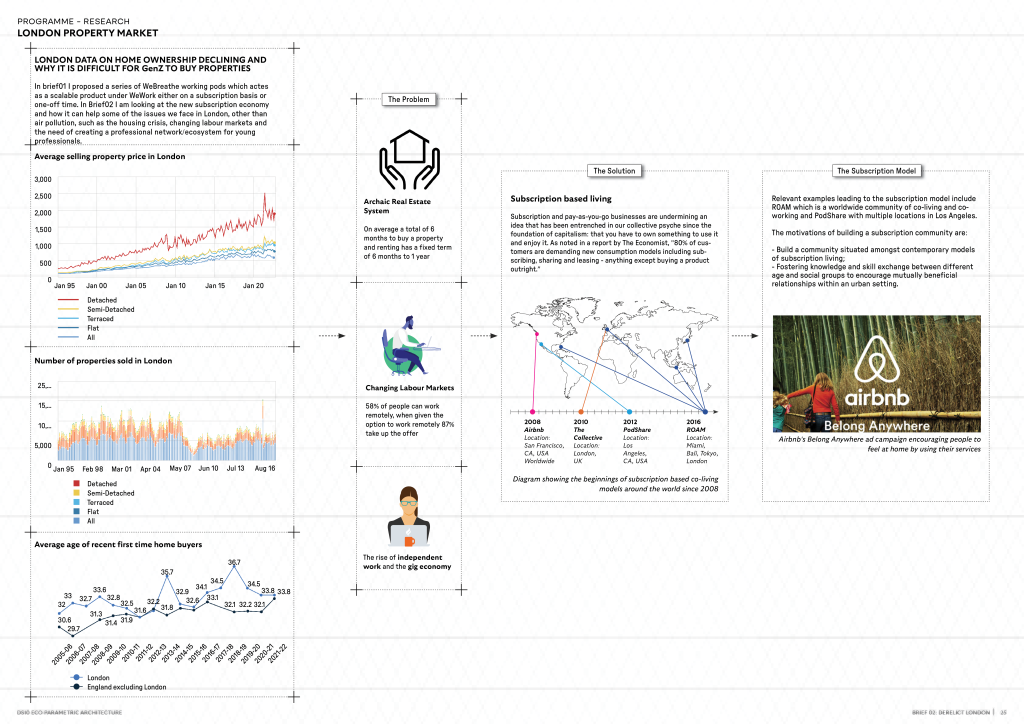

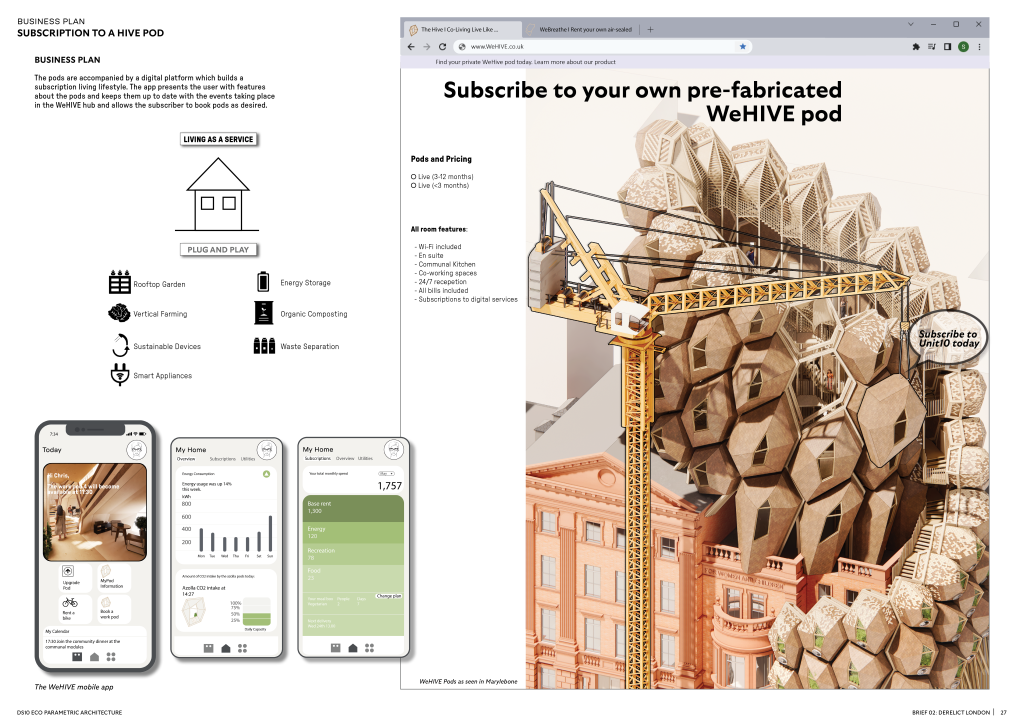

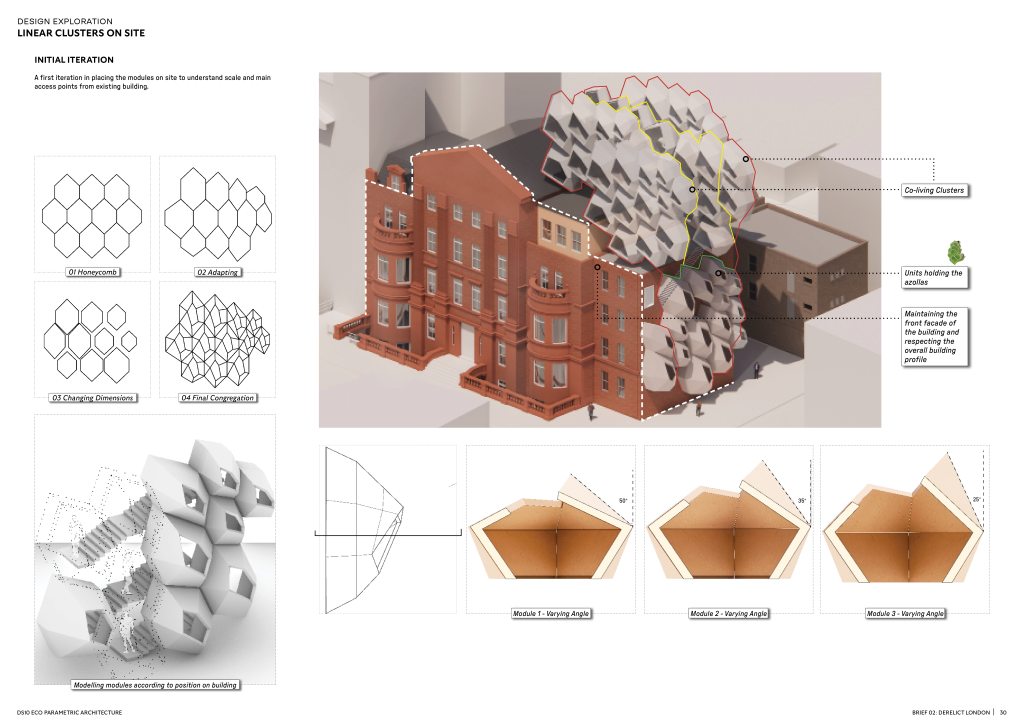

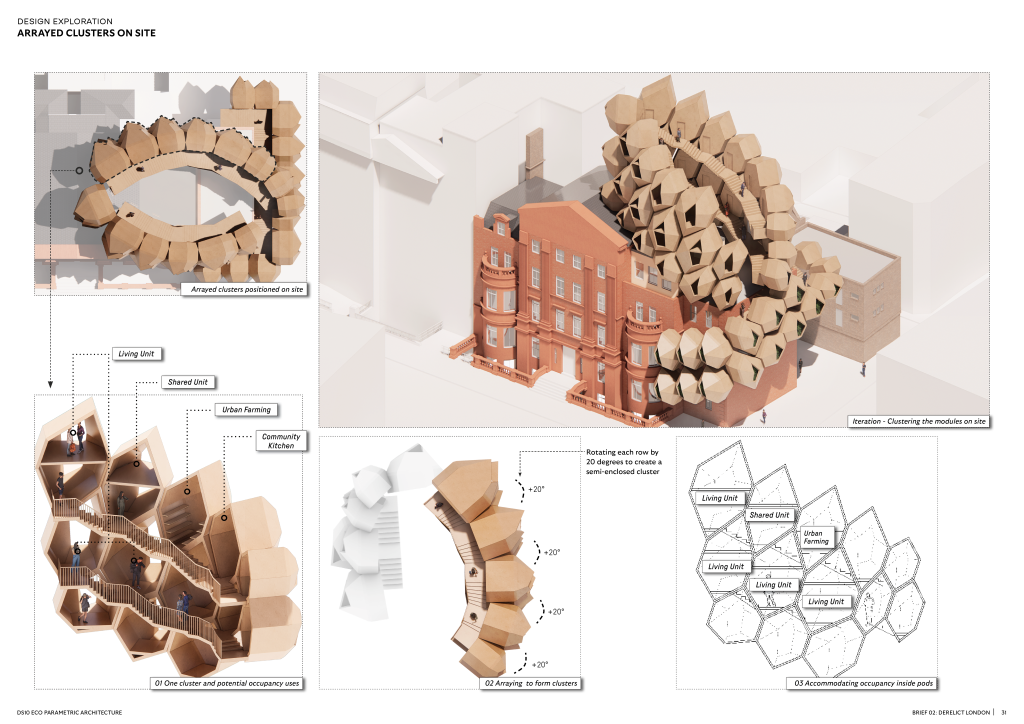

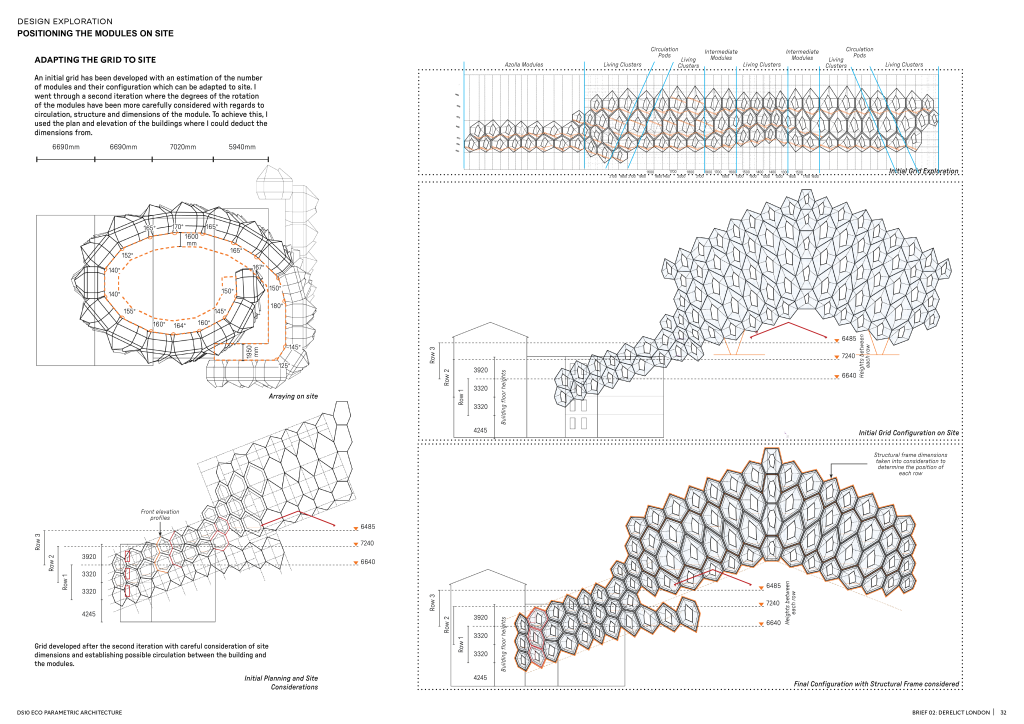

In my Brief01 proposal, a series of WeBreathe working pods acted as a scalable product under WeWork, available either on a subscription or on a one-off basis. In Brief02 I looked at the new subscription economy and how it can help with some of the issues we face in London beyond air pollution, such as the housing crisis, changing labour markets and the need to create a professional network and ecosystem for young professionals.

Subscription and pay-as-you-go businesses are undermining an idea that has been entrenched in our collective psyche since the foundation of capitalism: that you have to own something to use it and enjoy it. As noted in a report by The Economist, “80% of customers are demanding new consumption models including subscribing, sharing and leasing – anything except buying a product outright.”

The Subscription Model

Relevant precedents for the subscription model include ROAM, a worldwide co-living and co-working community, and PodShare, which has multiple locations in Los Angeles.

The motivations for building a subscription community are: